31 Post Implementation Survey Questions for Success

Explore post-implementation survey questions, with sample questions for six survey types, designed to gather feedback, measure success, and improve project results.

Post-implementation feedback tells you what happened after the big launch party ends and the real work begins. It helps you measure adoption, satisfaction, and ROI after a new software go-live, product launch, CRM rollout, or process change, while also revealing the smartest questions to ask when implementing new software next time. If you use the right implementation survey at the right moment, your team can refine onboarding, improve training, strengthen communication, and build a practical software implementation checklist that gets better with every rollout.

Introduction – Why Post-Implementation Surveys Are Critical to Project Success

What post-implementation feedback really tells you

Post-implementation surveys turn opinions into action.

When you launch something new, you need more than a thumbs-up and a celebratory screenshot in the team chat.

You need real feedback that shows whether people are using the solution, liking the experience, and getting enough value from it to justify the investment.

That is where an implementation survey becomes your secret weapon, minus the dramatic soundtrack.

A good post-launch survey helps you quickly measure three things that matter.

Adoption, which tells you whether people are actually using the system.

Satisfaction, which shows how users feel about the rollout, support, and daily experience.

ROI, which reveals whether the change is improving productivity, reducing cost, or moving business goals in the right direction.

These surveys are useful in common rollout scenarios.

A new software platform goes live and you need to know whether users can complete key tasks.

A product launch reaches customers and you want meaningful post-launch survey questions to capture early reactions.

A CRM rollout finishes and you need a questionnaire for CRM implementation that uncovers training gaps and workflow friction.

A new internal process is introduced and leadership wants a follow-up survey that shows whether the change actually stuck.

Plus, the best survey programs do not stop at one questionnaire.

They include follow-up survey questions at several points in the journey, so you can learn what happened right after launch, a few weeks later, and months down the road.

In the sections ahead, you will see the core survey types that help teams iterate efficiently, improve future rollouts, and ask better implementation questions every time.

Research shows user satisfaction is significantly linked to information-system performance (r=0.42), making post-implementation satisfaction questions especially valuable (ScienceDirect).

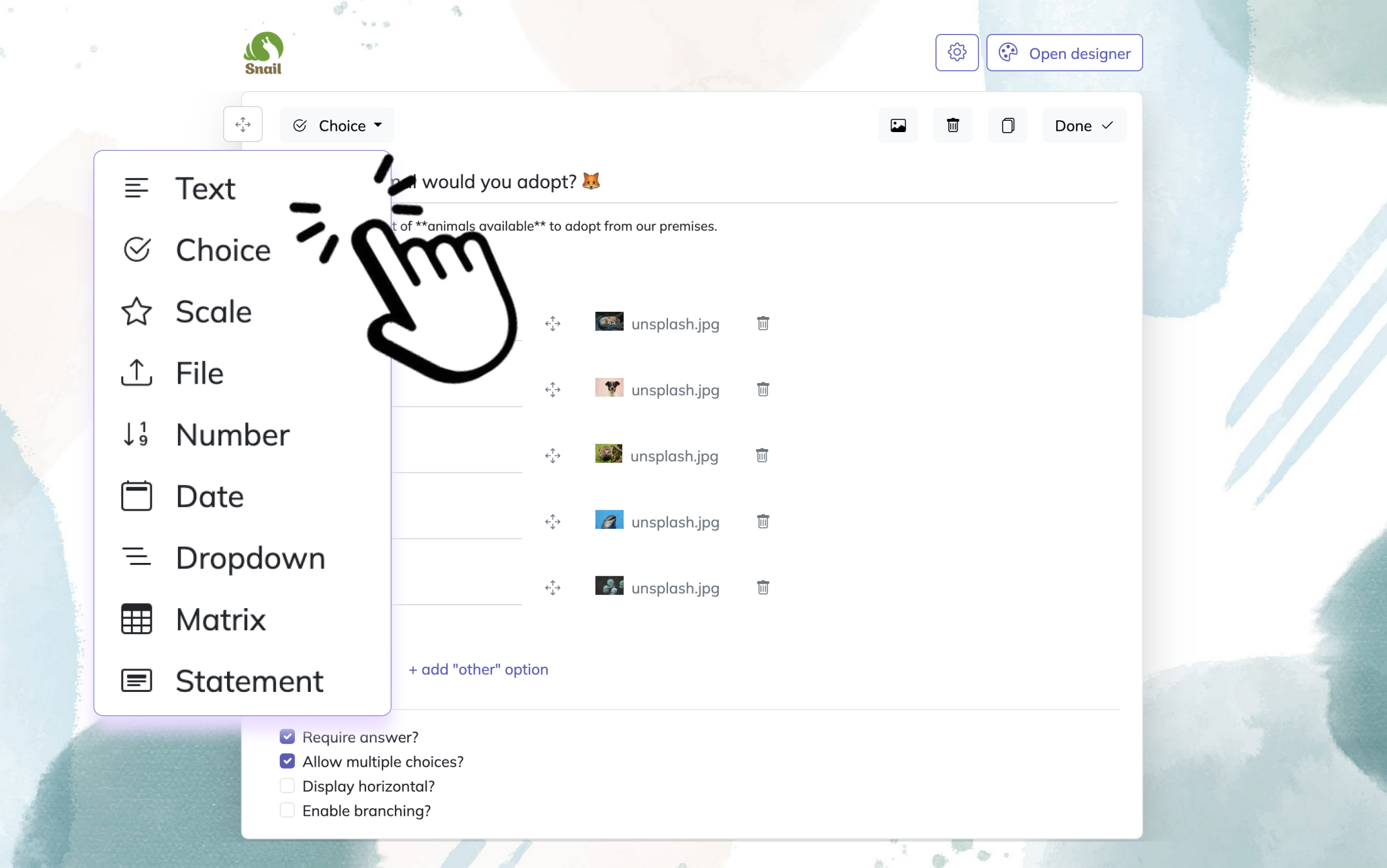

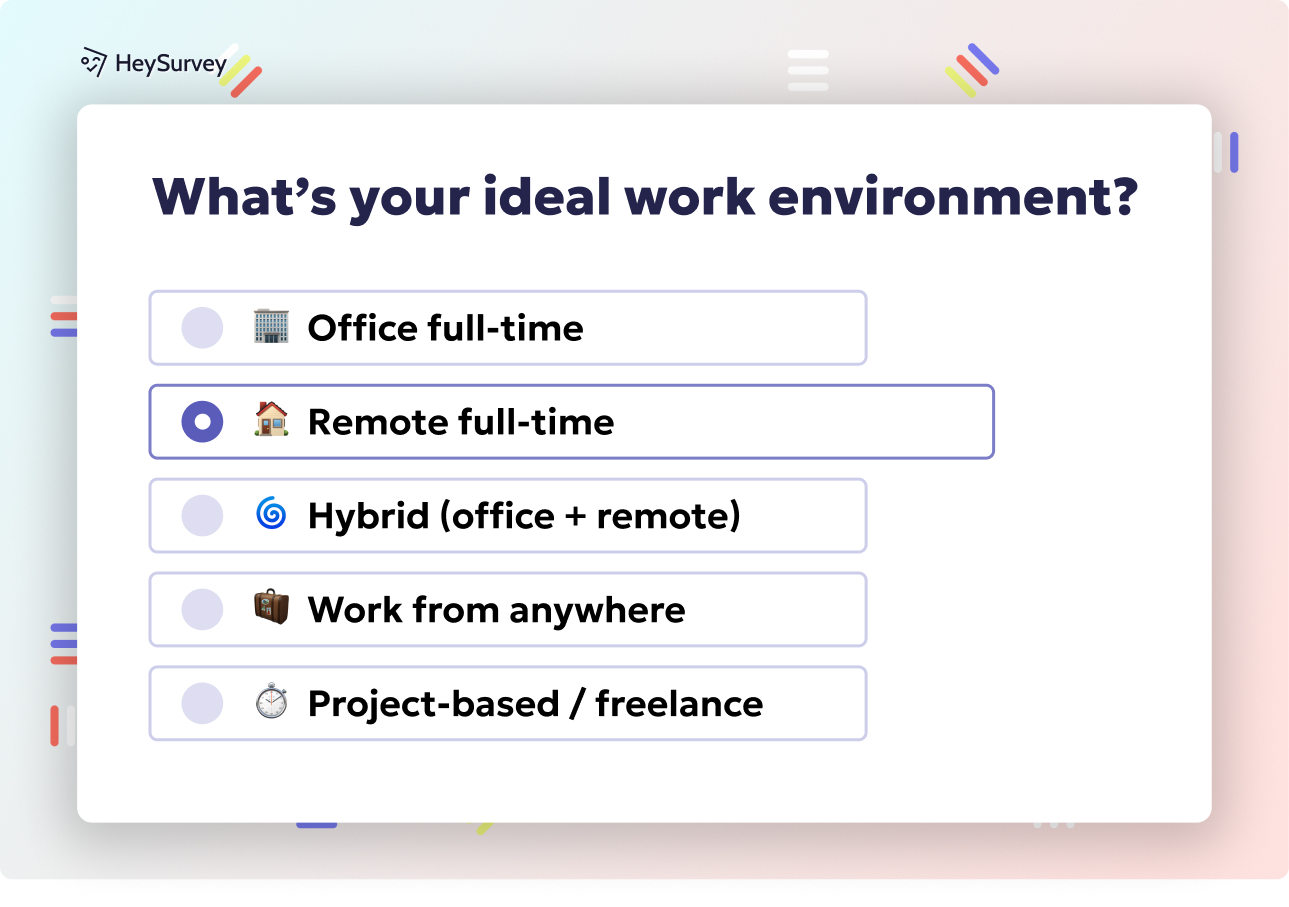

How to create a survey with HeySurvey

1. Create a new survey

Start by opening a template with the button below this guide, or begin from an empty sheet if you want full control. You can also create a survey by typing your questions directly. No account is needed to start building, so you can explore the online survey tool right away. Once your survey opens, you can rename it in the Survey Editor and begin shaping it into the survey you need.

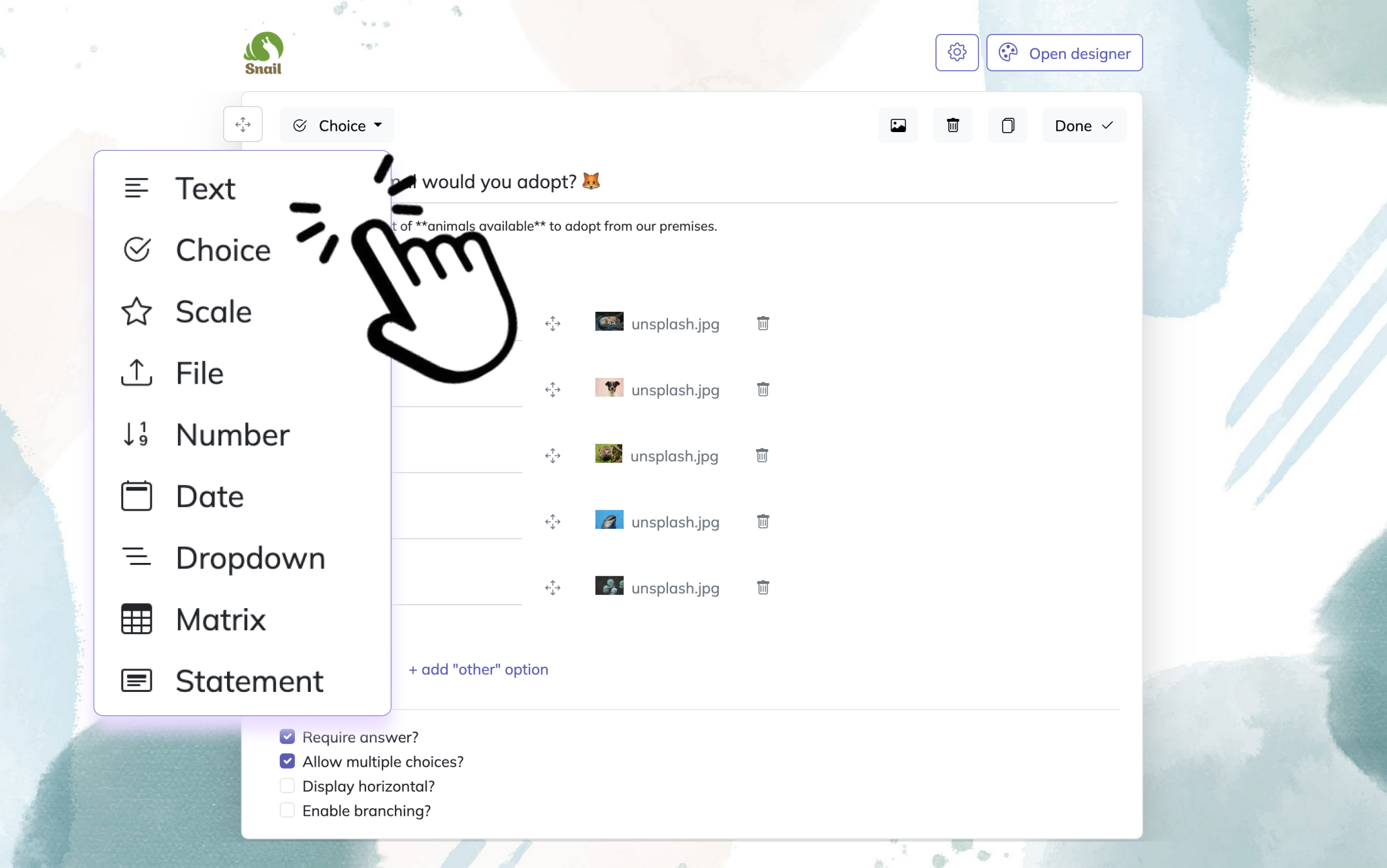

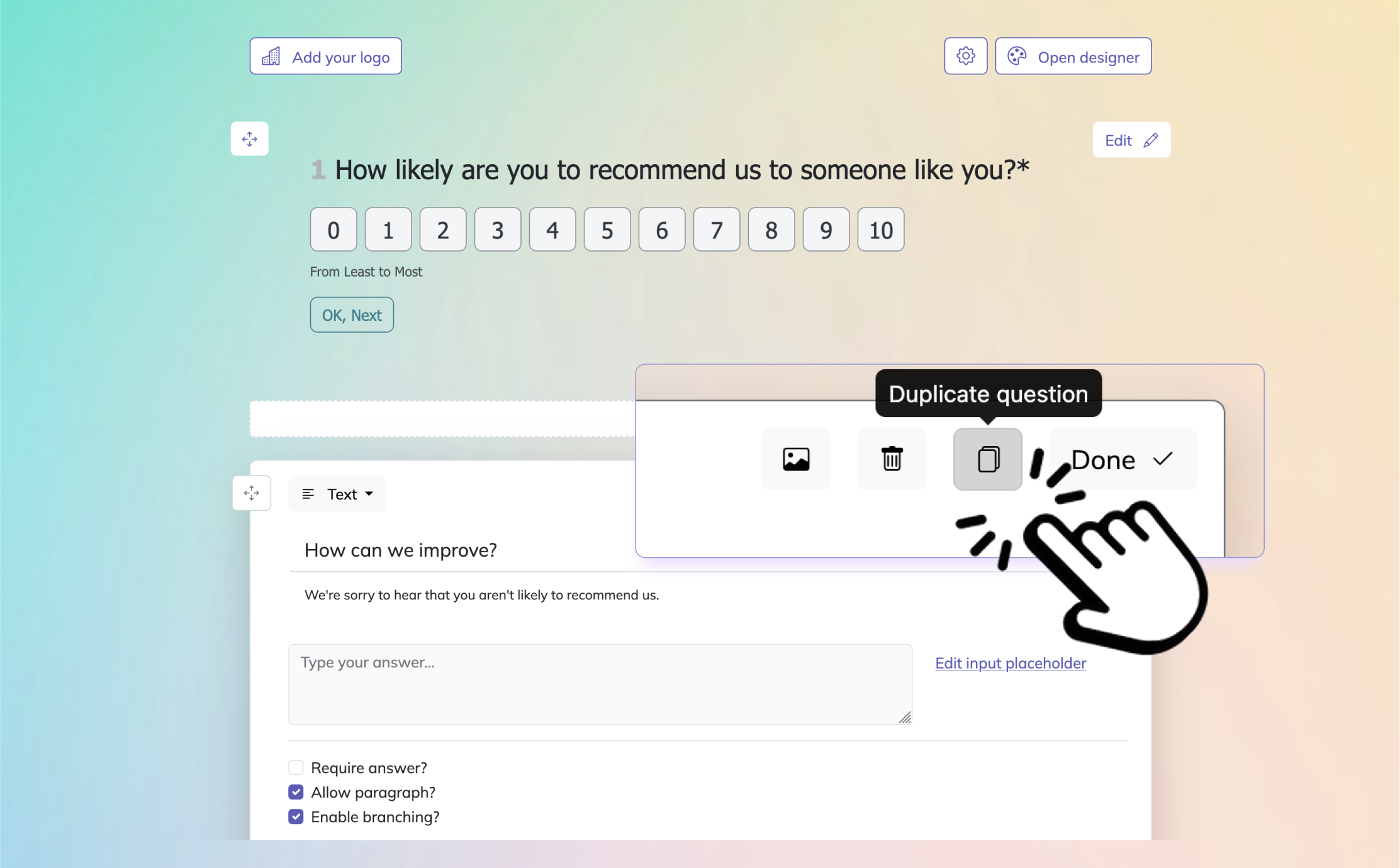

2. Add questions

Click Add Question to insert your first question, then choose the question type that fits your survey best. HeySurvey supports text, multiple choice, scales, numbers, dates, dropdowns, file uploads, and statement blocks. For each question, you can add a title, description, answer options, placeholders, and mark it as required if respondents must answer it before continuing. You can also add images to questions, duplicate questions to save time, and format text with simple markdown for a cleaner layout.

Bonus: Use the Designer Sidebar to apply branding and make the survey look like your organization. Upload your logo, adjust colors, fonts, backgrounds, and question card styles, or choose a one-question-per-page layout for a focused experience. In the Settings panel, you can define start and end dates, response limits, and a redirect URL after completion. If your survey needs it, set up branching so respondents can skip into different question paths based on their answers.

3. Publish survey

Before sharing, preview your survey to check how it looks on desktop or mobile. When everything is ready, click Publish to create a shareable link. Publishing requires an account, so make sure you’re signed in. After publishing, you can send the link to respondents or embed the survey on your website.

Software Adoption & Usability Survey

Why and when to use this survey type

Early adoption feedback catches friction before it becomes habit.

A software adoption and usability survey works best about 2 to 4 weeks after go-live.

That timing gives users enough time to click around, complete real tasks, get mildly annoyed by something, and hopefully recover before your survey lands in their inbox.

This survey is especially useful when rapid adoption drives value.

If you are rolling out SaaS tools, mobile apps, internal dashboards, or workflow systems, early experience matters because poor usability can crush momentum before users ever build confidence.

You are trying to answer a simple but high-stakes question.

Can people actually use the thing without needing a rescue mission from IT every afternoon?

This survey type helps you validate onboarding, task completion, navigation clarity, and feature discoverability.

It also reveals whether your launch materials were helpful or whether users are still guessing where basic actions live.

Here’s the thing: when people struggle early, they build work-arounds fast.

And once those work-arounds settle in, your shiny platform starts collecting digital dust.

That is why software adoption survey questions should focus on real behavior instead of vague impressions alone.

You want to know whether users can finish core tasks, find what they need, and feel more efficient than they did in the old system.

These insights also support your broader software implementation checklist.

If the same issue shows up in multiple rollouts, you can fix onboarding, update training, or redesign internal support before the next launch.

For teams wondering which questions to ask when implementing new software, this survey gives you practical answers grounded in daily use, not hopeful assumptions.

Sample questions

How easy was it to complete your first end-to-end task in the new software?

Which feature, if any, still feels confusing or hard to locate?

On a scale of 1 to 10, how intuitive is the overall interface?

What additional resources, such as videos, guides, or training, would improve your experience?

Compared with the previous system, has your efficiency improved, remained the same, or declined?

How this survey improves the next rollout

This survey helps you identify adoption blockers while they are still fixable.

You can use responses to improve onboarding flows, simplify menus, adjust permissions, or create smarter help content that addresses what users actually struggle with.

Plus, this is one of the most practical sets of implementation questions you can ask because it connects directly to daily behavior.

If users say the interface is intuitive but still cannot complete core workflows, you may have a training issue.

If they say efficiency has declined, you may have a design issue, a migration issue, or a process issue wearing a fake mustache.

Over time, this survey becomes one of the strongest tools in your user adoption strategy.

It helps you track whether launch success was real, or just a temporary burst of polite optimism.

Post-implementation surveys should prioritize user satisfaction and system quality, which strongly predict continued use and perceived net benefits after rollout (source).

Implementation Satisfaction Survey

Why and when to use this survey type

Satisfaction data helps you improve the rollout, not just the result.

An implementation satisfaction survey should be sent immediately after project close-out.

This is the moment when stakeholder memories are still fresh, which is helpful because nobody remembers timeline delays accurately after three months and two new crises.

This survey is different from a usability survey.

It is not mainly about the product experience after adoption.

It is about how the rollout itself was managed, including planning, communication, responsiveness, project governance, milestone tracking, and overall confidence in the implementation team.

That makes it one of the most valuable sets of implementation questions for project managers, consultants, internal transformation teams, and operations leaders.

You want to understand whether the rollout felt organized and professional, or whether it felt like everyone was building the plane while trying to serve snacks.

A strong implementation satisfaction survey helps you assess several areas.

How stakeholders felt about the overall process.

Whether the project met expected milestones and timeline commitments.

How clearly changes, risks, and expectations were communicated.

Which parts of the implementation created confidence.

Which parts should be improved in the next rollout.

This kind of feedback supports continuous improvement in a direct way.

If your project technically launched on time but stakeholders felt confused or unsupported, that still matters.

If your team was responsive and flexible during challenges, that matters too because strong execution builds trust for future changes.

Plus, when you compare results across multiple projects, patterns become visible.

You may discover that kickoff alignment is strong but handoff communication is weak, or that training is solid but executive sponsorship feels too quiet.

That is how an implementation satisfaction survey becomes more than a scorecard.

It becomes a repeatable learning tool for every future launch in your software implementation checklist.

Sample questions

How satisfied are you with the overall implementation process?

Did the project meet the agreed-upon timeline and milestones?

How effectively did the implementation team communicate changes and expectations?

What aspect of the implementation exceeded your expectations?

What is the one thing we should improve in our next implementation?

How this survey supports future implementations

The best use of this survey is not to admire a good score and move on.

It is to compare satisfaction results against actual delivery outcomes so you can see where perception and project reality differ.

For example, a rollout may meet its deadline but still receive poor marks for communication.

That tells you the issue is not scheduling but stakeholder visibility.

On top of that, the open-ended responses often deliver the best improvement ideas.

You will hear exactly where people felt supported, where they felt left out, and what would make the next experience smoother.

This is especially useful for teams building a reusable questionnaire for CRM implementation, ERP deployment, or internal systems rollout.

Once you capture recurring themes, you can standardize what works and stop repeating what clearly does not.

Training & Support Effectiveness Survey

Why and when to use this survey type

Training only works if people can do the job afterward.

A training and support effectiveness survey should be sent after formal training sessions or at the first major support checkpoint.

That might be a few days after training ends, or after users have had enough time to apply what they learned in real work.

This survey helps you verify whether knowledge transfer actually happened.

That sounds obvious, but plenty of training programs look polished on paper while users still leave wondering where to click first.

The goal is to learn whether training content was clear, relevant, well-timed, and connected to real tasks.

You also want to understand whether the support team is responsive enough once questions start rolling in.

If people cannot get help quickly, even good training starts to lose value.

This survey is especially important in the software implementation process because support quality heavily influences adoption.

Users may forgive a steep learning curve if help is fast and useful.

They are much less forgiving if every question disappears into a support inbox that feels like a black hole with branding.

This feedback also improves your software implementation checklist by highlighting where materials or support systems need refinement.

For example, users might need more role-specific guides, shorter how-to videos, more live practice, or clearer escalation paths.

That kind of detail is gold.

It tells you not only whether training happened, but whether training worked.

And if you are thinking through which questions to ask when implementing new software, this survey belongs high on the list because user confidence is one of the strongest leading indicators of long-term success.

Sample questions

How confident do you feel performing daily tasks after the training?

Were the training materials, including videos, manuals, and live sessions, clear and comprehensive?

How responsive has the support team been to your questions?

Which topics require deeper coverage in future sessions?

Rate the usefulness of the implementation checklist provided.

How to use the feedback effectively

Survey responses should shape both your content and your support model.

If users say they understand concepts but still feel unprepared for daily work, your training may be too theoretical.

If they praise the trainer but criticize the materials, then your content library probably needs an upgrade.

Plus, support feedback often reveals operational problems that project teams miss.

Maybe response times are slow, or maybe answers are technically correct but too complex for everyday users.

That happens more than teams like to admit, usually right after someone proudly says the support model is "robust."

You can also segment responses by role.

Managers, power users, and front-line staff often have very different views of training quality, and combining them into one average score can hide important friction.

Used well, this survey helps you build smarter onboarding, stronger documentation, and a support process people trust.

Research shows user training significantly improves software adoption intention and perceived usefulness, making post-implementation confidence and support questions strong success indicators (ScienceDirect).

Change Management & Communication Survey

Why and when to use this survey type

People support change faster when they understand it sooner.

A change management and communication survey helps you understand how well the transition was explained and supported.

It should go to both end-users and managers because the two groups often experience the same rollout in very different ways.

This survey focuses on the human side of implementation.

That includes the clarity of messaging, the visibility of leadership support, the usefulness of communication channels, and the extent to which stakeholders understood the purpose behind the change.

Here’s the thing: people rarely resist change just because it is new.

They usually resist because it feels unclear, inconvenient, or suspiciously announced in a 4:58 p.m. email.

That is why this survey matters.

It tells you whether your communication strategy created alignment or simply created more reading.

A good change management survey can uncover several valuable insights.

Whether users understood why the implementation was happening before rollout.

Whether goals and success measures were communicated clearly.

Which communication channels worked best for updates.

Whether leadership support was visible and credible.

What information people still needed to feel prepared.

This type of feedback is especially important for large-scale system rollouts, process changes, mergers, reorganizations, and any project where adoption depends on trust as much as training.

It also complements implementation satisfaction survey results because even a well-run project can struggle if communication misses the mark.

And for teams building better implementation questions, this survey helps reveal what stakeholders needed to hear earlier, more clearly, or more often.

That insight makes your next rollout smoother before the first training session even begins.

Sample questions

Did you understand why the change was necessary before rollout?

How clearly were the goals and success metrics communicated to you?

Which communication channel, such as email, intranet, or town hall, worked best for updates?

Did leadership visibly support the implementation?

What additional information would have eased the transition?

Why these insights matter long after launch

Communication feedback should not sit in a slide deck and quietly age.

It should feed directly into your rollout playbook so future projects start with stronger messaging and better stakeholder alignment.

For example, if managers say they lacked talking points, you can create manager-ready briefings.

If end-users say email updates were ignored but short intranet videos worked well, you can shift your channel strategy next time.

On top of that, this survey gives you an early warning for adoption risks.

If people never understood the "why," they are more likely to disengage later, even if the product itself works well.

This makes change communication feedback a serious driver of long-term adoption, not just a nice-to-have project wrap-up activity.

Business Impact & ROI Survey

Why and when to use this survey type

ROI surveys connect user experience to business results.

A business impact and ROI survey should usually be sent 3 to 6 months after launch.

By then, users have had enough time to settle into routines, managers have seen performance patterns, and you can start connecting system use to measurable outcomes.

This survey helps answer the question every leadership team eventually asks.

Was the implementation worth it?

That question becomes even more important after major software deployments, CRM rollouts, workflow redesigns, and product launches where cost, time, and internal effort were significant.

It also aligns well with post-transaction and post-product-launch survey questions because you are looking beyond first impressions and into actual value creation.

You want to identify whether the solution improved productivity, reduced errors, accelerated revenue, lowered support burden, or created other measurable gains.

At the same time, you want to know whether the rollout introduced hidden costs or unintended side effects.

Maybe a tool saved time for one team but created manual cleanup for another.

Maybe sales velocity improved while reporting became more complex.

That is why this survey should include both structured ratings and open text responses.

The numbers show trend direction.

The comments explain what the numbers are trying to say in plain English.

Plus, this survey helps you decide what to optimize next.

If ROI is strong but uneven across departments, your next step may be targeted adoption support.

If the business value is promising but not yet fully realized, you may need additional integration, process refinement, or feature enablement.

In short, this survey helps move the conversation from "Did users like it?" to "Did the business gain from it?"

Sample questions

Have you noticed measurable time savings in your workflow? Please estimate.

Which business metric, such as sales velocity or error rate, has improved the most?

Are there any unintended negative impacts we should address?

Would you recommend continuing to invest in this solution?

What ROI milestones should we target next quarter?

How to turn responses into useful decisions

The biggest mistake with ROI surveys is treating them like opinion polls.

They work best when paired with operational data, such as adoption logs, support ticket volume, cycle times, error rates, or revenue metrics.

That gives you a more complete picture of business impact.

For example, if users report time savings and data also shows faster completion rates, that is strong evidence of value.

If responses are positive but hard metrics have not moved, the benefits may be more perceived than proven, which is still useful to know.
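The comparison described above can be sketched in a few lines of Python. This is only an illustration, not a standard method: the function name `classify_benefit`, the 60% "positive share" cutoff, and the 5% minimum metric change are all assumptions you would tune to your own data.

```python
def classify_benefit(positive_share, metric_before, metric_after,
                     lower_is_better=True, min_share=0.6, min_change=0.05):
    """Compare survey sentiment with an operational metric.

    positive_share: fraction of respondents reporting a benefit (0.0 to 1.0).
    metric_before / metric_after: the same operational metric measured
    before and after rollout (for example, average cycle time in hours).
    The thresholds are illustrative assumptions, not industry standards.
    """
    if metric_before == 0:
        raise ValueError("metric_before must be non-zero")
    # Relative improvement, oriented so that a positive value is always good.
    change = (metric_before - metric_after) / metric_before
    if not lower_is_better:
        change = -change
    perceived = positive_share >= min_share
    measured = change >= min_change
    if perceived and measured:
        return "supported by data"
    if perceived:
        return "perceived, not yet proven"
    if measured:
        return "measured, not yet felt by users"
    return "no clear benefit yet"


# Users report time savings and cycle time dropped from 10 to 8 hours.
strong = classify_benefit(0.8, metric_before=10.0, metric_after=8.0)
# Users are positive, but cycle time barely moved.
soft = classify_benefit(0.8, metric_before=10.0, metric_after=9.9)
```

The last two labels go slightly beyond the two cases in the text; they are included so the function returns something sensible for every combination.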

Plus, open responses often uncover the next layer of opportunity.

You may learn that one feature is driving most of the value while another is barely touched.

That insight can shape roadmaps, training plans, and investment priorities.

If you use post-transaction or post-product-launch survey questions elsewhere in your organization, this survey can also align implementation feedback with broader customer and operational measurement.

That creates a more connected view of performance, which is much nicer than five departments arguing over five different dashboards.

Follow-Up / Long-Term Adoption Survey

Why and when to use this survey type

Long-term adoption tells you whether change actually stuck.

A follow-up or long-term adoption survey should be sent 6 to 12 months after rollout.

This is where you learn whether the implementation delivered lasting behavior change or whether users quietly drifted back to spreadsheets, side tools, and old habits wearing new labels.

This survey is essential because initial adoption can be misleading.

Right after launch, usage often spikes because training is fresh, leadership is watching, and everyone knows the project team still has a pulse.

Months later, real behavior becomes visible.

That is when user adoption survey questions help you understand sustained usage, feature depth, work-arounds, and emerging enhancement needs.

A long-term follow-up survey also helps you identify whether users have matured in the platform.

Maybe they are using more advanced features.

Maybe they have abandoned certain workflows.

Maybe they found creative shortcuts that signal either innovation or a system gap big enough to drive a forklift through.

This survey is especially useful for SaaS products, enterprise systems, customer platforms, internal productivity tools, and CRM deployments.

It also works well as part of a reusable questionnaire for CRM implementation because CRM success often depends on long-term discipline, not just early training completion.

At this stage, your goal is to understand the quality of adoption, not just the existence of it.

You want to know what people use, what they ignore, where the system still causes friction, and what improvements would create more value going forward.

That makes this survey a vital part of continuous improvement.

It tells you what happened after the launch excitement faded and normal work took over.

Sample questions

Which three features do you use most frequently today?

Which feature have you stopped using and why?

Have you created any work-arounds outside the platform? Explain.

How has the tool changed your team’s KPIs over the past six months?

What new functionality would deliver the most value going forward?

What this survey reveals that earlier ones cannot

Long-term adoption surveys reveal patterns that shorter-term surveys simply miss.

For example, a feature may seem successful at 30 days because users tried it, but by month eight it may be abandoned due to workflow friction or poor integration.

That kind of delayed truth is incredibly valuable.

This survey also surfaces innovation opportunities.

Users who have lived with a tool for months often know exactly what would make it better, faster, or easier to scale.

Their ideas are usually more practical than the guesses made during rollout planning.

On top of that, repeated follow-up surveys create a strong feedback culture.

Users see that implementation is not a one-time event but an evolving process where their input shapes future enhancements.

That alone can improve engagement.

When people know feedback leads to action, response quality usually improves too.

And that means your next round of implementation questions gets even more useful.

How to Distribute and Analyze Post-Implementation Surveys

Choosing the right channel for the right audience

Distribution strategy affects response quality as much as question quality.

You can write brilliant surveys, but if they reach people in the wrong place at the wrong time, response rates will flop like a dramatic fish.

That is why distribution deserves real planning.

The three most common channels are email, in-app pop-ups, and SMS.

Each works best for different user groups and moments in the adoption journey.

Email blasts work well for employees, customers, managers, and project stakeholders who need a little context before answering.

In-app pop-ups are useful when you want feedback tied to a recent action, such as completing onboarding or using a feature.

SMS can work for mobile-first audiences or time-sensitive pulse surveys where speed matters more than detail.

You should also consider user cohort differences.

End-users may respond better to in-app prompts because the experience is fresh.

Executives and managers may prefer email because it gives them space to reflect on timelines, business impact, and implementation satisfaction survey topics.

Response timing, reminders, and useful metrics

Survey timing matters almost as much as survey content.

A good rule is to keep response windows between 5 and 10 business days, depending on audience size and urgency.

You can send one reminder halfway through the window and a final reminder near the close, but do not overdo it unless your goal is to become the most ignored sender in the company.
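If you want to plan those dates rather than eyeball them, a small helper can do it. The sketch below assumes a Monday-to-Friday work week and ignores public holidays; the names `add_business_days` and `reminder_schedule`, and the choice to send the final reminder one business day before close, are illustrative assumptions.

```python
from datetime import date, timedelta


def add_business_days(start: date, days: int) -> date:
    """Return the date `days` business days after `start` (Mon-Fri, holidays ignored)."""
    current = start
    remaining = days
    while remaining > 0:
        current += timedelta(days=1)
        if current.weekday() < 5:  # 0-4 are Monday through Friday
            remaining -= 1
    return current


def reminder_schedule(send_date: date, window_days: int = 10) -> dict:
    """Plan one midpoint reminder and one final reminder for a survey window."""
    if not 5 <= window_days <= 10:
        raise ValueError("window_days should be between 5 and 10 business days")
    return {
        "send": send_date,
        "midpoint_reminder": add_business_days(send_date, window_days // 2),
        # Assumption: "near the close" means one business day before closing.
        "final_reminder": add_business_days(send_date, window_days - 1),
        "close": add_business_days(send_date, window_days),
    }


# Example: a survey sent on Monday, 3 June 2024 with a 10-business-day window.
schedule = reminder_schedule(date(2024, 6, 3), window_days=10)
```

With that example, the midpoint reminder lands on 10 June, the final reminder on 14 June, and the survey closes on 17 June.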

When responses come in, start with quick-win metrics that are easy to compare over time.

CSAT (customer satisfaction score) helps measure general satisfaction with the rollout or support experience.

The System Usability Scale (SUS) score gives you a structured usability benchmark for software experiences.

Net Adoption Rate can help you track how many users moved from access to active, meaningful use.
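Here is a minimal sketch of how these three metrics might be calculated. The SUS scoring follows the standard ten-item method; the CSAT convention (share of 4s and 5s on a 5-point scale) is a common one but not the only one; and because this article does not define Net Adoption Rate precisely, the `adoption_rate` formula below (active users divided by users with access) is an assumption.

```python
def csat(ratings, satisfied_min=4):
    """Percent of 1-5 ratings at or above `satisfied_min` (a common CSAT convention)."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    satisfied = sum(1 for r in ratings if r >= satisfied_min)
    return 100.0 * satisfied / len(ratings)


def sus_score(answers):
    """Standard SUS score (0-100) for one respondent's ten answers on a 1-5 scale."""
    if len(answers) != 10:
        raise ValueError("SUS needs exactly ten answers")
    total = 0
    for item, answer in enumerate(answers, start=1):
        # Odd-numbered items are positively worded, even-numbered negatively.
        total += (answer - 1) if item % 2 == 1 else (5 - answer)
    return total * 2.5


def adoption_rate(active_users, users_with_access):
    """Assumed definition: percent of users with access who are actively using the tool."""
    if users_with_access <= 0:
        raise ValueError("users_with_access must be positive")
    return 100.0 * active_users / users_with_access


rollout_csat = csat([5, 4, 3, 2, 5])      # 3 of 5 ratings are 4 or higher
neutral_sus = sus_score([3] * 10)         # all-neutral answers
rollout_adoption = adoption_rate(150, 200)
```

Average the per-respondent SUS scores to get a benchmark for the whole rollout, and decide up front what counts as an "active" user so the adoption figure stays comparable between surveys.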

You should also segment results by role, department, geography, or user maturity.

That is where the story gets interesting.

An average score may look fine, but segmentation may reveal that new users are thriving while experienced users are frustrated, or that one team is seeing ROI while another is stuck.
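To see how an average can hide that kind of split, here is a small sketch using only the Python standard library. The response records, the field names `role` and `score`, and the helper `mean_by_segment` are made up for illustration.

```python
from collections import defaultdict
from statistics import mean


def mean_by_segment(responses, segment_key, score_key="score"):
    """Average the score within each segment (role, department, and so on)."""
    groups = defaultdict(list)
    for response in responses:
        groups[response[segment_key]].append(response[score_key])
    return {segment: round(mean(scores), 2) for segment, scores in groups.items()}


# Hypothetical satisfaction ratings on a 1-5 scale.
responses = [
    {"role": "new user", "score": 5},
    {"role": "new user", "score": 4},
    {"role": "experienced user", "score": 2},
    {"role": "experienced user", "score": 3},
]

overall = mean(r["score"] for r in responses)
by_role = mean_by_segment(responses, "role")
```

In this made-up data the overall average is a comfortable 3.5, while the per-role view shows new users at 4.5 and experienced users at 2.5.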

Plus, the best teams feed these findings back into a software implementation checklist.

That way, every survey result sharpens future launch planning, communication, training, and support.

Best Practices – Dos and Don’ts for High-Impact Post-Implementation Surveys

What to do if you want better responses and better insight

Great surveys are short, specific, and easy to act on.

If you want useful answers, respect people’s time.

That means keeping surveys under 10 minutes, writing clear questions, and making every item earn its place.

A few strong questions beat a bloated questionnaire every time.

Here are the core dos for high-impact surveys.

Do personalize invitations so people know why their feedback matters.

Do segment questions by role because executives, administrators, and front-line users experience implementations differently.

Do combine ratings with open-ended "why" prompts so you understand both the score and the reason behind it.

Do close the feedback loop by sharing what you learned and what actions will follow.

Do treat surveying as iterative by using multiple checkpoints instead of a one-and-done approach.

That last point matters a lot.

One survey cannot capture onboarding, training quality, communication effectiveness, and long-term ROI equally well.

Different moments need different implementation questions.
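One way to make those checkpoints concrete is to turn the timing guidance from the survey sections above into a calendar. The sketch below covers only the three surveys whose timing is tied to go-live, and it approximates a month as 30 days; the `SURVEY_WINDOWS` table and `survey_calendar` helper are illustrative names, not part of any tool.

```python
from datetime import date, timedelta

# Send windows as (earliest, latest) days after go-live.
# Assumption: one month is approximated as 30 days.
SURVEY_WINDOWS = {
    "Software Adoption & Usability": (14, 28),      # 2 to 4 weeks
    "Business Impact & ROI": (90, 180),             # 3 to 6 months
    "Follow-Up / Long-Term Adoption": (180, 360),   # 6 to 12 months
}


def survey_calendar(go_live: date) -> dict:
    """Map each survey type to its (earliest, latest) send date."""
    return {
        name: (go_live + timedelta(days=first), go_live + timedelta(days=last))
        for name, (first, last) in SURVEY_WINDOWS.items()
    }


# Example: a go-live on 1 January 2024.
plan = survey_calendar(date(2024, 1, 1))
```

The implementation satisfaction and training surveys are left out on purpose because they are triggered by project close-out and training sessions rather than by a fixed offset from launch.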

What to avoid if you do not want garbage data

There are also a few common mistakes that weaken results quickly.

Do not use leading language that nudges people toward positive answers.

Do not ignore mobile optimization because many users will answer on a phone, often while doing six other things.

Do not delay sharing results because silence makes people think their feedback disappeared into a corporate sock drawer.

Do not overload surveys with jargon that confuses occasional users.

Do not ask broad questions when a targeted one would work better.

Here’s the thing: survey design is really decision design.

If your questions are vague, your insights will be vague too.

If your survey is long, clunky, or clearly generic, response quality drops fast.

Whether you are building post-transaction survey questions, post-product-launch survey questions, or a questionnaire for CRM implementation, the best practice is the same.

Make it easy to answer, easy to analyze, and easy to convert into action.

Instead of treating feedback as a final checkbox, treat it as part of your operating rhythm.
