27 Survey Questions Mistakes to Avoid

Discover sample questions that show which survey question mistakes to avoid, so you can prevent common errors, improve responses, and create better surveys fast.

Poorly written survey questions do more than look sloppy. They can quietly bend your results until they tell a comforting story instead of the truth. Pew Research Center notes that question wording and practical difficulties can introduce error or bias into survey findings, and its experiments show that wording and order can change how people answer. (pewresearch.org) If you want reliable customer feedback and smarter decisions, you need to spot the most common survey mistakes before they ship. This guide breaks down seven major survey question mistakes, with bad examples, quick fixes, and practical do’s and don’ts you can use right away, especially if you build surveys in an online survey maker.

Leading & Loaded Questions – The Fast Track to Biased Data

Biased wording poisons good data fast.

If you want honest answers, your survey has to stop flirting with the respondent.

Leading questions nudge people toward a preferred answer, while a loaded question sneaks in assumptions or emotionally charged language that can pressure people into responding a certain way.

That difference matters.

A leading question gently pushes.

A loaded question arrives wearing heavy boots and stomps on neutrality.

Pew Research Center emphasizes that question wording sits at the center of sound survey research because poorly worded or leading questions can skew results. (pewresearch.org)

Why & When This Mistake Happens

This is one of the most common survey mistakes because teams often care deeply about the brand, the campaign, or the outcome.

You see it all the time in customer feedback question mistakes, especially when a company writes questions that sound like mini love letters to its own product.

Marketing teams may want validation.

Product teams may want proof.

Leadership may want a dashboard that says everyone is thrilled.

That is how you end up with biased survey questions dressed up as “insight.”

NPS follow-ups are a danger zone here.

So are political polls, employee opinion surveys, and post-purchase forms where the business already assumes the experience was wonderful.

Here’s the thing.

When your question contains praise, blame, or an assumption, respondents stop reporting and start reacting.

Some people will agree just to move on.

Others will push back simply because the wording annoys them.

Either way, the data gets wobbly.

On top of that, loaded wording can make respondents feel judged.

That increases dropout risk and lowers trust, which is one of the sneakiest problems with surveys.

5 Sample Bad Questions To Avoid

How satisfied are you with our amazing customer service?

Don’t you agree our new app is the best on the market?

How much did our outstanding staff improve your visit today?

Why do you oppose harmful plastic straw bans?

Which brilliant features of our product do you value most?

These are classic examples of bad survey questions because they inject praise, assumptions, or emotional framing.

They do not ask for opinion cleanly.

They hint at the “right” answer like a teacher hovering over your shoulder.

Quick Fix / Better Alternatives

The fix is gloriously boring.

Use neutral wording.

Ask about one topic at a time and remove emotional adjectives, implied conclusions, and argumentative phrases.

For example, instead of asking, “How satisfied are you with our amazing customer service?” ask:

- How satisfied or dissatisfied are you with the customer service you received?

That version gives the respondent room to answer honestly.

It also supports cleaner reporting because “amazing” is not doing the statistical heavy lifting anymore.

If you want richer detail, add a neutral follow-up:

- What, if anything, influenced your rating?

Plus, train everyone involved in survey production to scan for hidden persuasion.

If a question sounds like it belongs in an ad, it does not belong in a survey.
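If you want a first-pass helper for that scan, a small script can flag the obvious offenders before a human review. This is a minimal sketch, not a bias detector: the word list, the opener list, and the function name `flag_persuasion` are all assumptions made up for illustration, and a clean result does not prove a question is neutral.

```python
import re

# Hypothetical starter list; extend it with the praise and blame words your team tends to use.
LOADED_WORDS = {"amazing", "outstanding", "brilliant", "best", "harmful", "revolutionary"}

# Hypothetical openers that presuppose agreement.
LEADING_OPENERS = ("don't you agree", "wouldn't you say", "isn't it true")


def flag_persuasion(question: str) -> list[str]:
    """Return the loaded words and leading openers found in a question."""
    # Normalize curly apostrophes so "Don’t" and "Don't" are treated the same.
    text = question.lower().replace("’", "'")
    words = re.findall(r"[a-z']+", text)
    flags = [word for word in words if word in LOADED_WORDS]
    flags += [opener for opener in LEADING_OPENERS if text.startswith(opener)]
    return flags


if __name__ == "__main__":
    for q in [
        "How satisfied are you with our amazing customer service?",
        "Don’t you agree our new app is the best on the market?",
        "How satisfied or dissatisfied are you with the customer service you received?",
    ]:
        print(flag_persuasion(q), "<-", q)
```

Anything the script flags still needs a person to decide whether the word is doing persuasion work or is simply part of the topic.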

Pew Research Center says poorly worded or leading survey questions can skew results, making neutral wording central to sound survey research. (pewresearch.org)

How to create this survey in HeySurvey

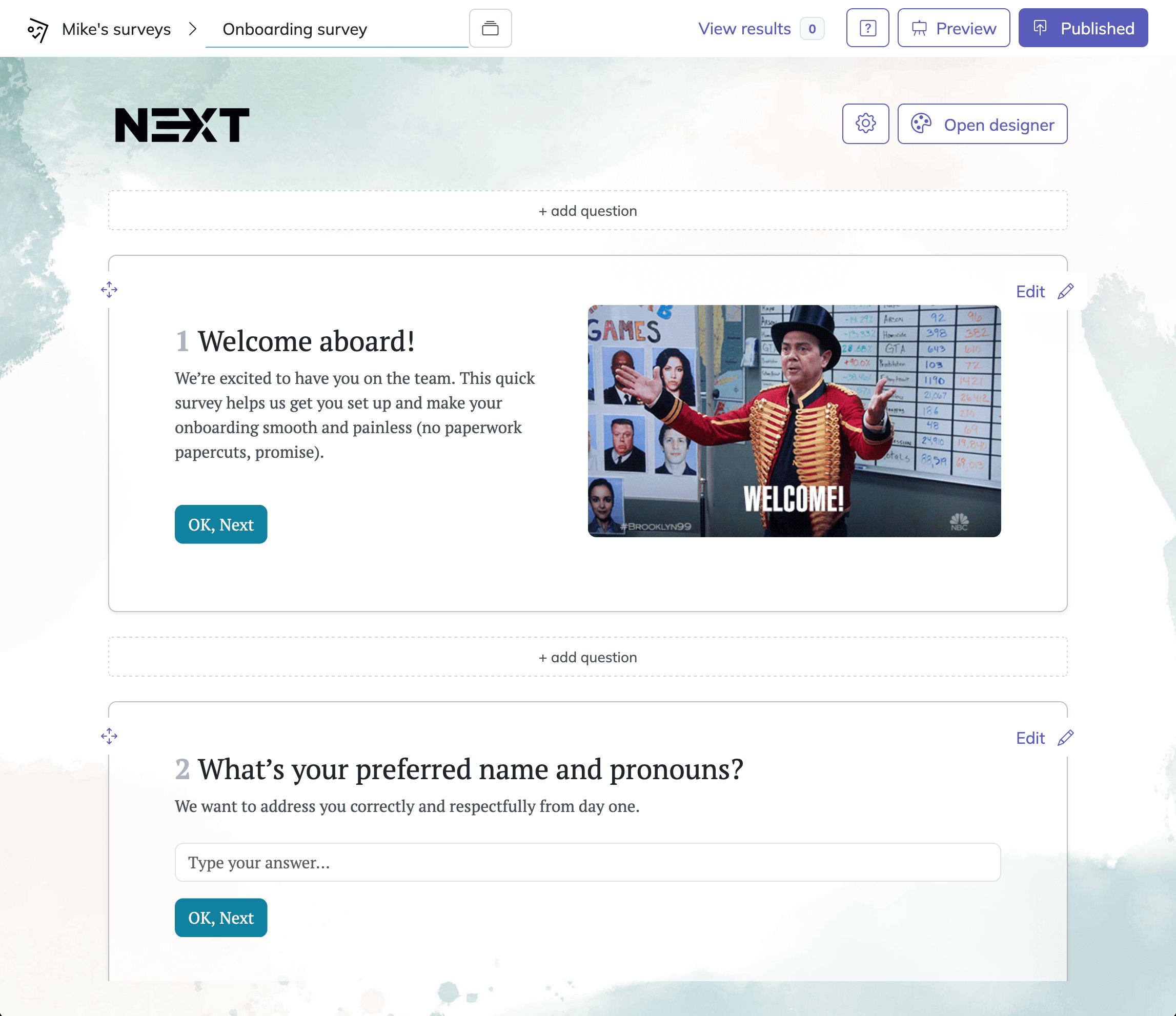

You can start right away by opening a template, or begin from a blank survey if you want full control. HeySurvey works in your browser, so there’s nothing to install.

1. Create a new survey

Click Create survey and choose how you want to begin: use a template, start with an empty sheet, or paste in questions using text input. Once the editor opens, you can rename the survey to match your project. If you already have a suitable template, opening it is the fastest way to get started.

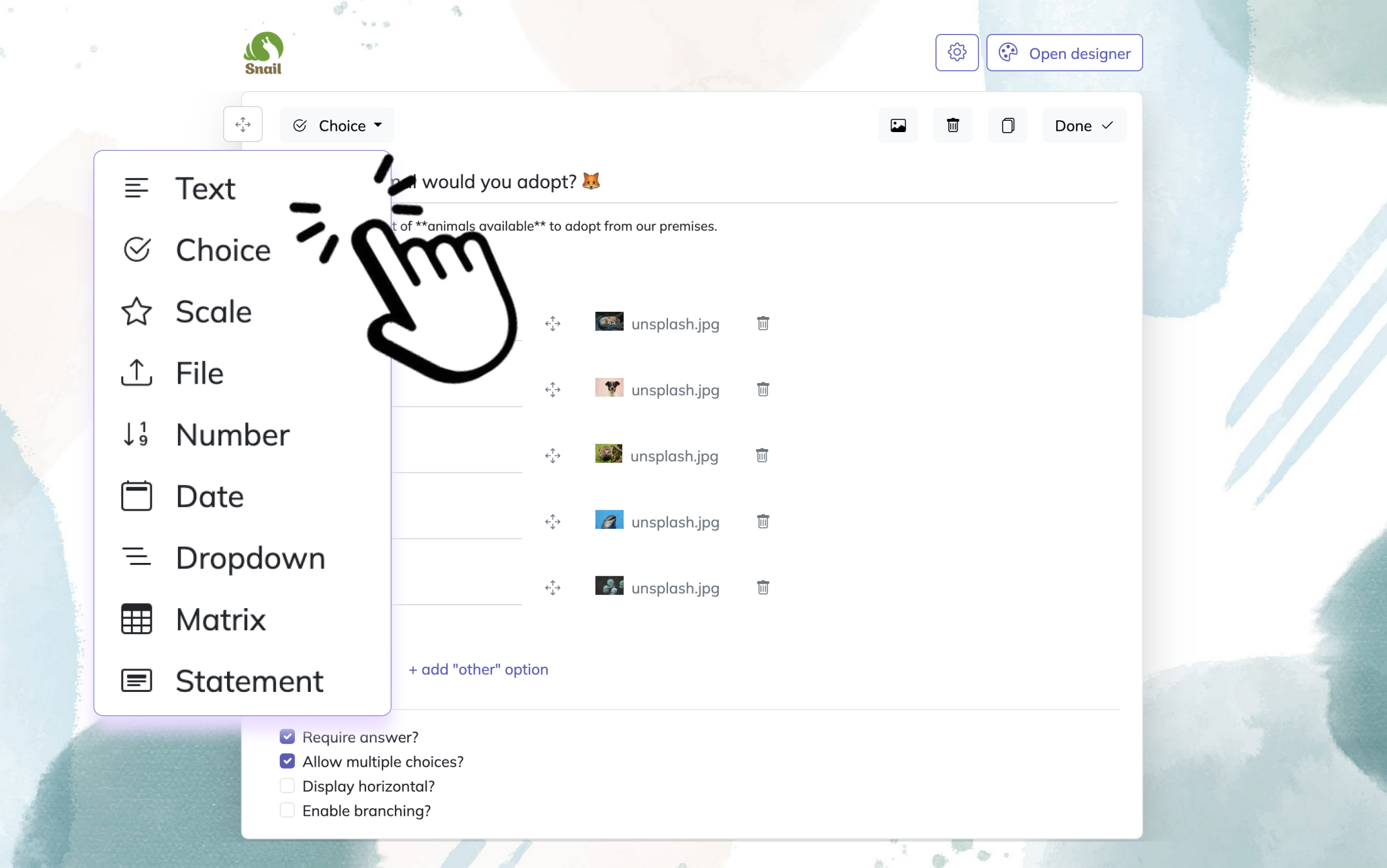

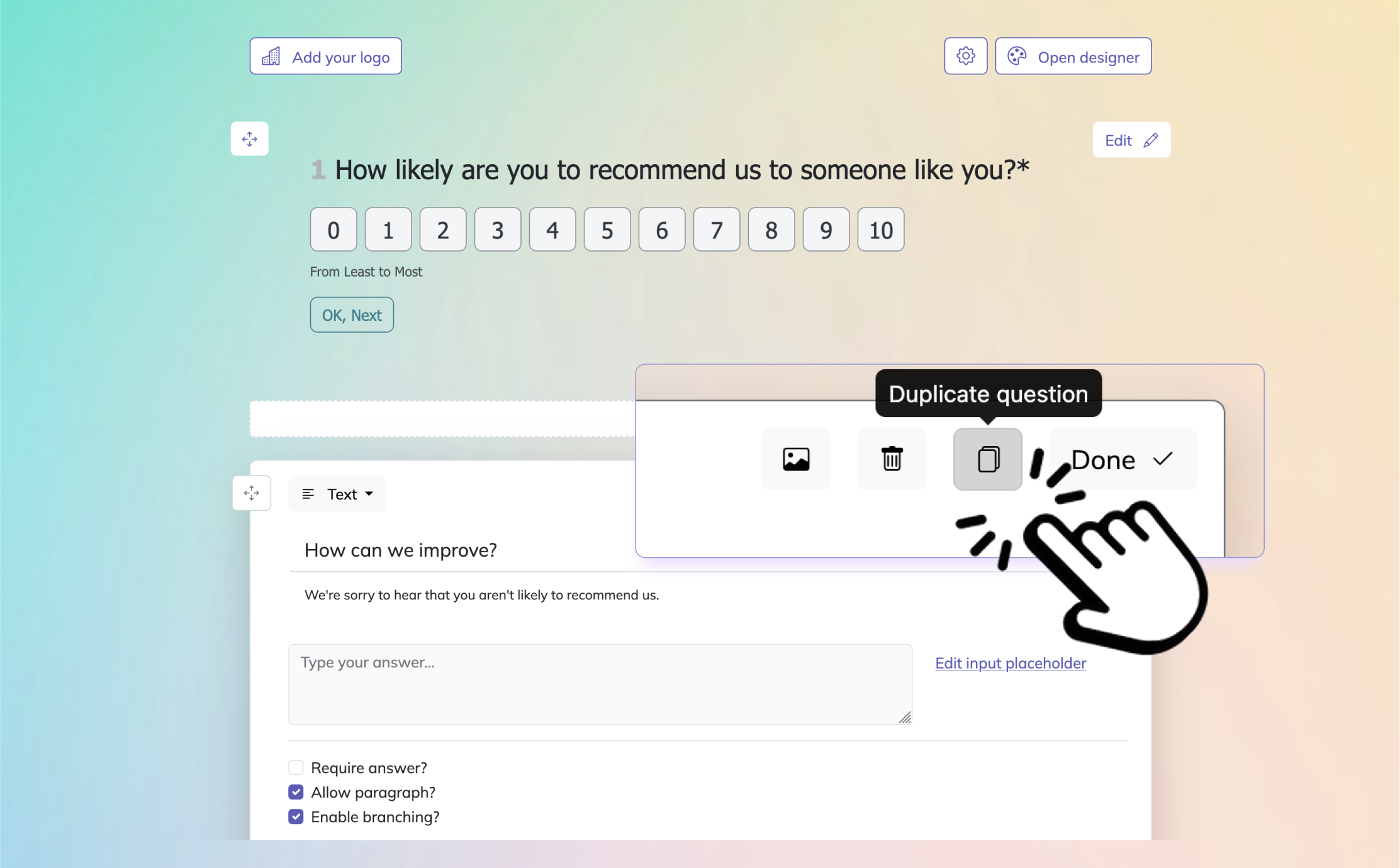

2. Add questions

Click Add Question to insert your first question, then continue building the survey one step at a time. HeySurvey supports common question types such as text, multiple choice, scale, dropdown, number, date, and more. You can mark questions as required, add descriptions, duplicate questions, and attach images if needed. If your survey needs a custom flow, use branching to send respondents to different questions based on their answers.

Bonus steps: open the Designer Sidebar to apply branding like your logo, colors, fonts, and background. In Settings, you can define start and end dates, response limits, redirect URLs, and whether respondents can view results.

3. Publish your survey

Before sharing, use Preview to check how the survey looks on desktop and mobile. When everything is ready, click Publish to generate a shareable link. Publishing requires an account, and once it’s live, you can send the link, embed the survey on your website, and start collecting responses.

Double-Barreled Questions – One Query, Two Variables, Zero Clarity

One question should measure one thing.

Double-barreled questions ask about two ideas at once and then demand one answer.

That might save a little space, but it wrecks clarity.

If a respondent loves one part and dislikes the other, what are they supposed to choose?

Flip a coin?

This is one of the most common survey design mistakes to avoid because it creates data you cannot interpret with confidence.

Pew’s guidance on survey writing stresses careful wording and exact phrasing because survey science depends on respondents understanding what is being asked. (pewresearch.org)

Why & When This Mistake Happens

This mistake usually appears when someone tries to make a survey shorter.

You will see it in employee engagement surveys, onboarding questionnaires, feature feedback forms, and customer satisfaction studies where teams are trying to “be efficient.”

Efficiency is nice.

But vague efficiency is not your friend.

A question like “How satisfied are you with your salary and career progression?” sounds tidy, yet salary and career growth are not the same thing.

One can be strong while the other is weak.

When you force both into one answer, you end up with mushy data.

These are the kinds of problems with surveys that make reports sound confident and useless at the same time.

Double-barreled wording also spreads confusion unevenly.

Some respondents average their opinions.

Some answer based on the first part only.

Some answer based on whatever annoyed them most that day, which is very human and very inconvenient.

5 Sample Bad Questions To Avoid

How satisfied are you with your salary and career progression?

How easy and enjoyable was the checkout process?

Do you find your manager supportive and effective?

How satisfied are you with the product’s price and quality?

Was the training clear and relevant to your role?

Each of these includes two variables.

That means each answer could hide two different realities.

These are textbook bad survey questions because the result cannot tell you which part caused the rating.

Quick Fix / Better Alternatives

Split the question.

Yes, the survey may become a little longer.

No, that is not a crisis.

Better to have six clear questions than three mysterious ones that produce decorative nonsense.

For example:

- How satisfied are you with your salary?

- How satisfied are you with your opportunities for career progression?

That simple split improves analysis immediately.

You can now identify where the issue actually lives.

The same rule works across product, HR, and customer research.

If a question contains “and,” stop and inspect it.

Not every “and” is guilty, but many are tiny chaos agents.
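The "stop and inspect" rule is easy to turn into a quick review list. The sketch below, with the assumed helper name `questions_to_inspect`, only surfaces questions that contain "and" as a whole word. It does not decide whether the question is double-barreled; that judgment stays with you.

```python
import re


def questions_to_inspect(questions: list[str]) -> list[str]:
    """Return the questions that contain 'and' as a standalone word."""
    pattern = re.compile(r"\band\b", re.IGNORECASE)
    return [q for q in questions if pattern.search(q)]


if __name__ == "__main__":
    draft = [
        "How satisfied are you with your salary and career progression?",
        "How satisfied are you with your salary?",
        "How would you rate our brand?",  # "brand" contains "and" but is not flagged
    ]
    for q in questions_to_inspect(draft):
        print("Inspect:", q)
```

The word-boundary match keeps words like "brand" or "standard" out of the list, so the reviewer only sees real conjunctions.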

If survey length is a concern, cut lower-value questions instead of merging unrelated ideas into one.

Short surveys are good.

Short and muddy surveys are just faster ways to collect confusion.

Research shows double-barreled survey questions reduce measurement quality because respondents must answer multiple concepts with one response, increasing ambiguity and error (SAGE study).

Ambiguous & Vague Wording – The Root of Confusing Survey Questions

Specific language beats clever language every time.

Ambiguous wording leaves too much room for interpretation.

That means different respondents answer different questions in their heads, even though they are all reading the same sentence.

And just like that, your survey turns into a group project with no instructions.

Pew Research Center notes that question wording can affect how people respond, and cognitive interviewing is often used to identify when respondents interpret terms differently than researchers intended. (pewresearch.org)

Why & When This Mistake Happens

Vagueness sneaks in when writers assume everyone shares the same frame of reference.

They use fuzzy time frames like “recently,” soft frequency words like “regularly,” or jargon that feels obvious inside the company but means very little outside it.

That is how examples of confusing survey questions are born.

Global customer feedback surveys are especially vulnerable.

A phrase that seems harmless in one region may be interpreted very differently elsewhere.

Even inside one country, words like “often,” “local,” “premium,” or “affordable” can mean wildly different things depending on age, income, culture, and context.

Ambiguous survey questions also break trend tracking.

If your team changes wording from “in the past month” to “recently,” you may think you are measuring the same behavior when you are not.

The data may still look neat in a dashboard, but neat is not the same as true.

Here’s the thing.

Respondents are usually generous.

If a question is vague, they will still try to answer it.

That effort can make the problem harder to spot, because the response rate looks fine while the meaning underneath is drifting around like a shopping cart with one broken wheel.

5 Sample Bad Questions To Avoid

Do you shop here regularly?

Have you used our service recently?

Is our pricing affordable?

Was the issue resolved quickly?

Do you think the platform is user-friendly?

These are ambiguous survey questions because key terms are undefined.

“Regularly,” “recently,” “affordable,” “quickly,” and “user-friendly” all invite guesswork.

Quick Fix / Better Alternatives

The cure is precision.

Define the time frame, define the action, and define any term that could stretch in five directions.

For example, instead of “Do you shop here regularly?” ask:

- In the past 30 days, how many times have you shopped with us?

Or instead of “Was the issue resolved quickly?” ask:

- How many days did it take for your issue to be resolved?

If you must use a technical term, define it in plain language.

If you are surveying an international audience, test wording across markets before launch.

Plus, pilot testing is your best friend here.

If respondents ask, “What do you mean by that?” your survey is giving you a gift.

Take the hint and rewrite.

Unbalanced or Skewed Response Scales – When the Numbers Lie

Bad scales can fake good news.

You can write a decent question and still ruin it with a crooked response scale.

This is one of the nastiest common survey mistakes because the question looks harmless, but the answer options quietly push respondents toward a preferred direction.

Pew Research Center highlights the importance of response option order and often randomizes options to reduce order effects, while its research has found measurable differences between formats such as select-all and forced-choice. (pewresearch.org)

Why & When This Mistake Happens

Unbalanced scales often happen because teams want to measure positivity, not reality.

So they create a scale with four favorable options and one negative option, or they use labels that make positive responses sound more reasonable than critical ones.

That is not measurement.

That is a pep rally with checkboxes.

NPS misuse can also create trouble.

The score itself has a standard 0 to 10 scale, but many teams wrap it in biased intro copy, inconsistent follow-ups, or dashboards that over-interpret tiny shifts.

The problem is not numbers.

The problem is pretending numbers are neutral when the design behind them is doing gymnastics.

Scale design also goes wrong when the midpoint is removed without a good reason.

Forced choice can be useful in some cases, but removing neutrality just to suppress “middle” answers can inflate positive sentiment artificially.

These are exactly the kinds of survey production mistakes that make executives smile and researchers sigh into their coffee.

5 Sample Bad Questions To Avoid

How would you rate our support? Excellent / Very good / Good / Fair / Poor

How satisfied are you with your delivery experience? Extremely satisfied / Very satisfied / Satisfied / Slightly satisfied / Dissatisfied

Our website is easy to use. Strongly agree / Agree / Somewhat agree / Slightly agree / Disagree

How likely are you to recommend us? Definitely / Probably / Maybe / Unlikely

Please rate your onboarding. Amazing / Great / Good / Not great / Bad

These closed-ended survey questions fail at the scale level because the answer sets are tilted.

They give positivity more shades and negativity less room to breathe.

Quick Fix / Better Alternatives

Use balanced scales with symmetrical options.

A simple 5-point version works well in many cases:

Very satisfied

Somewhat satisfied

Neither satisfied nor dissatisfied

Somewhat dissatisfied

Very dissatisfied

That structure gives both positive and negative responses equal footing.

If you use a 7-point scale, keep labels balanced there too.
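One way to make "balanced" checkable is to tag each option as positive, neutral, or negative and count the sides. The sketch below assumes a human reviewer assigns the polarity (+1, 0, or -1), because label sentiment is a judgment call. The function name `scale_balance` and the data layout are illustrative assumptions.

```python
from collections import Counter


def scale_balance(options: list[tuple[str, int]]) -> dict:
    """Count positive, neutral, and negative options.

    Each option is a (label, polarity) pair, where polarity is +1, 0, or -1
    as judged by a human reviewer.
    """
    counts = Counter(polarity for _, polarity in options)
    return {
        "positive": counts[1],
        "neutral": counts[0],
        "negative": counts[-1],
        "balanced": counts[1] == counts[-1],
    }


if __name__ == "__main__":
    skewed = [
        ("Extremely satisfied", 1),
        ("Very satisfied", 1),
        ("Satisfied", 1),
        ("Slightly satisfied", 1),
        ("Dissatisfied", -1),
    ]
    balanced = [
        ("Very satisfied", 1),
        ("Somewhat satisfied", 1),
        ("Neither satisfied nor dissatisfied", 0),
        ("Somewhat dissatisfied", -1),
        ("Very dissatisfied", -1),
    ]
    print(scale_balance(skewed))
    print(scale_balance(balanced))
```

The skewed delivery scale from the bad examples comes out as four positive options against one negative, while the 5-point version above counts two on each side.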

On top of that, think carefully before removing the midpoint.

Sometimes a neutral option reflects a real opinion, not laziness.

And if you randomize response order where appropriate, document it clearly and stay consistent.

Good scale design is not flashy.

It just stops your numbers from telling tiny lies with great confidence.

Balanced, symmetric response scales reduce measurement bias because skewed answer options can systematically push survey results toward a preferred direction (AAPOR Best Practices).

Jargon-Heavy & Complex Questions – Poorly Worded Survey Pitfalls

If people need a decoder ring, rewrite the question.

Complex language is one of the easiest ways to lose honest responses.

When a survey sounds like a white paper trying to impress a conference room, respondents either guess, skip, or abandon ship.

None of those outcomes are ideal, unless your research goal is sadness.

Pew’s work on survey wording and cognitive interviewing shows why clarity matters so much: respondents can interpret terms differently, and better wording improves consistency in understanding. (pewresearch.org)

Why & When This Mistake Happens

This mistake shows up a lot in tech, medical, finance, and B2B SaaS surveys.

Subject matter experts know the field deeply, which is useful, but they can forget what ordinary respondents actually call things.

So instead of “software tools working together,” the survey asks about “interoperability across middleware environments.”

That might be accurate.

It also sounds like the questionnaire swallowed a manual.

Jargon-heavy items increase cognitive load.

Respondents spend more effort decoding the language than answering the question.

That creates noise, straight-lining, and drop-off.

It also leads to one of the most overlooked common survey design mistakes to avoid: confusing expertise with clarity.

You are not dumbing the survey down when you simplify language.

You are making it measurable.

Complexity can also hide inside long sentences.

Even plain words can become hard to process when the sentence packs in multiple clauses, conditions, or technical qualifiers.

If the respondent has to reread it twice, you already have a quality problem.

5 Sample Bad Questions To Avoid

Rate the interoperability of the API middleware within your current tech stack.

How satisfied are you with the efficacy of our multichannel care coordination protocols?

To what extent has our platform optimized your cross-functional workflow synergies?

How intuitive is the implementation architecture for non-native administrative users?

Please assess the scalability and modularity of the embedded analytics environment.

These are clear examples of bad surveys in action because the wording asks respondents to parse specialist language before they can answer.

Quick Fix / Better Alternatives

Use a Grade 8 readability mindset.

That does not mean every respondent is in middle school.

It means your wording should be clear enough that reading effort does not distort the answer.
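If you want a rough number to go with that mindset, the Flesch-Kincaid grade level formula is one common option. The sketch below is an approximation under stated assumptions: the syllable counter simply counts vowel groups, which is crude, and the function names are made up for this example. Treat the score as a prompt to reread a question, not as a verdict.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count runs of consecutive vowels, minimum 1."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def fk_grade(text: str) -> float:
    """Approximate Flesch-Kincaid grade level of a piece of text."""
    words = re.findall(r"[A-Za-z']+", text)
    if not words:
        return 0.0
    # Count sentence-ending punctuation runs; assume at least one sentence.
    sentences = max(1, len(re.findall(r"[.!?]+", text)))
    syllables = sum(count_syllables(word) for word in words)
    return 0.39 * len(words) / sentences + 11.8 * syllables / len(words) - 15.59


if __name__ == "__main__":
    jargon = "Rate the interoperability of the API middleware within your current tech stack."
    plain = "How easy was it to connect our product with the other tools your team uses?"
    print(round(fk_grade(jargon), 1), "<-", jargon)
    print(round(fk_grade(plain), 1), "<-", plain)
```

With this rough counter, a jargon-heavy question scores well above grade 8 and a plain-language rewrite scores below it, which is the direction you want.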

For example, instead of “Rate the interoperability of the API middleware within your current tech stack,” ask:

- How easy was it to connect our product with the other tools your team uses?

That version measures the same idea in human language.

If a technical term is truly necessary, provide a brief definition or glossary link.

Keep sentences short.

Ask one thing at a time.

And read each question out loud.

If it sounds like something no actual customer has ever said, it probably belongs in your internal documentation, not your survey.

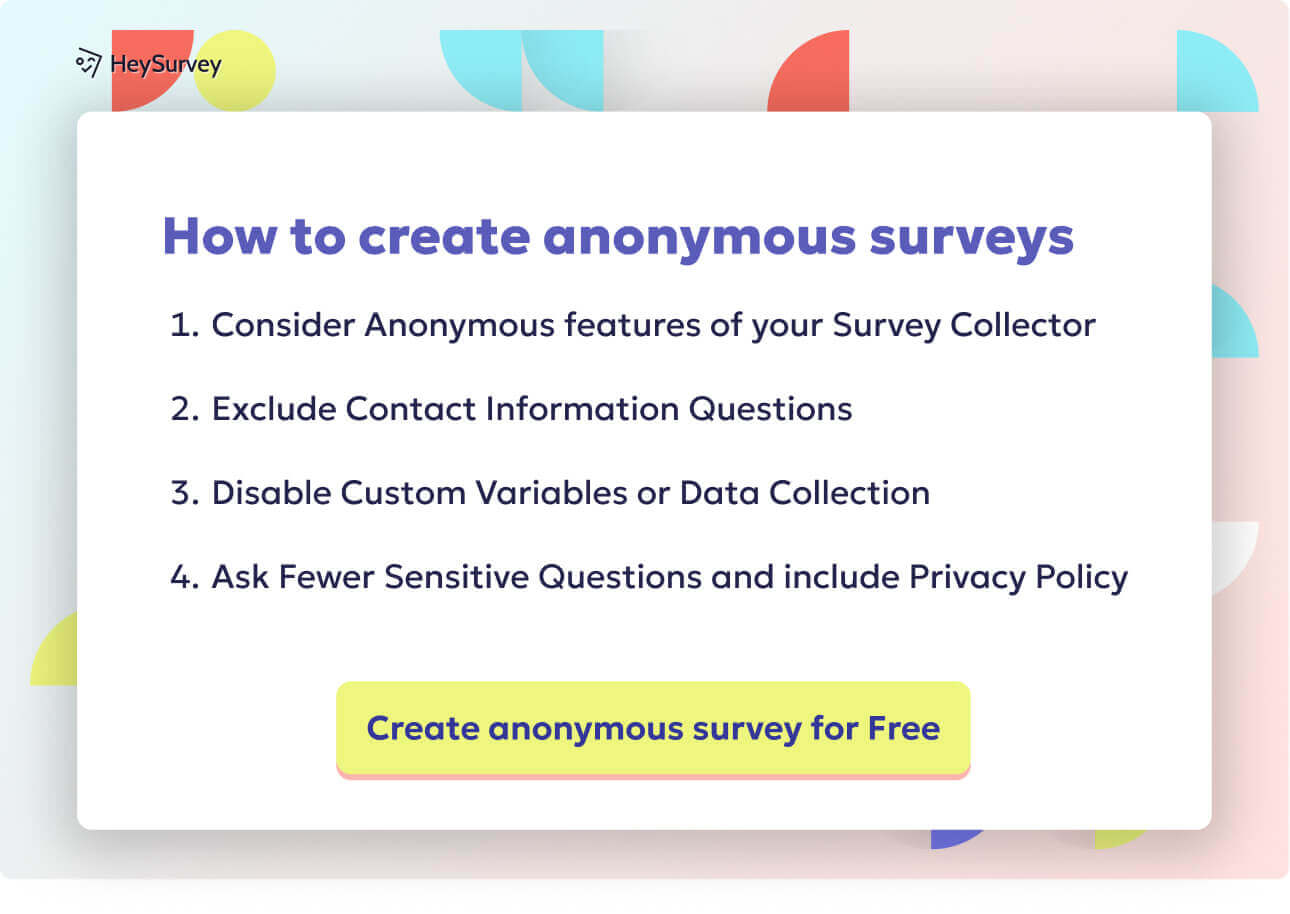

Sensitive or Personal Questions Without Proper Context

Trust is part of survey design.

Sensitive questions are not automatically bad.

Sometimes you genuinely need demographic, financial, health, or workplace information to interpret results correctly.

The problem starts when those questions appear too early, sound too intrusive, or offer no explanation for why the information is being requested.

That is when respondents start side-eyeing the form like it just asked for their blood type and favorite pizza topping in the same breath.

Why & When This Mistake Happens

This is common in HR surveys, healthcare intake forms, DEI studies, and customer research where segmentation matters.

Teams know the data would be useful, so they ask for it without enough context, without anonymity assurances, or without making the field optional when it should be.

That can lower completion rates and distort results because some respondents skip the question while others give vague or inaccurate answers.

It also raises practical concerns.

Privacy expectations matter.

And if you collect personal data, you need to handle it carefully and transparently.

Questions about identity, income, age, disability, health status, or household makeup should never feel casual or careless.

Even when legally permissible, awkward timing can still damage response quality.

Put a highly personal question at the top of a survey and you risk losing the respondent before the useful stuff even begins.

That is one of those customer feedback question mistakes that looks tiny on paper and expensive in practice.

5 Sample Bad Questions To Avoid

What is your household income?

What is your age and ethnicity?

Have you ever had a mental health condition?

What is your exact job title and salary?

Are you pregnant or planning to become pregnant?

These become bad survey questions when they are asked too early, without context, without an opt-out, or in a survey that does not clearly justify the need.

Quick Fix / Better Alternatives

Start with purpose and protection.

Tell respondents why you are asking, how the data will be used, and whether responses are anonymous or confidential.

Place sensitive questions later in the survey unless they are needed for branching.

Use optional response fields where appropriate.

For example:

- Which of the following income ranges best describes your household income? This question is optional and helps us compare responses across different customer groups.

That is gentler and more respectful.

You can also use branching logic so only relevant respondents see the question.

On top of that, avoid demanding exact values when ranges will do.

People are far more likely to respond honestly when the request feels proportionate.

Respect gets better data.

It is not just the nice thing to do.

It is the smart thing too.

Question Order & Context Effects – Bias Hidden in Sequencing

Order changes answers more than most people expect.

Even if every individual question is well written, the sequence can still influence responses.

Earlier questions create context for later ones, and that context can tilt interpretation, memory, and judgment.

Pew Research Center explicitly warns that the order in which questions are asked can influence responses, and it describes both contrast effects and assimilation effects caused by earlier items. (pewresearch.org)

Why & When This Mistake Happens

Order effects often appear in long customer experience forms, brand trackers, and political or public opinion surveys.

A broad satisfaction question at the top can color later responses about specific features.

A series of negative questions can prime criticism.

A flattering introduction can prime positivity.

That is not just theory.

Pew notes that asking a specific question before a general one can change responses, and it uses techniques like randomization to reduce these effects where appropriate. (pewresearch.org)

This is one of the sneakiest survey production mistakes because the questions themselves may look perfectly fine in isolation.

But surveys are not isolated.

They are experiences.

Respondents carry the mood and meaning of earlier items into later ones, often without realizing it.

Primacy and recency effects can also shape how people respond to answer options or lists.

If the same options always appear in the same order, some respondents may favor what they see first, while others remember what they saw last.

Tiny design choice.

Big ripple.

5 Sample Bad Questions To Avoid

How amazing was your experience with our brand? Followed by: How satisfied were you with delivery speed?

How frustrated were you by your recent support issue? Followed by: How likely are you to recommend us?

How trustworthy is our company? Followed by: How would you rate product quality?

Which of these service failures upset you most? Followed by: Overall, how satisfied are you with your visit?

Do you agree our pricing offers excellent value? Followed by: How fair was our pricing?

These are not always bad because of wording alone.

They are examples of bad survey questions in sequence because earlier framing contaminates later answers.

Quick Fix / Better Alternatives

Use a logical funnel.

Start broad, then move to specific, unless you have a tested reason to do the reverse.

Group similar topics together without letting one item “coach” the next.

Randomize response options where appropriate, and randomize question order for certain blocks if order effects are a risk.

If you ask both overall and detailed questions, pilot test the sequence.

Try two versions and compare.

Plus, avoid emotionally loaded intros right before evaluative items.

If the survey opens with applause, outrage, or suspicion, the rest of the questionnaire will feel that weather.

Clean sequencing helps respondents answer the question you meant to ask, not the mood you accidentally created.
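Most survey tools handle randomization for you, but the logic behind it is simple enough to sketch. The example below assumes each respondent has a stable ID, and both function names and the `survey_seed` parameter are hypothetical. It shuffles an unordered option list per respondent and assigns each respondent to version A or B of a questionnaire so you can compare two sequences.

```python
import hashlib
import random


def option_order(options: list[str], respondent_id: str, survey_seed: str = "demo-survey") -> list[str]:
    """Return the options shuffled in an order that is stable for one respondent."""
    # Seeding with the respondent ID keeps the order the same if they reload the page.
    rng = random.Random(f"{survey_seed}:{respondent_id}")
    shuffled = list(options)
    rng.shuffle(shuffled)
    return shuffled


def ballot_version(respondent_id: str) -> str:
    """Assign a respondent to questionnaire version 'A' or 'B' from a hash of their ID."""
    digest = hashlib.sha256(respondent_id.encode("utf-8")).digest()
    return "A" if digest[0] % 2 == 0 else "B"


if __name__ == "__main__":
    features = ["Search", "Reporting", "Integrations", "Mobile app"]
    for rid in ["r-001", "r-002", "r-003"]:
        print(rid, ballot_version(rid), option_order(features, rid))
```

Only shuffle lists that have no natural order. A satisfaction scale that runs from "Very satisfied" to "Very dissatisfied" should keep its sequence, or respondents will have to hunt for their answer.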

Survey Question Dos and Don’ts: A Quick-Scan Best-Practice Checklist

Good surveys are built on small disciplined choices.

By now, you have seen how leading wording, vague phrasing, bad scales, and poor order create avoidable problems with surveys.

The good news is that the fix is not magic.

It is method.

Pew’s survey guidance repeatedly emphasizes careful wording, consistent context, thoughtful ordering, and testing because those details shape response quality. (pewresearch.org)

Do

Do use neutral wording that does not hint at a preferred answer.

Do ask one thing at a time.

Do define time frames clearly, such as “in the past 30 days.”

Do use balanced response scales with equal positive and negative options.

Do keep language simple and concrete.

Do pilot test your survey before sending it widely.

Do review questions for ambiguity, jargon, and hidden assumptions.

Do place sensitive questions later unless they are required for branching.

Do explain why personal information is being collected.

Do randomize options or question blocks when order effects are likely.

Do keep surveys as short as possible without merging separate ideas.

Do compare each question against your real decision-making needs.

These are the practical do’s and don’ts of survey questions that protect your data before analysis even begins.

Don’t

Don’t use praise words like “amazing,” “brilliant,” or “outstanding” in the question.

Don’t ask double-barreled questions with two variables in one item.

Don’t rely on vague words like “often,” “recently,” or “affordable” without defining them.

Don’t use unbalanced scales that give positivity more room than negativity.

Don’t remove a neutral midpoint just to force stronger opinions.

Don’t write for internal experts if your respondents are ordinary users.

Don’t place intrusive demographic questions at the top without context.

Don’t ask for exact figures when ranges are enough.

Don’t let early questions emotionally prime later answers.

Don’t keep changing wording if you want to compare results over time.

Don’t use double negatives.

Don’t skip a pilot test because the survey “looks fine.”


People love a checklist because it lets them feel organized before they accidentally write, “How delighted were you by our revolutionary billing portal?”

FAQ

What are common survey mistakes?

Common survey mistakes include leading questions, double-barreled questions, ambiguous wording, unbalanced response scales, jargon-heavy phrasing, intrusive personal questions without context, and poor question order. These issues can bias responses or make answers hard to interpret. (pewresearch.org)

Why are leading and loaded questions a problem?

They push respondents toward a preferred answer or build assumptions into the wording. That weakens objectivity and can skew customer feedback, opinion polling, and satisfaction data. (pewresearch.org)

What are examples of confusing survey questions?

Questions like “Do you shop here regularly?” or “Was the issue resolved quickly?” are confusing because key terms are undefined. Different respondents may interpret “regularly” or “quickly” in very different ways. (pewresearch.org)

How can you avoid common survey design mistakes?

Use neutral language, define terms clearly, ask one idea per question, balance your scales, test order effects, and pilot test the survey with real users before launch. Those steps catch many common survey design mistakes to avoid before they damage your results. (pewresearch.org)

Bad surveys rarely fail in dramatic ways.

They fail politely, with tidy charts and shaky meaning.

If you want better data, focus on the boring basics: neutral wording, clear structure, balanced scales, and respectful context. Fix those, and your survey stops guessing what people mean and starts learning what they actually think.

Survey Question Design Best Practices – Dos & Don’ts

Designing flawless surveys is an art and a science—a few survey question best practices stand between you and clean, actionable data. Start with a pilot test. Get fresh eyes on your survey to unearth tricky wording, ambiguous language, or accidental bias.

Keep every question neutral and direct. Ban adjectives that lead, scale options that lean, and binary “always/never” phrasing. Each query should home in on a single idea, not two. Hop back to the double-barreled section if you’re tempted to combine.

Response options should be balanced, exhaustive, and mutually exclusive. Offer true coverage for all possible answers, and make sure no overlap leaves respondents guessing. Always add an “Other (please specify)” for those you haven’t anticipated.
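For numeric brackets such as income or age ranges, "exhaustive and mutually exclusive" can be checked mechanically. The sketch below assumes whole-number, inclusive ranges and uses `None` for an open-ended top bracket. The function name `check_brackets` and the sample figures are illustrative only.

```python
from typing import Optional


def check_brackets(brackets: list[tuple[int, Optional[int]]]) -> list[str]:
    """Return a list of problems found in inclusive (low, high) integer brackets.

    A high value of None marks an open-ended top bracket, such as "50,000 or more".
    """
    problems = []
    ordered = sorted(brackets, key=lambda bracket: bracket[0])
    for (low_a, high_a), (low_b, _) in zip(ordered, ordered[1:]):
        if high_a is None or low_b <= high_a:
            problems.append(f"overlap: brackets starting at {low_a} and {low_b}")
        elif low_b > high_a + 1:
            problems.append(f"gap: nothing covers values between {high_a} and {low_b}")
    if ordered and ordered[-1][1] is not None:
        problems.append("top bracket is not open-ended")
    return problems


if __name__ == "__main__":
    overlapping = [(0, 25000), (25000, 50000), (50000, None)]
    clean = [(0, 24999), (25000, 49999), (50000, None)]
    print(check_brackets(overlapping))
    print(check_brackets(clean))
```

In the overlapping set, someone earning exactly 25,000 fits two answers, which is the kind of small ambiguity that leaves respondents guessing.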

Protect privacy for sensitive questions. State anonymity clearly, and use indirect phrasing if you’re asking about anything sticky or personal. This applies to topics from income to health to integrity.

Your quick checklist for survey perfection:

- Pilot test with real people before launch

- Phrase every question in neutral language

- Address only one topic per question

- Build balanced, symmetrical response scales

- Ensure all answer choices are exhaustive and non-overlapping

- Add an “Other” option or a write-in when needed

- Guarantee respondent anonymity on sensitive issues

Every misstep above—leading, double-barreled, loaded, vague, absolute, unbalanced, non-exhaustive, or too-personal—has a simple fix. Take time to revise, review, and reflect on every phrase. Knowing how to fix survey question mistakes is half the battle; putting it into practice is where the magic happens. Smart surveys mean smarter decisions, and smart decisions win every time.
