29 Nonresponse vs Voluntary Response Survey Questions

Explore sample questions on nonresponse vs voluntary response surveys, with clear examples, insights, and practical guidance.

Surveys can go sideways fast when the wrong people answer, or do not answer at all. If you have ever mixed up nonresponse bias with voluntary response bias, you are very much not alone.

This guide helps you compare nonresponse bias vs voluntary response bias, spot a shaky voluntary response sample, and understand what a voluntary response sample is without drowning in theory. Whether you work in research, marketing, CX, HR, or you are just trying to survive stats class, you will learn how to choose better survey approaches and write questions that get smarter answers with an online survey tool.

Nonresponse Survey Questions

Sample questions

How satisfied are you with your recent experience with our service?

What was the main reason you chose not to complete your purchase today?

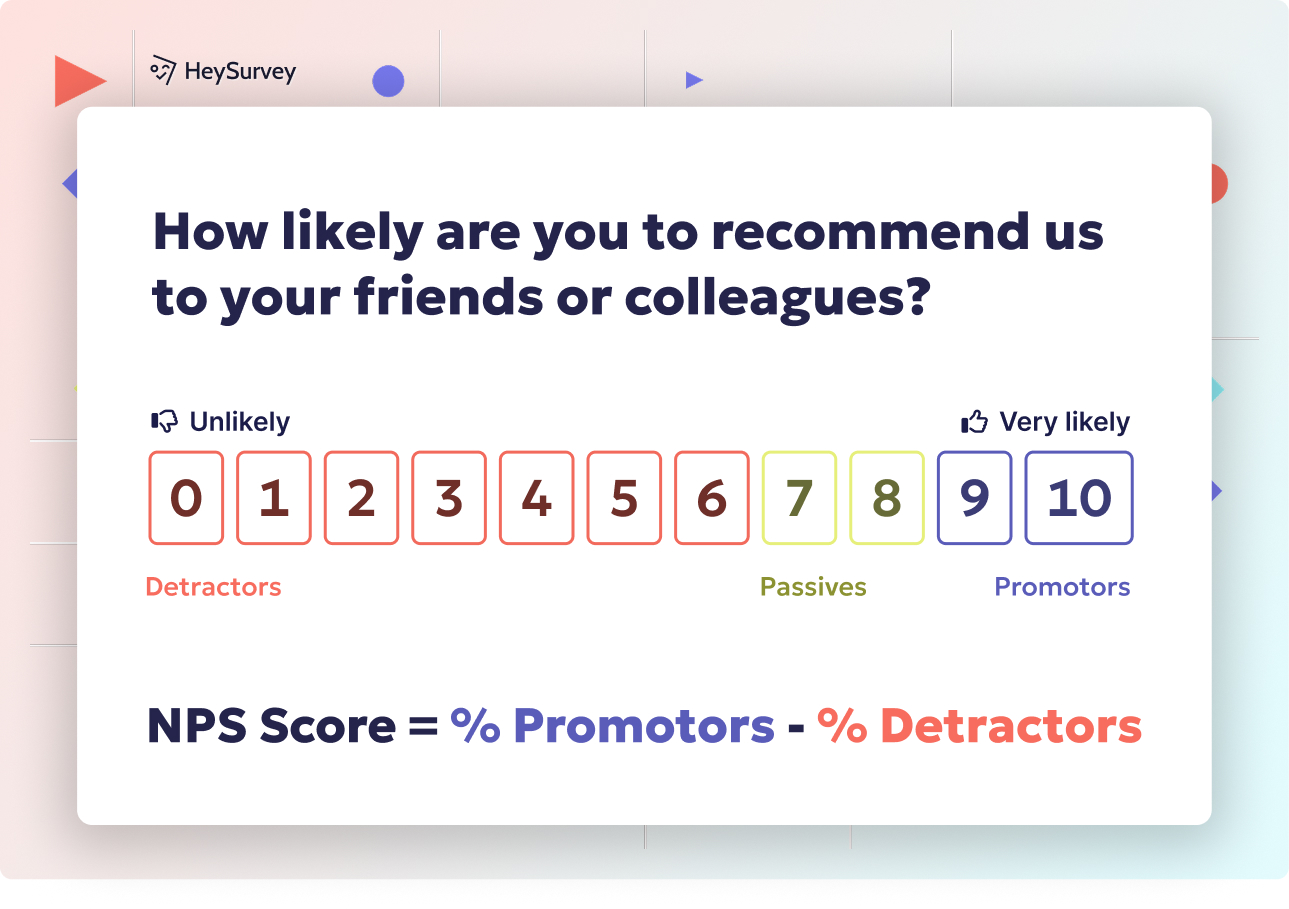

How likely are you to recommend our company to a friend or colleague?

Which of the following best describes your overall experience with our support team?

What could we do to make participating in this survey easier for you?

These questions go to a defined sample, not just whoever feels like chiming in.

Why & When to Use

You use nonresponse survey questions when you already have a selected group of people you expect to hear from, but some of them never reply. That is different from a voluntary response sample, where people opt in on their own and voluntary response bias can show up because the loudest opinions rush in first.

This setup is common in customer feedback, employee engagement, healthcare, academic research, and post-event surveys. Here's the thing: the problem is not just the wording of the question, but the missing answers from parts of the sample.

If certain groups answer less often, your results can lean off course and create nonresponse bias. That is why the difference between nonresponse bias and voluntary response bias matters so much: both can skew results, but they start in different ways.

It also helps to separate total survey nonresponse from skipped questions inside completed surveys. One means the person never answered at all, and the other means they left specific items blank, which is annoying in its own special way.
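To make that distinction concrete, here is a minimal Python sketch that computes both kinds of missingness separately. The function name `nonresponse_rates`, the invited count, and the answer data are all hypothetical and exist only for illustration.

```python
def nonresponse_rates(invited, responses):
    """Return the total survey nonresponse rate and per-question skip rates.

    invited   -- number of people in the defined sample
    responses -- one dict per returned survey, mapping question -> answer
                 (None or "" means the question was left blank)
    """
    if invited <= 0:
        raise ValueError("invited must be a positive number")

    completed = len(responses)
    # People who never answered at all.
    unit_rate = (invited - completed) / invited

    # Questions left blank inside surveys that were returned.
    questions = sorted({q for r in responses for q in r})
    item_rates = {}
    for q in questions:
        blanks = sum(1 for r in responses if r.get(q) in (None, ""))
        item_rates[q] = blanks / completed if completed else 0.0

    return {"unit_nonresponse": unit_rate, "item_nonresponse": item_rates}


# Hypothetical data: 10 people invited, 4 returned the survey.
responses = [
    {"satisfaction": "4", "recommend": "9"},
    {"satisfaction": "5", "recommend": None},
    {"satisfaction": "", "recommend": "7"},
    {"satisfaction": "3", "recommend": "8"},
]
rates = nonresponse_rates(invited=10, responses=responses)
print(rates)
```

In this made-up example, 6 of 10 invitees never replied, and each question was skipped in 1 of the 4 returned surveys. Tracking the two numbers separately shows whether the fix belongs in your outreach or in the questionnaire itself.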

To make this approach more reliable, you can use:

follow-up emails

reminder texts

shorter surveys

clearer timing and purpose

These steps help you reduce missing responses before the data starts acting suspicious.

Pew Research finds that nonresponse can substantially bias defined-sample surveys, while voluntary-response samples risk self-selection from unusually engaged participants (source).

How to create a nonresponse vs. voluntary response survey in HeySurvey

1. Create a new survey

Start by opening a template, or choose a blank survey if you want to build it from scratch in our online survey maker. Give your survey a clear internal name, such as “Nonresponse vs. Voluntary Response Survey,” so it’s easy to find later.

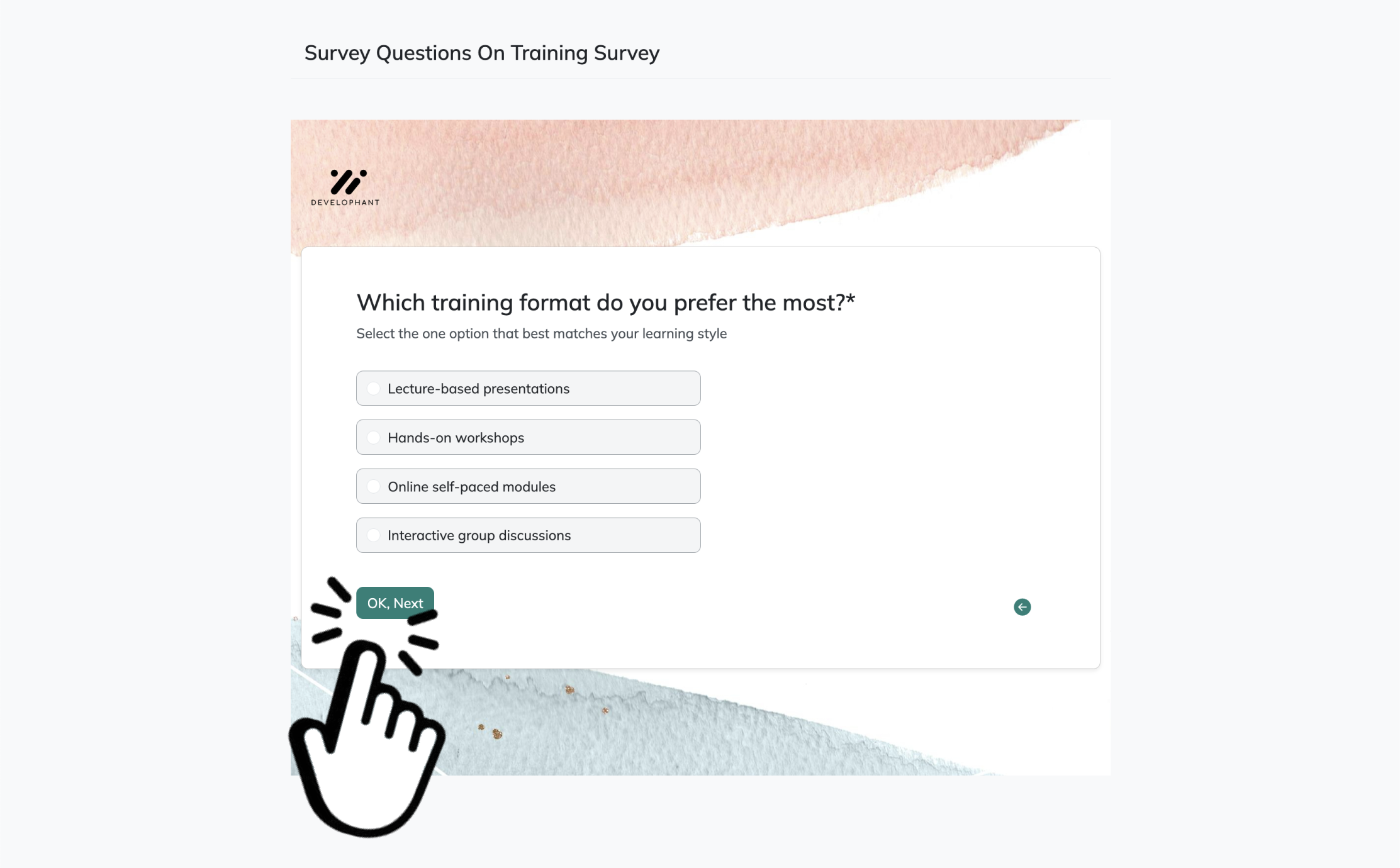

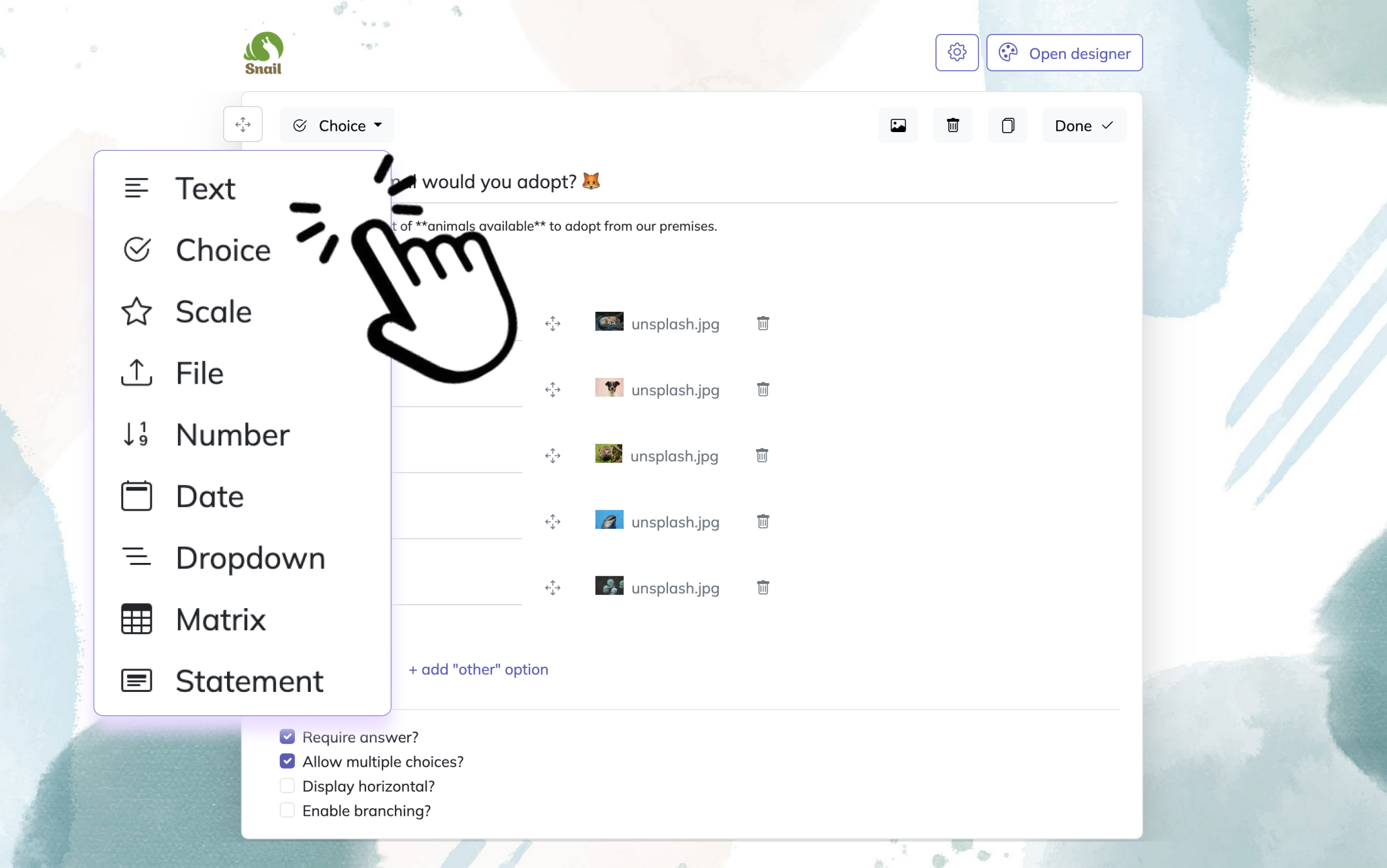

2. Add questions

Click Add Question and add the questions you want to use to compare or explain the two survey types. Use Choice questions for simple answer options, Text questions for open-ended feedback, and Statement questions to add instructions or definitions. You can also mark key questions as required if every respondent should answer them. If needed, add branching so later questions change based on earlier answers.

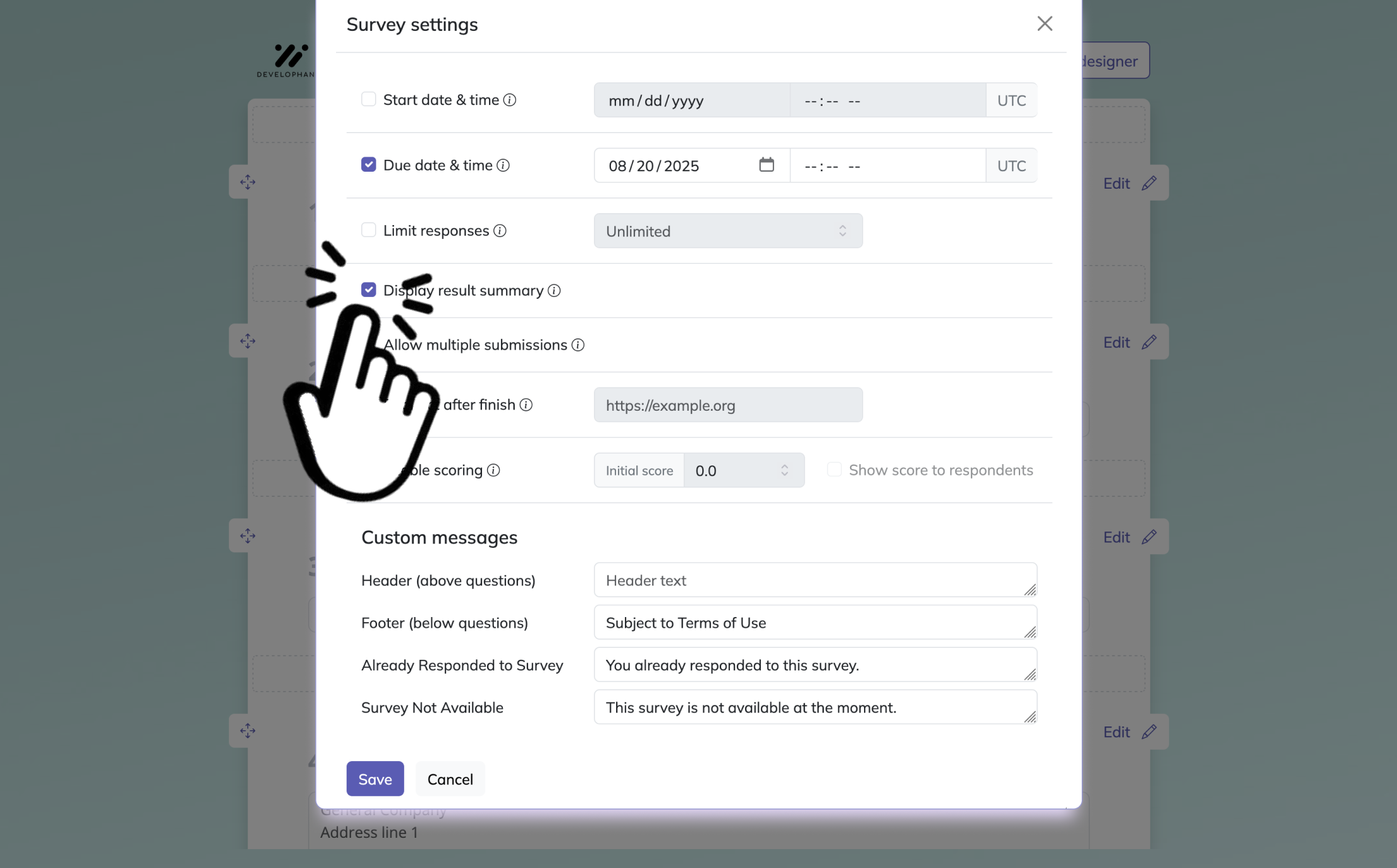

3. Publish your survey

Preview the survey first to check that everything looks right on desktop and mobile. When you’re ready, click Publish to create a shareable link. Then send the link to your audience and start collecting responses.

Voluntary Response Survey Questions

Sample questions

What do you think about our new product launch?

Should our company add more self-service support options?

How would you rate your experience with our website today?

What feature would you most like us to improve next?

Do you agree or disagree with our recent policy change?

These questions invite anyone interested to jump in, which is exactly how a voluntary response sample forms.

Why & When to Use

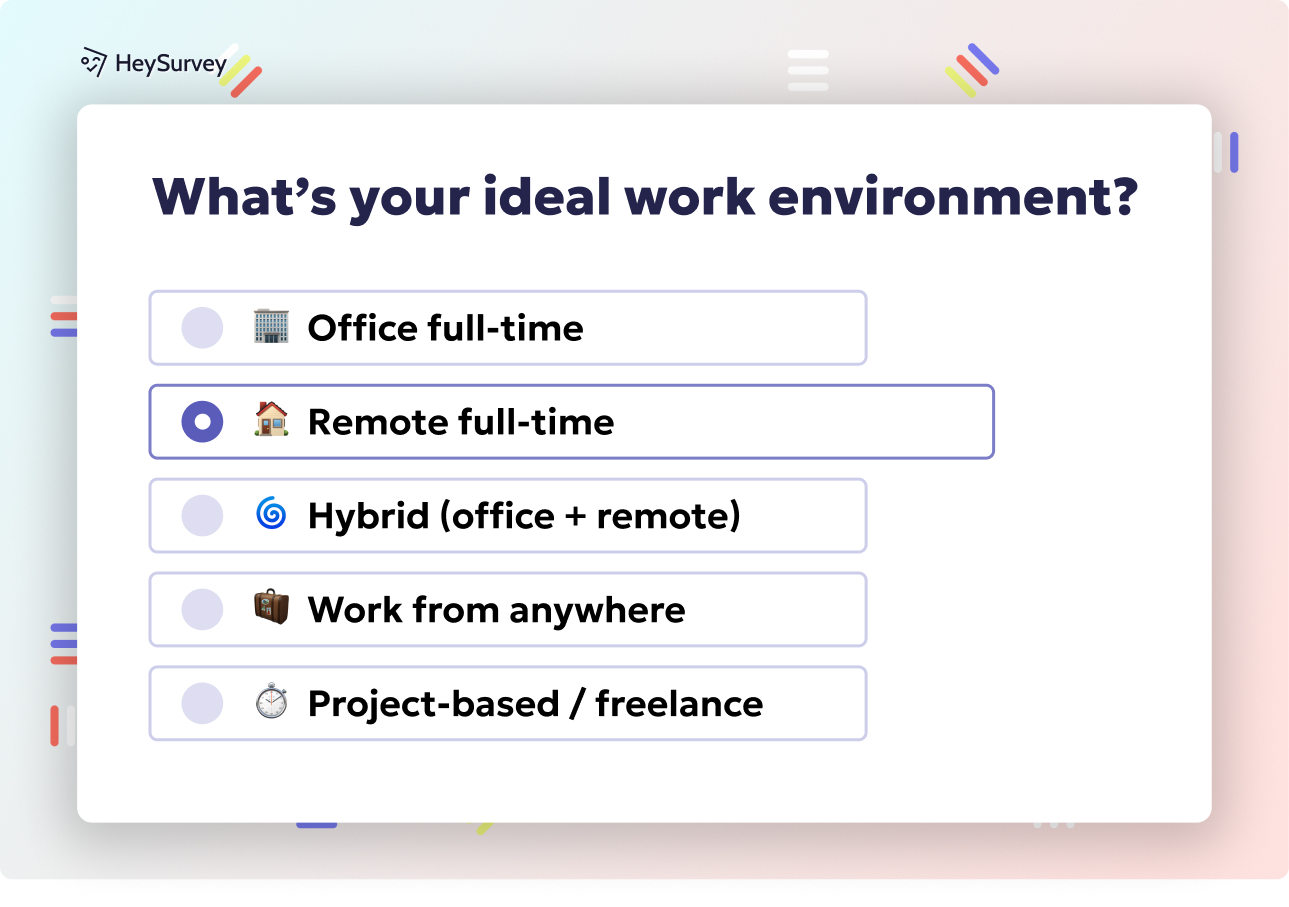

Voluntary response survey questions are shown to people who decide for themselves whether to answer. In simple terms, the voluntary response definition is this: participation is optional, so the people who reply create a voluntary response sample instead of coming from a tightly controlled sample.

You see this style all over website polls, social media surveys, public news polls, open feedback forms, and email links shared with a broad audience. Here's the thing: voluntary response sampling is fast, cheap, and wonderfully easy to launch before your coffee gets cold.

This approach works well when you want quick reactions, strong opinions, or fresh ideas from people motivated to speak up. If you are asking what a voluntary response sample is, it is basically a group made up of self-selected respondents rather than people chosen through stricter sampling methods.

That convenience comes with a catch. Voluntary response bias often shows up because people with very positive or very negative feelings are more likely to answer, while quieter middle-of-the-road folks scroll away.
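A small worked example can show how much this pulls the numbers. The sketch below is an illustration under stated assumptions, not a model of any real audience: the population counts and the per-rating response probabilities are invented, with the unhappiest people assumed most likely to answer.

```python
def observed_mean(population, response_prob):
    """Expected mean rating among people who choose to respond.

    population    -- dict mapping rating (1-5) -> number of people holding it
    response_prob -- dict mapping rating -> assumed chance that person answers
    """
    expected = {r: n * response_prob[r] for r, n in population.items()}
    total = sum(expected.values())
    return sum(r * n for r, n in expected.items()) / total


# Hypothetical audience of 1,000 people; most sit in the middle.
population = {1: 100, 2: 200, 3: 400, 4: 200, 5: 100}
# Assumed opt-in rates: strong feelings answer far more often than neutral ones.
response_prob = {1: 0.30, 2: 0.05, 3: 0.02, 4: 0.05, 5: 0.10}

true_mean = sum(r * n for r, n in population.items()) / sum(population.values())
biased_mean = observed_mean(population, response_prob)

print(f"True mean rating:     {true_mean:.2f}")
print(f"Mean among responders: {biased_mean:.2f}")
```

With these made-up inputs the true average is 3.0, but the expected average among responders is about 2.41, because the 40% of people who feel neutral make up only a small slice of the replies.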

Use this method when you want speed and direction, not precise representation. It is a good fit when you want to:

gather fast feedback

spot hot-button issues

collect suggestions and comments

test interest in a topic

Above all, do not present a voluntary response sample as representative unless you have real sampling controls in place.

Nonresponse bias arises when selected respondents differ from nonrespondents, while voluntary response bias comes from self-selected participants who choose to opt in (NSF NCSES).

Customer Satisfaction Survey Questions: Comparing Nonresponse vs Voluntary Response

Sample questions

How satisfied were you with your recent purchase experience?

Did our product meet your expectations?

What was the biggest challenge you faced while using our service?

How easy was it to get the help you needed?

What is one change that would most improve your experience?

The same questions can tell very different stories depending on who chooses to answer and who never replies.

Why & When to Use

Customer satisfaction surveys can be run in two very different ways. You can send them to a defined group of recent customers, or you can post an open form and let anyone respond through a voluntary response sample.

Here’s the thing: the questions may stay the same, but the research value changes a lot. That is why understanding voluntary response bias vs nonresponse bias matters if you want results you can actually use.

Use a controlled outreach when you survey people after key moments like these:

after a purchase

after a support interaction

after onboarding

after a renewal

This design helps you measure customer experience more consistently, but nonresponse bias can still creep in if certain customers ignore the survey.

Use voluntary response when you want fast ideas from people motivated to speak up. It works well for website feedback, app suggestion forms, and always-on comment boxes where a voluntary response sample is the goal, not a surprise guest.

A practical rule: if you need better representativeness, choose controlled outreach. If you need speed, lower cost, and broad feedback, voluntary response works, but watch for voluntary response bias because the loudest voices often grab the mic first.

Employee Feedback Survey Questions: Comparing Nonresponse vs Voluntary Response

Sample questions

Do you feel your work is valued by your team and manager?

How satisfied are you with communication from leadership?

What barriers make it harder for you to do your job effectively?

How comfortable do you feel sharing honest feedback at work?

What is one change that would improve your employee experience?

Employee surveys work best when people feel safe enough to answer honestly, not just loud enough to answer first.

Why & When to Use

Employee feedback usually gives you better data when you send a survey to a defined internal group instead of relying on a purely voluntary response sample.

Here’s the thing: in workplace settings, a voluntary response form can attract people with very strong opinions, which raises the risk of voluntary response bias and can skew what looks like the “main” issue.

At the same time, nonresponse can be just as risky. If disengaged, burned-out, or frustrated employees skip the survey, low response rates can hide serious problems that leadership really needs to see.
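The size of that hidden gap follows from simple arithmetic: the bias of the respondent average equals the share of people who stayed silent times the difference between respondents and nonrespondents. The sketch below uses invented numbers, and it assumes you somehow know the nonrespondent average, which in practice you usually have to estimate from follow-ups or other data.

```python
def nonresponse_bias(response_rate, mean_respondents, mean_nonrespondents):
    """Bias of the respondent mean relative to the mean of the full sample."""
    return (1 - response_rate) * (mean_respondents - mean_nonrespondents)


# Hypothetical employee survey on a 1-5 satisfaction scale.
response_rate = 0.60        # 60% of invited employees answered
mean_respondents = 4.0      # average among those who answered
mean_nonrespondents = 3.0   # assumed average among those who stayed silent

full_mean = (response_rate * mean_respondents
             + (1 - response_rate) * mean_nonrespondents)
bias = nonresponse_bias(response_rate, mean_respondents, mean_nonrespondents)

print(f"Reported average: {mean_respondents:.1f}")
print(f"Full-group average: {full_mean:.1f}")
print(f"Overstatement from nonresponse: {bias:.1f}")
```

In this example the survey reports 4.0 while the whole group sits at 3.6. The formula also shows why a low response rate alone is not the problem: if silent employees feel the same as those who answered, the bias is zero.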

That is why nonresponse bias vs voluntary response bias matters so much in employee research. One hides unhappy voices through silence, and the other can overamplify extreme views through open participation.

Use structured employee surveys when you need clearer, broader insight across teams, departments, or locations.

This works especially well for topics like:

engagement tracking

manager feedback

communication from leadership

workplace culture

retention risk

Plus, open voluntary response channels still have real value when you want ongoing ideas or quick comments.

They fit well for:

anonymous idea boxes

pulse survey comments

culture suggestions

always-open feedback forms

On top of that, confidentiality, trust, and survey fatigue shape response quality more than most teams expect. If employees think feedback is not private or useful, the survey can go quieter than the office microwave at 5 p.m.

Research shows nonresponse can systematically exclude dissatisfied groups, while open nonprobability surveys often overrepresent people with strong opinions, biasing results in different ways (source).

Market Research Survey Questions: Comparing Sample Quality and Bias

Sample questions

Which of these brands have you heard of before today?

How likely are you to consider buying this product in the next 30 days?

What price range feels most reasonable for this product?

Which product feature matters most to you when making a purchase decision?

What would stop you from choosing this brand over competitors?

Good market research needs more than eager answers. It needs the right people in the room, too.

Why & When to Use

In market research, you usually want insight you can apply to a wider audience, not just to the people most excited to click a survey link.

That is why controlled sampling often matters more here than in casual feedback collection.

If you use a voluntary response sample, the respondents may be more engaged, more opinionated, or more brand-aware than the average customer. That can create voluntary response bias and make your results look stronger, harsher, or more certain than they really are.

A simple voluntary response sampling example is posting a product poll on social media and letting anyone answer. The people who respond are self-selected, so they may not reflect your real target market.

A plain voluntary response bias example is asking for opinions on a new feature in your fan newsletter. Your biggest fans may love it, but the broader market might shrug and keep scrolling.

Use structured sampling when you need statistically credible insight for things like:

audience segmentation

product testing

brand perception

pricing studies

purchase intent research

Here’s the thing: if the goal is population-level conclusions, voluntary response should be handled carefully. Great for quick reactions, yes. For serious market decisions, not so much.

Sensitive Topic Survey Questions: Reducing Nonresponse and Self-Selection Bias

Sample questions

How comfortable are you discussing this topic in a confidential survey?

Have you experienced difficulty accessing the support or resources you need?

Which concerns, if any, make you hesitant to answer questions on this topic?

How safe do you feel providing honest feedback about this issue?

What is the most important improvement you would like to see in this area?

Sensitive surveys need more than careful questions. They need safety, trust, and a setup that does not accidentally invite only the loudest or silence everyone else.

Why & When to Use

When you survey people about health, finances, workplace misconduct, political opinions, identity, or personal behavior, response patterns can get tricky fast.

Some people skip the survey entirely, which can create nonresponse bias, while others jump in because of a strong personal experience, which can create voluntary response bias.

Here’s the thing: this is where nonresponse bias vs voluntary response bias really matters.

If people stay silent because the topic feels risky, embarrassing, or invasive, you may miss important groups. If only highly motivated people answer, your voluntary response sample may overrepresent intense experiences, strong opinions, or recent problems.

That is the heart of voluntary response bias vs nonresponse bias in sensitive research.

Carefully designed anonymous surveys work well when you need honest feedback and can protect identity, reduce pressure, and explain clearly how responses will be used. Open public polling is usually a bad fit here because it can magnify self-selection and make a voluntary response sample even less representative. Basically, it is like asking for whisper-level honesty through a megaphone.

To reduce bias, you should:

reassure people about privacy and confidentiality

use neutral, nonjudgmental wording

avoid loaded or leading questions

let people skip questions when needed

explain why their responses matter

On top of that, question framing, anonymity, and audience trust directly shape who responds, who drops out, and what they feel safe saying.

Best Practices for Avoiding Nonresponse Bias and Voluntary Response Bias

Sample questions

Who is being invited to respond, and who might be left out of this survey?

What might stop important groups from participating, finishing, or answering honestly?

Are you using a voluntary response sample for a decision that needs representative data?

Do your questions feel neutral, clear, and easy to answer without extra effort?

How will you follow up with nonrespondents or balance self-selection effects afterward?

Think of these prompts as a pre-launch survey checkup, not a script to copy word for word.

Why & When to Use

Use this section as your practical checklist before you hit send, post the link, or unleash a survey on the world like a confetti cannon with opinions.

Here’s the thing: preventing voluntary response bias and nonresponse bias starts before the first answer comes in.

If your survey depends on a voluntary response sample, you should pause and ask whether that method fits your goal. For quick feedback or idea gathering, voluntary response can work fine. For decisions that need representative data, it often falls short.

This is where voluntary response bias vs nonresponse bias becomes useful in plain English. Voluntary response bias happens when highly motivated people opt in, while nonresponse bias happens when certain people stay out, skip, or drop off.

Before launch, you should:

define who your target audience actually is

check whether one channel excludes part of that audience

shorten the survey and improve timing to reduce drop-off

write neutral, specific wording that does not attract only strong opinions

plan reminders or follow-ups for nonrespondents

compare respondent traits with your intended audience when possible

Plus, do not treat an open poll as automatically representative. If you use voluntary response sampling, say so clearly. Quick rule: if the stakes are high and representativeness matters, do not rely on a voluntary response sample alone.
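Comparing respondent traits with your intended audience, as the checklist suggests, can be as simple as lining up two sets of shares. The following is a minimal sketch: the age groups, the population shares, the respondent counts, and the 5-point cutoff are all hypothetical choices for illustration.

```python
def representation_gaps(population_share, respondent_counts):
    """Return respondent share minus population share for each group.

    Negative values mean the group is underrepresented among respondents.
    """
    total = sum(respondent_counts.values())
    if total == 0:
        raise ValueError("no respondents to compare")
    return {
        group: respondent_counts.get(group, 0) / total - share
        for group, share in population_share.items()
    }


# Hypothetical target audience and who actually answered.
population_share = {"18-34": 0.30, "35-54": 0.40, "55+": 0.30}
respondent_counts = {"18-34": 120, "35-54": 60, "55+": 20}

gaps = representation_gaps(population_share, respondent_counts)
# Flag groups that fall more than 5 percentage points short (arbitrary cutoff).
underrepresented = [g for g, gap in gaps.items() if gap < -0.05]

for group, gap in gaps.items():
    print(f"{group}: {gap:+.0%}")
print("Underrepresented:", underrepresented)
```

Here the youngest group supplies 60% of the answers against 30% of the audience, so the two older groups are flagged. A result like this is a cue to send reminders through another channel before you read too much into the totals.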

How to Interpret Survey Results When Response Bias Is Likely

Sample questions

Which audience segments are underrepresented in the results?

Do the respondents reflect the full population we intended to study?

Are the strongest opinions overpowering the middle ground?

What outside data can help validate or challenge these findings?

What decisions are safe to make from this survey, and which require more evidence?

Treat survey results like clues, not a courtroom verdict.

Why & When to Use

Many real-world surveys are messy, and that does not make them useless. It just means you need a smart framework before you treat a voluntary response sample like the final word.

Here’s the thing: when voluntary response bias is likely, your job is not to panic. Your job is to interpret carefully, name the limits, and avoid turning a noisy signal into a shiny overconfident conclusion.

Watch for warning signs like these:

unusually low response rates

respondent profiles that look heavily skewed by age, role, location, or interest level

sharply polarized answers with very little middle ground

survey findings that clash with sales data, usage data, or observed behavior
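One of these warning signs, polarized answers with little middle ground, is easy to check with a quick count. This is a rough sketch rather than a formal test: the ratings are invented, and the 70% threshold is an arbitrary assumption you would tune for your own scale and audience.

```python
from collections import Counter


def polarization_summary(ratings, scale_min=1, scale_max=5):
    """Share of answers at the two ends of the scale vs everything between."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    counts = Counter(ratings)
    extreme_share = (counts[scale_min] + counts[scale_max]) / len(ratings)
    return {
        "extreme_share": extreme_share,
        "non_extreme_share": 1 - extreme_share,
    }


# Hypothetical answers from an open website poll on a 1-5 scale.
ratings = [1, 1, 1, 1, 5, 5, 5, 5, 5, 3]
summary = polarization_summary(ratings)

# Assumed rule of thumb: treat results as polarized when 70% or more
# of answers sit at the very top or bottom of the scale.
flagged = summary["extreme_share"] >= 0.70

print(summary, "polarized:", flagged)
```

Nine of the ten made-up answers sit at 1 or 5, so the check flags the poll. A flag does not prove bias on its own, but it is a good reason to compare the results with behavior data before acting.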

If those patterns show up, say so plainly. A voluntary response sample can still help you spot themes, complaints, and strong preferences, but it may not tell you what the full population thinks.

This is also where voluntary response bias vs nonresponse bias matters. With voluntary response bias, eager people jump in. With nonresponse bias, some groups stay quiet, which bends results in a different way.

Rate your confidence honestly:

high confidence for directional feedback or issue spotting

medium confidence for rough patterns

low confidence for population-wide claims

On top of that, validate with follow-up research when the decision is important. A second survey, interviews, or behavioral data can keep your conclusions from doing jazz hands without evidence.

Turn Survey Insights Into Action

Sample questions

What is the clearest insight we can act on right now?

Which findings need validation before making a major decision?

What follow-up survey or outreach should we run next?

How can we improve future participation from missing audience groups?

What business, customer, or employee action should come from these results first?

Good surveys do not end with data. They end with better decisions.

Why & When to Use

This is the moment where your survey earns its keep. A voluntary response sample can absolutely help you move forward, but only if you match the decision to the quality of the evidence.

Here’s the thing: not every result deserves the same level of confidence. If you are using a voluntary response survey for exploratory listening, it can surface themes, complaints, and ideas fast.

If you want directional feedback, a voluntary response sample may still help, especially when paired with other signals. But for high-stakes choices, representative research matters more than a loud inbox and a hopeful shrug.

Keep your next step simple:

use voluntary response for quick input and idea spotting

use stronger sampling methods for decisions that affect budgets, policy, or broad strategy

validate big findings when voluntary response bias or nonresponse bias may be distorting the picture

improve outreach so missing groups are more likely to participate next time

Plus, this is where voluntary response bias vs nonresponse bias becomes practical, not just academic. One means the most motivated people raised their hands, and the other means key people stayed silent, which is not exactly a dream team for accuracy.

The core takeaway is simple: even the best-written questions cannot rescue a flawed response process. Choose the right survey format, improve participation, and treat every finding with the honesty it deserves.

Best Practices: Dos and Don’ts to Minimize Nonresponse & Voluntary Response Bias

Smart survey habits make your data way more trustworthy.

Do:

Keep Surveys Concise: You respect your respondents' time when you keep your questions focused and necessary.

Personalize Invites: You make participants feel valued when you use their name and speak directly to their interests.

Test Incentives: You can experiment with rewards to see what actually motivates your specific audience.

Diversify Channels: You reach people where they already are when you use email, social media, SMS, and other platforms.

Monitor Drop-Off Points: You quickly spot where participants lose interest when you track where they stop answering.

Don’t:

Ignore Reminder Timing: You avoid annoying or losing your audience when you time reminders thoughtfully instead of bombarding or neglecting them.

Overload with Open-Ended Questions: You keep people from burning out when you balance open-ended items with easier, quicker questions.

Rely on a Single Mode: You connect with more types of respondents when you mix survey modes instead of using only one.

Forget Mobile Optimization: You make your survey accessible for busy people on the go when you ensure it works well on all devices.

Skip Bias Analysis: You protect your results from sneaky distortions when you always check for potential biases.

When you understand and address nonresponse bias and voluntary response bias, you can design surveys that deliver accurate, actionable insights. Remember, the quality of your data shapes the quality of your decisions, so treat each survey like it matters, because it does.
