31 Lessons Learned Survey Questions for Better Insights

Explore sample lessons learned survey questions that improve feedback, capture insights, and strengthen future project outcomes.

A lessons learned survey is a simple way to turn project experience into smarter future decisions, whether you think of it as a bank of project lessons learned questions, a retrospective form, or a source of practical lessons learned examples. You usually deploy these surveys at the end of a project, at key phase gates, after major incidents, or during regular review cycles, when you want honest feedback before memories get fuzzy. The real magic is choosing the right survey format, because different moments call for different lessons learned questions. The six types below, especially when run through an online survey tool, help you uncover insights you can actually use instead of filing them away in the corporate attic.

Post-Project Retrospective Survey

Why & When to Use

Classic end-of-project reflection

The post-project retrospective survey is the old reliable of the lessons-learned world, and honestly, it earned that reputation.

You use it when the work is complete, the deliverables are out the door, and the team finally has enough distance to see the whole picture without arguing about yesterday’s status update.

This type of lessons learned survey works best when you want broad, balanced feedback from everyone involved.

That includes project managers, team members, cross-functional partners, and sometimes leadership if they played an active role in delivery.

Because the project is finished, people can evaluate outcomes against the original goals, which makes the feedback more grounded and less emotional.

Here’s the thing: this survey is where many of your best lessons learned examples are born.

It helps you spot what actually worked, what quietly caused chaos, and what should never be allowed near the next project unless you enjoy avoidable pain.

A good retrospective survey gives you a clean snapshot of the full journey.

What happened at kickoff.

What changed during execution.

What helped the team perform.

What blocked progress when pressure increased.

What should become standard practice next time.

This is also one of the best places to ask direct project lessons learned questions, because the team can compare plans to actual outcomes with real evidence instead of guesswork.

Plus, when you collect responses across roles, patterns pop out fast.

One person may mention timeline slippage, while five others point to unclear approvals, and suddenly you are no longer guessing where the real issue lived.

If you run projects often, this survey can become a dependable engine for collecting lessons learned examples that improve planning, staffing, budgeting, communication, and governance.

And unlike dramatic postmortems in movies, this version usually involves fewer explosions and more spreadsheets.

Sample Questions

Use questions like these to pull out specific, useful answers instead of vague comments that sound wise but change nothing.

What objectives were fully met, partially met, or unmet in this project?

Which processes slowed progress and why?

What contributed most to team success?

Where did scope, schedule, or budget deviate from plan?

What should we start, stop, or continue doing on future projects?

These questions work because they cover outcomes, process, performance, constraints, and future action in one compact set.

They also help you build a reusable library of lessons learned survey questions that can be adapted across many teams and project sizes.

When people answer clearly, you get more than opinions.

You get evidence you can use to improve templates, meeting rhythms, approval paths, resource plans, and stakeholder communication.

On top of that, this survey type encourages whole-project thinking.

That matters because projects rarely fail because of one dramatic mistake.

More often, they wobble because of ten small things that nobody wrote down at the right time.

Intel’s retrospective research found response rates exceeded 70% when teams used short web surveys with multiple reminders and at least two weeks to respond (source).

How to create a survey in HeySurvey

1. Create a new survey

Start by opening a template, or begin from scratch if you want full control. HeySurvey lets you create a survey without signing up first, so you can explore the editor right away. Once your survey opens, you can give it an internal name and choose the basic structure that fits your goal. If you want a fast start, a pre-built template is often the easiest option.

2. Add questions

Click Add Question to insert your first question, then continue building your survey one question at a time. You can choose from text, multiple choice, scale, number, date, dropdown, file upload, or statement questions. For each question, write the text, add a description if needed, and mark it as required if respondents must answer before continuing. You can also add images, duplicate questions, and use branching so that different answers lead to different next questions. Depending on the experience you want, you can show one question per page or group multiple questions on a single page.

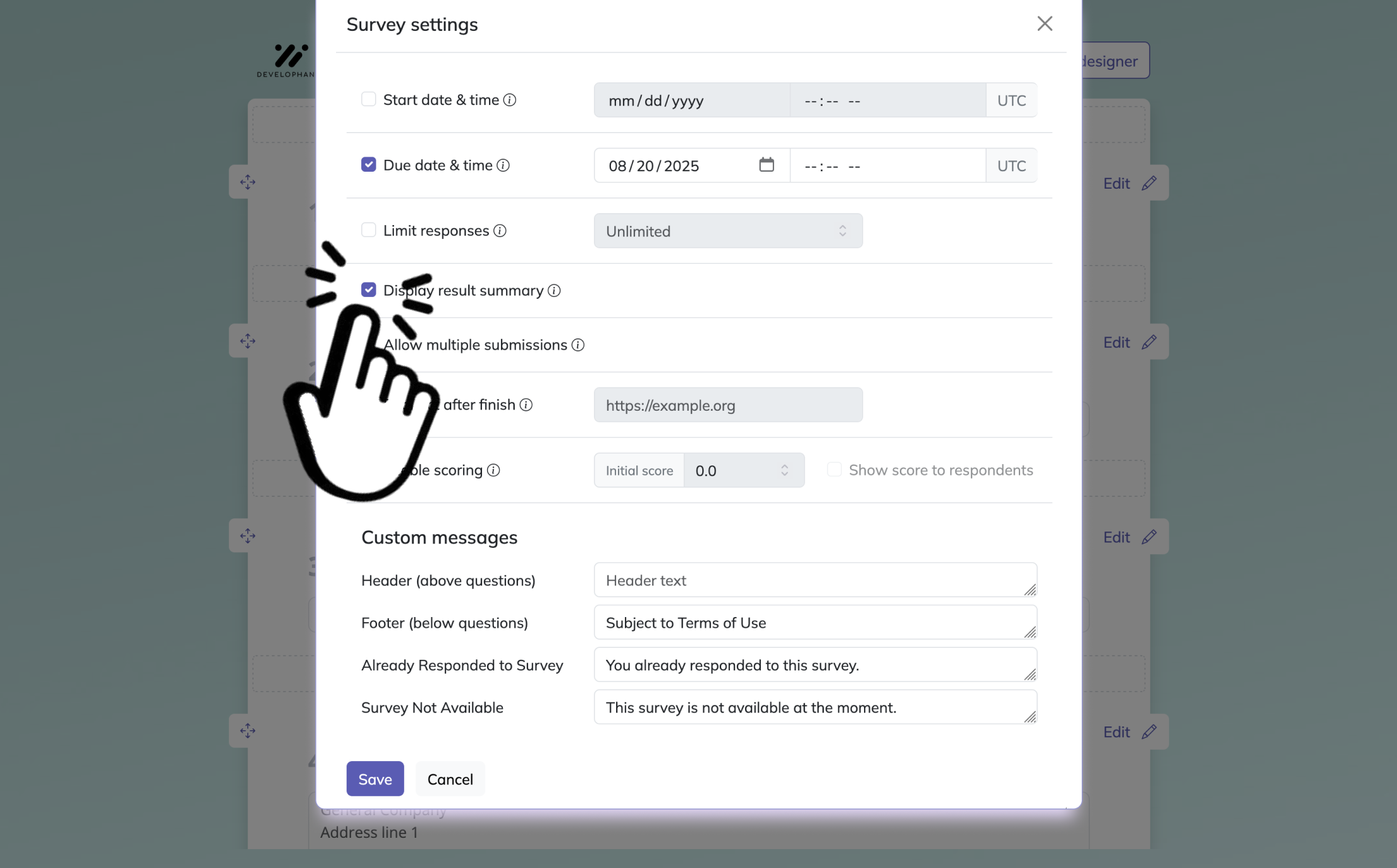

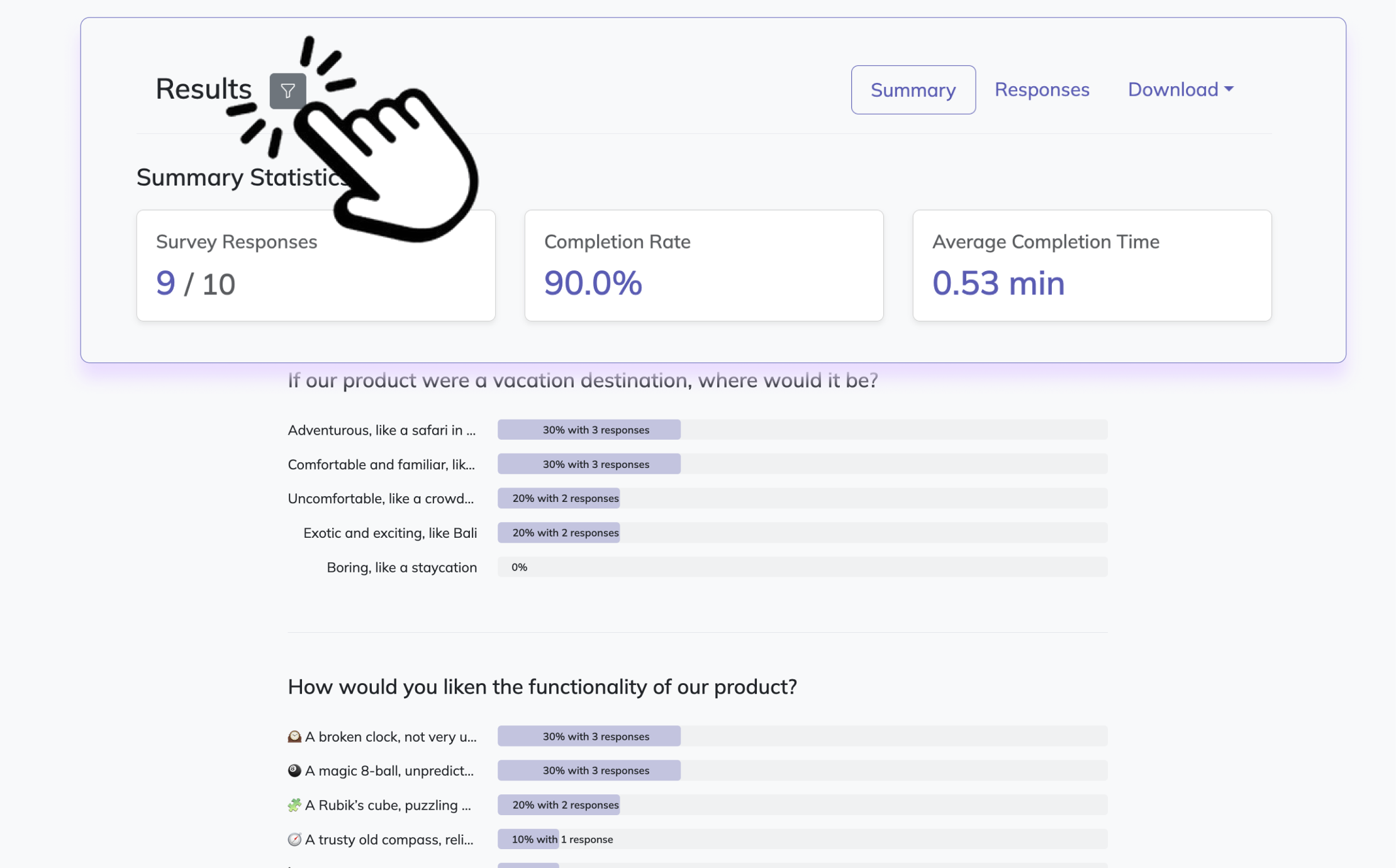

Bonus: Apply branding and settings

Before publishing, you can customize the look and behavior of your survey. Add your logo, change colors, fonts, backgrounds, and question card styles in the Designer Sidebar. In Settings, define start and end dates, response limits, or a redirect URL after completion. You can also choose whether respondents may view results.

3. Publish your survey

When everything looks right, preview your survey to test the flow, then click Publish to generate a shareable link. Publishing requires an account, so responses can be saved and viewed later. After publishing, you can share the link or embed the survey on your website.

Mid-Project Pulse Survey

Why & When to Use

Real-time course correction

A mid-project pulse survey is your chance to learn while the project still has a pulse, which is much more useful than discovering the obvious after the deadline has already sprinted past you.

You use this survey during execution, often at a milestone, phase checkpoint, monthly review, or whenever the team starts giving that subtle “we are fine” energy that usually means something is not fine.

This type of survey is built for in-the-moment lessons learned questions.

Instead of waiting until the end, you gather feedback while the team can still fix communication gaps, unclear priorities, workflow bottlenecks, or leadership support issues.

That makes this format especially valuable for longer projects, multi-phase programs, and fast-moving work where small issues become expensive if they linger.

The biggest strength of a mid-project pulse survey is speed.

You can identify risks while they are still just risks and not yet full-blown entries in a future presentation titled “What Went Wrong.”

A strong pulse survey helps you uncover:

Current blockers that threaten the next milestone.

Recent improvements worth repeating right away.

Team concerns that may not surface in meetings.

Confidence levels about near-term delivery.

Support needs from managers or sponsors.

Because the survey happens during active work, the wording should stay practical and immediate.

You are not asking people to write a memoir.

You are asking them to point at what needs attention now, what is helping now, and what should change now.

This survey also creates a healthy habit of reflection.

When teams know you regularly ask smart lessons learned questions, they start noticing patterns sooner and speaking up earlier.

Plus, pulse surveys send a useful message.

They show that feedback is not a ceremonial exercise saved for project funerals, but part of how you steer the work while it is still alive and kicking.

Sample Questions

The best mid-project surveys are short, focused, and aimed at decisions you can make immediately.

Which current risks could derail project goals if left unaddressed?

What recent process tweak improved efficiency the most?

Where are communication breakdowns occurring right now?

What immediate support do you need from leadership to succeed?

How confident are you that we will meet the next milestone?

These questions invite fast, specific answers.

They also make it easier to surface practical lessons learned examples before problems harden into habits.

When you review the responses, look for repeated themes rather than one-off complaints.

If several people mention approval delays, role confusion, or shifting priorities, you have likely found a genuine control point for improvement.

On top of that, confidence questions are especially useful.

If the team says it is only moderately confident about the next milestone, that is your cue to investigate dependencies, staffing, scope clarity, and decision speed.

A mid-project pulse survey may not feel dramatic, but it often delivers some of the most valuable lessons learned examples because the feedback arrives while action is still possible.

And that, politely speaking, is a better time to learn.

PMI guidance recommends using a project survey before lessons-learned sessions to capture issues across communication, resources, requirements, and implementation for actionable improvement (source).

After-Action Review (AAR) Survey for Critical Events

Why & When to Use

Fast feedback after high-stakes moments

An After-Action Review survey is designed for speed, clarity, and fresh memory, which makes it ideal after a critical event such as a launch, outage, sprint, incident, or high-pressure release.

You usually send it within 24 to 48 hours, before the details fade and before the story gets polished into something suspiciously tidy.

This survey type is not about broad project reflection.

It is about one event, one intense period, or one important outcome that deserves immediate analysis.

Because the timing is so close to the event, people can often describe what happened with better detail and less hindsight revision.

That makes AARs a rich source of concrete lessons learned examples.

They capture the difference between what was planned, what actually happened, and what influenced the result under real conditions.

That trio is gold if you want useful learning instead of generic “communication could improve” filler.

An effective AAR survey helps you uncover:

Whether assumptions matched reality.

What decisions helped in the moment.

Where resources or tools fell short.

What slowed response or execution.

What should change before a similar event happens again.

Here’s the thing: critical events reveal systems under pressure.

A process that looks fine during calm weeks may wobble instantly during a launch or incident, and that is exactly why this survey matters.

It helps you turn stressful moments into usable lessons learned examples rather than repeated headaches.

And yes, if the event was messy, people may have strong opinions.

That is not a flaw.

That is the raw material.

Your job is to channel that energy into structured lessons learned questions that produce clear takeaways, not a group reenactment of the disaster.

Sample Questions

Use focused AAR questions that compare plan and reality, because that gap is often where the best learning lives.

What was expected to happen versus what actually occurred?

Why did the outcome differ from the plan?

What single action had the greatest positive impact?

Which resource gap most limited performance?

What would you do differently if the same event happened tomorrow?

These questions are powerful because they move quickly from observation to explanation to action.

They also encourage incident-based storytelling, which is one of the most effective ways to collect memorable lessons learned examples.

When someone explains how a missing approval path delayed a launch, or how one well-timed escalation saved a sprint, you gain more than commentary.

You gain real operational learning that can shape playbooks, escalation rules, staffing models, and contingency planning.

Plus, the final question creates instant relevance.

It asks people to imagine a repeat scenario right away, which usually leads to sharper, more practical answers than abstract reflection does.

That is why AARs are so useful in technical teams, operations groups, delivery functions, and any environment where fast action matters.

If an event gets your heart rate up, it probably deserves an AAR survey.

Stakeholder Satisfaction Lessons Learned Survey

Why & When to Use

The outside view you really need

A stakeholder satisfaction lessons learned survey gives you the perspective your internal team cannot fully supply, because clients, sponsors, end users, and partners see the project from a different seat.

And sometimes that seat has a much clearer view of what landed well and what made everyone quietly sigh into a calendar invite.

You use this survey after a deliverable, phase completion, launch, or major handoff when you want external feedback on both outcomes and experience.

Internal teams often focus on execution details, but stakeholders tend to notice usefulness, responsiveness, clarity, value, and trust.

That makes this survey especially important if your work depends on repeat business, executive sponsorship, or user adoption.

A strong lessons learned survey for stakeholders helps you understand not just whether the project was completed, but whether it created the experience and value people expected.

That can reveal gaps that internal reviews miss.

For example, your team may celebrate finishing on time, while stakeholders remember confusing communication, too many revisions, or a final handoff that felt rushed.

The survey can surface insight across several areas:

Satisfaction with quality and usability.

Perception of team responsiveness.

Communication effectiveness.

Feature or capability priorities.

Willingness to work together again.

This type of feedback is especially useful because it often leads to more audience-centered lessons learned examples.

Instead of asking only what the team learned about itself, you discover what customers or sponsors learned about working with you.

That is a powerful distinction.

Plus, external feedback can validate what your internal team suspects.

If both groups point to unclear status communication or approval delays, you are no longer dealing with isolated opinions.

You are looking at a pattern that deserves action.

Sample Questions

Keep the questions simple, respectful, and easy for busy stakeholders to answer without needing a project archaeology session.

How satisfied are you with the final deliverable’s quality and usability?

Which project interactions exceeded your expectations?

Where did communication fall short of your needs?

What value-adding features should we prioritize next time?

Would you recommend working with this team again? Why or why not?

These questions help you capture both measurable satisfaction and richer narrative feedback.

They also produce practical lessons learned examples that connect project execution to customer experience.

When you review the responses, pay close attention to patterns in language.

If stakeholders repeatedly mention clarity, speed, accessibility, flexibility, or trust, those words point toward the qualities they value most.

On top of that, the recommendation question is quietly powerful.

It does not just ask whether people were satisfied.

It asks whether they would choose the relationship again, which often reveals the true emotional residue of the project.

That makes this survey useful not only for delivery improvement, but also for account management, service design, and reputation building.

Sometimes the best lessons learned examples come from the people who were never inside your daily workflow at all.

Research shows stakeholder satisfaction rises when projects emphasize clear objectives, effective communication, and strong collaboration across stakeholders (MDPI).

Process-Improvement Lessons Learned Survey

Why & When to Use

Fixing the system, not just the symptom

A process-improvement lessons learned survey is less about one project and more about the machinery behind many projects.

You use it periodically across teams, functions, or business units when you want to uncover recurring process friction, identify repeatable wins, and improve how work flows from one project to the next.

This survey is especially valuable in organizations that run similar projects repeatedly.

If the same bottleneck appears across multiple efforts, then the issue is probably not individual performance.

It is likely a structural process problem wearing different hats in different meetings.

That is why this survey helps you move from isolated frustration to systematic improvement.

It gives you a way to ask cross-project lessons learned questions that expose patterns across approvals, handoffs, tools, documentation, governance, and workflow design.

A well-built survey can reveal:

Which standard practices no longer earn their keep.

Which repeatable tactics create strong results.

Where handoffs consistently fail.

Which tools help or hinder execution.

What should become part of the official playbook.

Here’s the thing: teams often normalize inefficient processes because “that is just how we do it.”

A process-improvement survey politely challenges that logic and asks whether the current system is actually useful or just historically famous.

This survey also helps create more strategic lessons learned examples.

Instead of documenting one-off project stories, you build a broader knowledge base about how your operating model performs over time.

That can influence policy, templates, training, tooling, resource allocation, and governance design.

Plus, this format is great for organizations trying to scale.

When project volume increases, weak processes get louder fast.

A recurring process survey gives you a practical way to hear those weak spots before they become everyone’s full-time hobby.

Sample Questions

Use these questions to move the conversation from complaint to redesign.

Which standard procedure adds the least value and should be re-engineered?

What repeatable tactic has delivered the highest ROI across projects?

Where do hand-offs typically break down?

Which tools accelerate work vs. slow it down?

What new best practice should become part of our playbook?

These questions work because they ask people to compare process effort with process value.

That helps you identify where time is being spent without enough return.

They also make it easier to gather high-quality lessons learned feedback across recurring operations.

If one team says a tool saves hours while another says it creates duplicate work, that is a clue worth investigating.

If several respondents identify the same handoff issue, that points to a process redesign opportunity rather than a one-time annoyance.

On top of that, questions about repeatable tactics are especially useful because they help you scale success, not just reduce pain.

Many organizations are very good at documenting what broke.

Fewer are equally disciplined about documenting what quietly worked brilliantly and deserves a permanent place in the playbook.

That is where some of the strongest lessons learned examples come from.

Not from dramatic failures, but from humble systems that made work smoother, faster, and less likely to inspire emergency meetings.

Comparative Cross-Project Survey (Benchmarking)

Why & When to Use

Finding trends across multiple projects

A comparative cross-project survey is designed for PMOs, quality teams, operations leaders, and anyone else who wants to compare projects side by side instead of reviewing each one in isolation.

You use it after two or more projects are complete, especially when you want to benchmark performance, identify recurring risks, and discover which practices scale well across a portfolio.

This survey is powerful because isolated project reviews can miss broader patterns.

One project may appear unusually strong or unusually messy, but only comparison shows whether the result was a one-off or part of a trend.

That makes this format one of the richest sources of analytical lessons learned examples.

It helps you connect outcomes across schedule performance, risk response, stakeholder satisfaction, execution quality, and delivery consistency.

A comparative survey is useful when you want to answer questions like:

Which teams consistently deliver on time and why.

Which failure points show up across different project types.

Which risk controls actually work repeatedly.

How stakeholder experience changes from project to project.

What portfolio-wide change could improve results broadly.

This type of review is especially helpful in mature organizations that already collect project-level feedback but want to turn it into strategic intelligence.

You are no longer just asking what happened on one project.

You are asking what the collection of projects is trying to teach you, sometimes loudly, sometimes with the subtlety of a printer jam.

Because this survey compares multiple data points, it works well when paired with metrics.

Still, the value is not only in the numbers.

The survey adds explanation and context, helping you understand why one project outperformed another and which lessons learned examples can be transferred across teams.

Sample Questions

The best comparative surveys mix performance comparison with pattern recognition.

Which project achieved the best schedule performance index and why?

What common failure point appears in multiple projects?

Which risk-mitigation strategy consistently works across projects?

How do stakeholder satisfaction scores differ between projects?

What universal improvement could lift success metrics portfolio-wide?
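The first question above assumes respondents know what a schedule performance index is. It is a standard earned value management metric, and a minimal sketch of the usual definition, assuming the projects being compared track earned value (EV, the budgeted cost of work actually completed) and planned value (PV, the budgeted cost of work scheduled to be completed by that date), is:

```latex
\mathrm{SPI} = \frac{EV}{PV}
```

Under this convention, an SPI above 1 means a project has completed more work than planned by that point, below 1 means it is behind schedule, and exactly 1 means it is on plan. For example, a project with EV = 90,000 and PV = 100,000 has SPI = 0.9, meaning it has delivered about 90% of the work it planned to have done.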

These questions help you move from isolated storytelling to evidence-based learning.

They also support stronger benchmarking, because they prompt people to compare causes, not just outcomes.

For example, if one project had stronger schedule performance because decision rights were clearer, that insight may be more valuable than the schedule metric itself.

If several projects struggled with similar approval delays, vendor dependencies, or unclear scope boundaries, you have found a repeatable issue that deserves portfolio-level action.

Plus, comparative responses often generate some of the best lessons learned examples for leadership.

They are easier to defend because they are based on patterns across multiple projects, not just one team’s opinion after a rough week.

That makes this survey especially useful when you want to improve governance, standardize successful methods, or justify investment in process change.

In short, comparison gives your learning sharper teeth.

Best Practices: Dos and Don’ts for Crafting Lessons Learned Survey Questions

Dos and Don’ts

Good questions create useful action

The quality of your survey results depends heavily on the quality of your questions, which is both obvious and surprisingly easy to ignore when someone is rushing to send a form before lunch.

If your wording is vague, loaded, confusing, or too long, the answers will usually be vague, defensive, confused, or suspiciously short.

The best lessons learned questions are specific enough to guide useful answers, but open enough to invite honest reflection.

They connect feedback to a decision, a behavior, a process, or an outcome you can actually improve.

That is the difference between a survey that creates change and one that creates a PDF nobody opens again.

When crafting questions, keep these dos in mind:

Be specific so respondents know exactly what part of the work you want them to reflect on.

Tie each question to a decision point, action, or process you may change later.

Keep wording neutral so people feel invited to share rather than pushed toward one answer.

Place the most critical questions first in case respondents do not finish the full survey.

Promise follow-up actions and actually share what you learned and what you will do next.

Those habits help you collect stronger lessons learned examples because people can respond with real detail instead of trying to decode what the question means.

On top of that, they build trust.

When teams see that survey input leads to visible action, they are more likely to answer thoughtfully next time.

Now for the don’ts, because a badly written survey can make people feel like they are being cross-examined by a spreadsheet.

Avoid blame-focused language that singles out people instead of examining systems or decisions.

Avoid leading questions that suggest the “right” answer before people respond.

Avoid excessive length, because long surveys lower completion rates and drain the quality of later answers.

Avoid technical jargon without context, especially if the audience includes stakeholders outside the delivery team.

Avoid ignoring anonymity and privacy, particularly when the feedback touches conflict, leadership support, or failure points.

Here’s the thing: the goal is not to create dramatic answers.

The goal is to create usable ones.

Strong lessons learned survey questions feel fair, clear, and relevant, and they make it easier to collect practical, memorable lessons learned examples that keep your improvement efforts grounded in real experience.

Good survey design is not flashy.

But it quietly determines whether your learning process becomes useful or decorative.

You want useful.

Always useful.

Acting on feedback is what makes it count

Each survey type serves a different moment: retrospectives help you reflect at the end, pulse surveys help you adjust midstream, AARs help you learn fast after critical events, stakeholder surveys bring in external perspective, process-improvement surveys reveal systemic issues, and comparative surveys expose portfolio-wide patterns. The real value appears when you act on what people tell you, turning responses into better decisions, stronger workflows, and a growing library of lessons learned examples you can reuse. Plus, you do not need to choose only one format, because many teams get the best results by combining approaches across the project lifecycle. Tailor the sample questions to your environment, keep refining clear lessons learned questions, and build a practical repository of lessons learned examples that future teams will thank you for.

More Best Practices for Crafting Lessons-Learned Survey Questions

Writing effective survey questions is half science, half art. The right mix keeps your feedback honest, thorough, and truly actionable. Balance open-ended prompts and scaled questions for a survey that delivers both stories and stats.

Keep these best-practice tips in mind:

Use a blend of quantitative (rating scale) and qualitative (open text) items for well-rounded insights.

Limit your surveys to 10–15 high-impact questions to fend off fatigue and boost participation.

Always tailor language to the audience. Ditch jargon when surveying broad or mixed groups; nobody loves a pop quiz in corporate acronyms!

If feedback could be sensitive, prioritize anonymity and psychological safety, but don’t promise anonymity unless you can genuinely protect identities. Trust is everything.

Track trends over time by leveraging tools with analytics capabilities. These turn survey responses into data-driven project improvement opportunities.

Above all, remember:

Every question should be clear, necessary, and actionable.

Surveys are a dialogue, not a data dump. They should spark better future projects, not just fill a reporting quota.

In summary, lessons-learned surveys are your ticket to repeatable success and agile, informed teams. Crafted well and timed right, they're easy, fun, and essential for anyone who wants more win and less facepalm the next time around. Remember: feedback isn’t just about pointing out what broke. It’s your route to building something even greater, every single time.

Conclusion

Lessons-learned surveys light the way forward in any organization. When crafted with care, they empower teams to evolve, dodge repeat mistakes, and celebrate surprising wins. Apply the right questions at just the right moment to turn hindsight into foresight. With each survey, you’re rewriting your organization’s “how we get better” playbook. Let your next lesson be your greatest leap forward!
