31 Beta Testing Survey Feedback Questions Form Template

Explore sample beta testing survey questions and a feedback form template you can use to gather actionable user insights and improve product testing.

Beta testing is the moment when your product stops living in a cozy internal bubble and starts meeting real people with real opinions. Whether you are looking for a beta testing example, building a beta survey, or creating a beta tester application form, the goal is the same: collect feedback you can actually use. A smart survey process helps you validate the product, test market fit, and spot friction before launch day turns into panic day. This guide gives you a ready-to-use beta testing feedback form template across the full beta journey, using an online survey tool.

Mapping the Beta Feedback Journey: The 5 Essential Survey Types

When each survey fits in the beta cycle

The right survey at the right moment makes beta testing feel organized instead of chaotic.

Here’s the thing: most teams do not fail because they ask for feedback. They fail because they ask for the wrong feedback at the wrong time.

If you send a pricing question before a tester has even touched the product, you will get fluff. If you wait until the end to ask about onboarding friction, you will get vague memories and the digital version of a shrug.

A useful beta testing template follows the natural journey of your testers from first contact to final verdict. That journey usually looks like this:

Recruitment: You screen and select the right testers.

Pre-use: You capture expectations, habits, and pain points.

In-use: You gather feature reactions, usability feedback, and bug reports.

Post-beta: You measure satisfaction, value, and likelihood to continue.

Competitive benchmark: You compare your product against current alternatives.

Think of it like setting up five checkpoints instead of one giant feedback dump. Your future self, product team, and probably your sanity will all be grateful.

A quick way to picture the flow is this simple table:

| Beta stage | Survey type | Main purpose | Best timing |
|---|---|---|---|
| Recruitment | Beta Tester Application & Screening Survey | Select the right participants | Before access is granted |
| Pre-use | Pre-Use Expectations & Baseline Survey | Understand needs and current habits | Right after acceptance |
| In-use | In-Product Feature & Usability Survey | Capture fresh reactions during use | During key workflows |
| Post-beta | Post-Beta Satisfaction, NPS & Feature Prioritization Survey | Measure value and loyalty | At the end of the beta period |
| Competitive benchmark | Competitive & Market Benchmark Survey | Compare against alternatives | Near the end or just after beta |

Plus, each survey type in this article includes three things you can use immediately:

Why and when to send it.

Sample questions you can copy into a form.

Practical language built for real beta testing feedback.

That means you are not just getting theory. You are getting a beta testing feedback form template system that works as one continuous loop.

If you have ever searched for “questions to ask beta testers” and ended up with generic fluff like “Did you like the product?”, this article is here to rescue you. Nicely, of course.

A 2024 state-of-practice study found teams use in-product surveys, beta tests, and incident reports to validate requirements and bug fixes, supporting stage-specific beta feedback forms (ResearchGate).

How to create a survey with HeySurvey

You can begin with a template or start from scratch. If you do not have an account yet, you can still build your survey first and publish it later after signing in.

1. Create a new survey

Open HeySurvey and choose how you want to start: a blank survey, a pre-built template, or text-based creation. If you’re using a template, it gives you a ready-made structure that you can quickly adapt. Once the survey opens in the editor, you can also rename it so it is easy to find later.

Bonus: Before you continue, you may want to apply branding. Upload your logo, choose colors and fonts in the Designer sidebar, and adjust the background or question card style to match your organization.

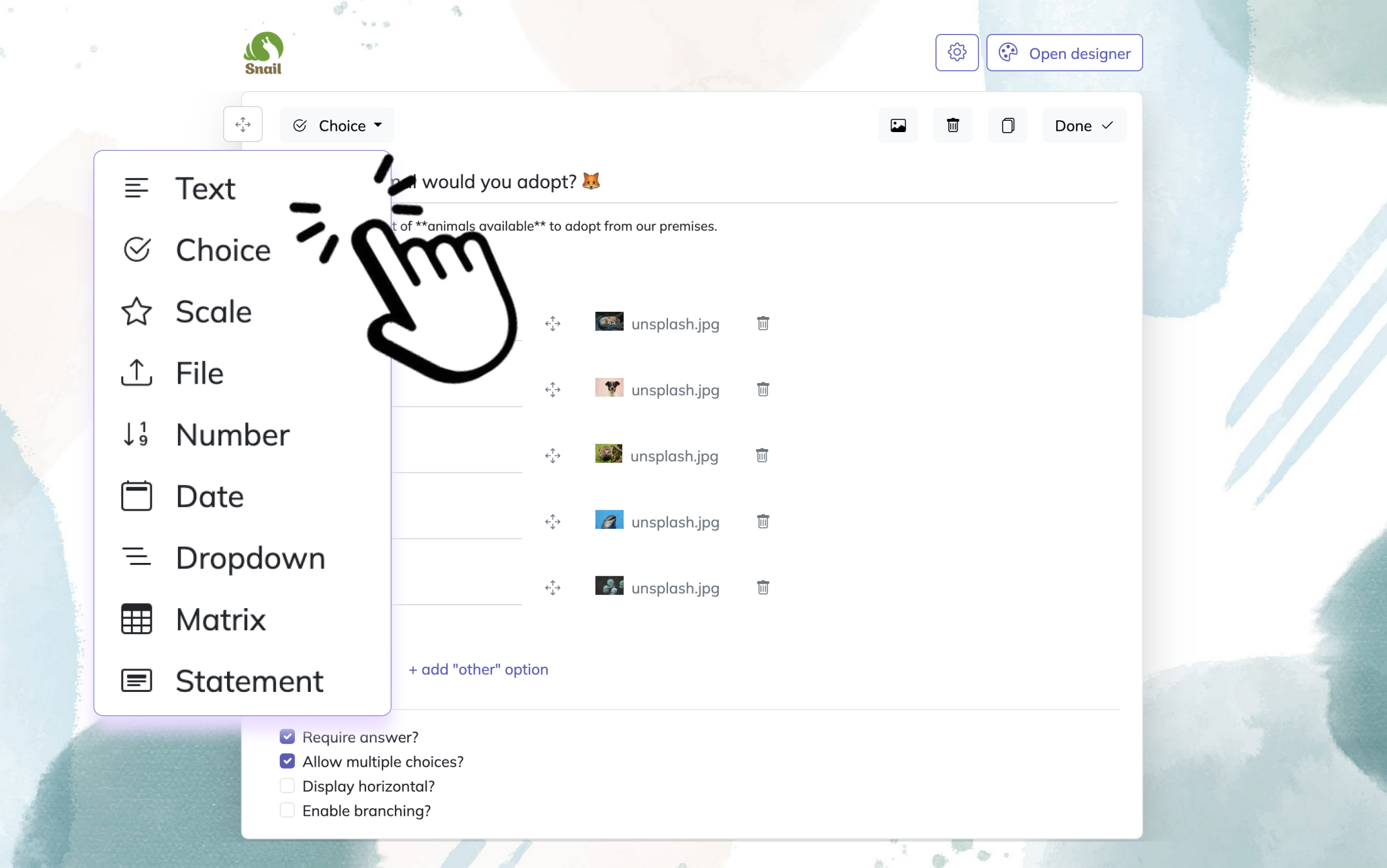

2. Add questions

Click Add Question to insert your first question, then keep adding more wherever needed. HeySurvey supports common question types like text, multiple choice, scale, number, date, dropdown, file upload, and statement. You can mark questions as required, add descriptions, and include images if helpful. For choice questions, you can also add an “Other” option or allow multiple answers.

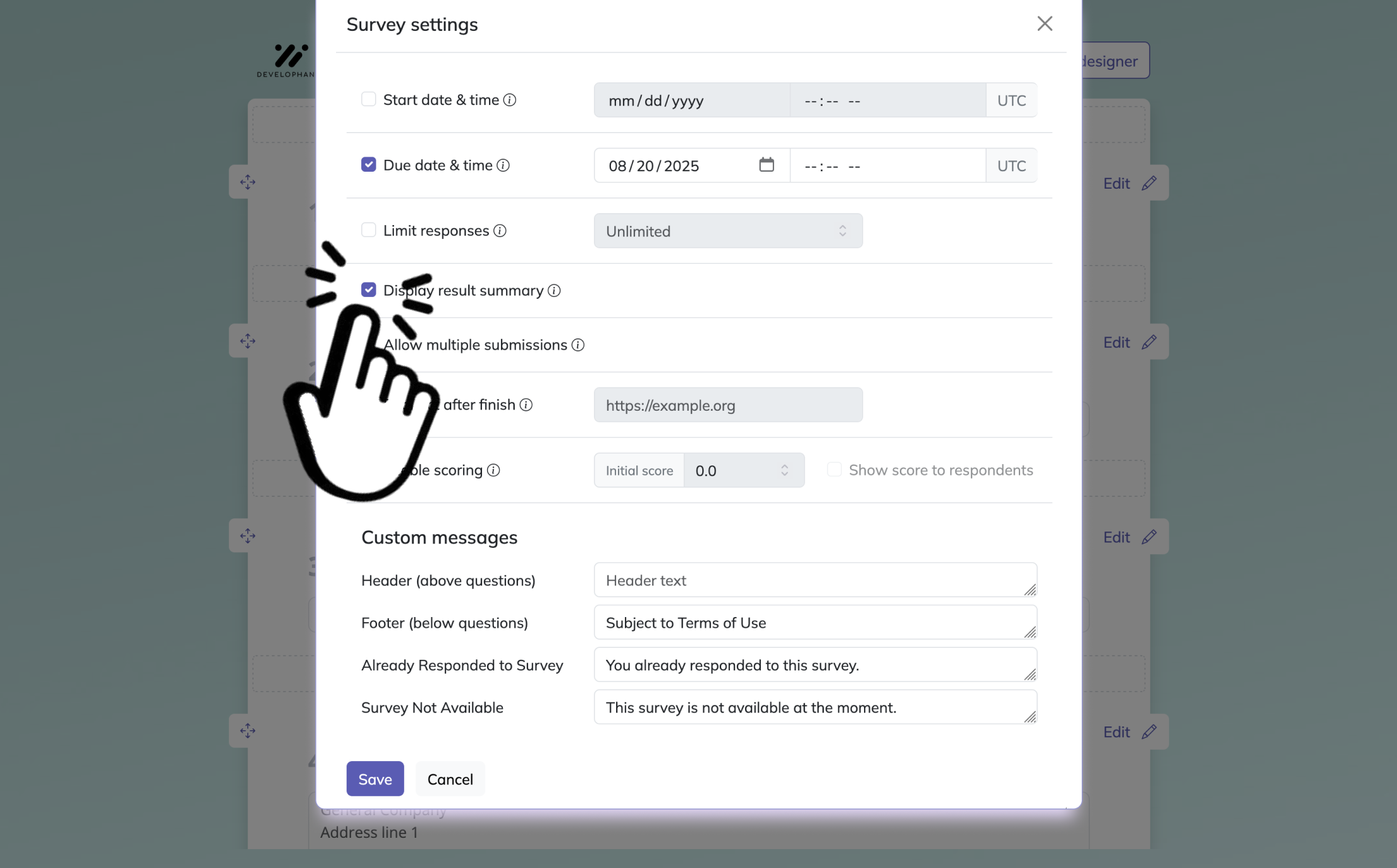

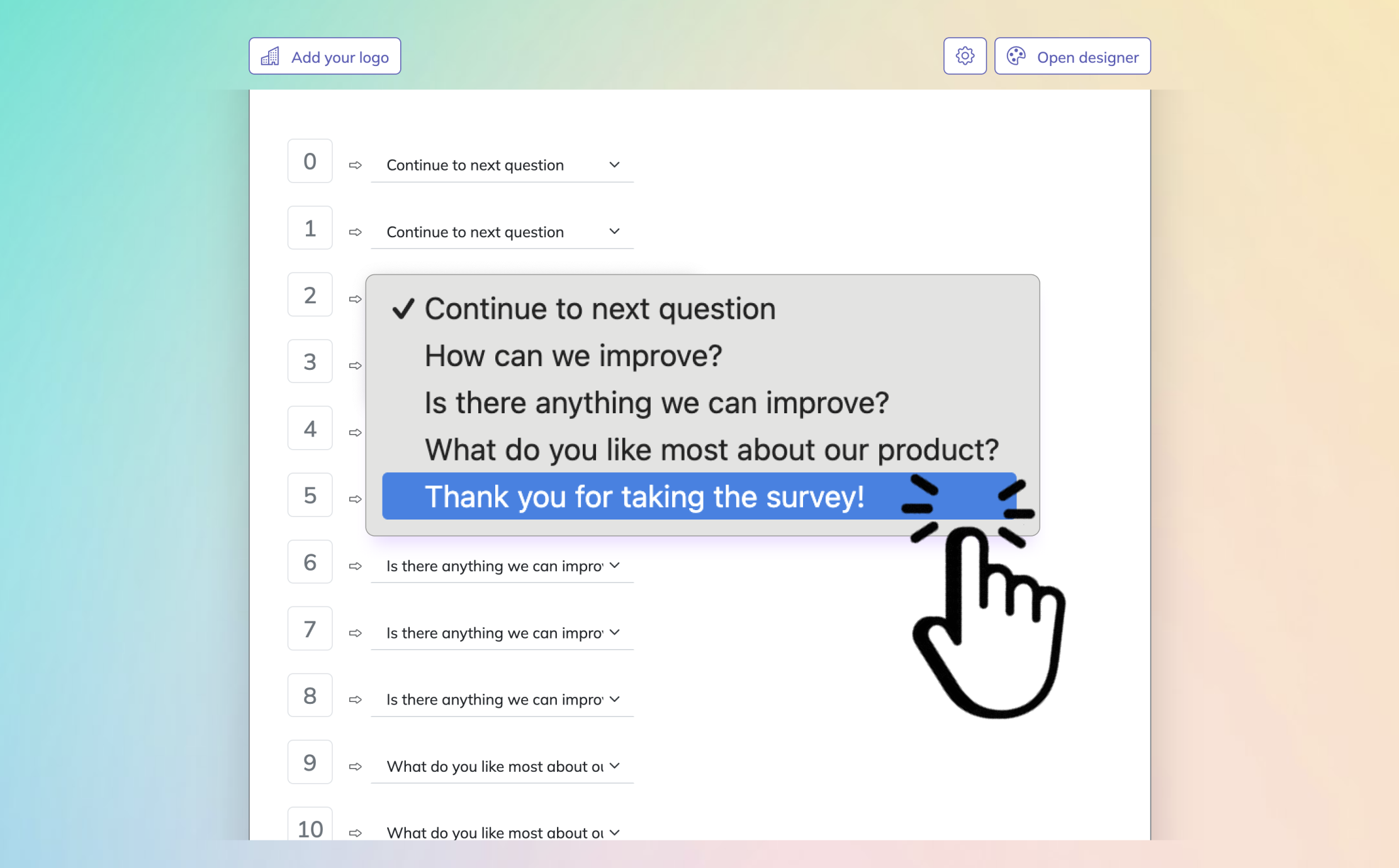

Bonus: Use the settings panel to define your survey rules, such as start and end dates, response limits, or a redirect URL after completion. If your survey needs different paths for different answers, set up branching so respondents skip to the next relevant question.

3. Publish your survey

Before publishing, preview the survey to check the flow, design, and wording. When everything looks right, click Publish to generate a shareable link. After publishing, your survey is ready to send to respondents and collect answers.

If you want, you can also embed the survey on your website with an iframe.

Survey Type #1 – Beta Tester Application & Screening Survey

Why and When to Use

A strong screening survey saves you from collecting feedback from the wrong crowd.

Before anyone enters your beta, you need to know whether they match your ideal user, have the right setup, and are likely to participate. This is where the beta tester application form earns its keep.

Your screening survey should go out before access is granted. It helps you filter applicants based on role, company size, use case, technical comfort, device type, and motivation.

This matters because not every eager sign-up is a useful tester. Some people love free access but vanish faster than office donuts on a Monday morning.

A good application survey helps you answer simple but important questions. Does this person represent your target market, and will they give detailed feedback instead of one mysterious line that says “it was okay”?

It also helps you segment testers from the beginning. You may want a mix of beginners, power users, mobile users, desktop users, or people switching from a competitor.

On top of that, this survey lets you set expectations. You can ask how much time they can commit, whether they are comfortable reporting bugs, and what motivates them to join.

That makes this survey useful for both selection and planning. It becomes your first working beta testing example of how structure leads to better insight.

Sample Questions to Include

Use these in your beta tester application form and adjust the wording to fit your product:

What is your job role, and how would you describe your day-to-day responsibilities?

Which industry do you work in, and how large is your company or team?

What made you interested in joining this beta program?

Have you used similar products before? If yes, which ones?

What device, browser, operating system, or technical setup do you plan to use during the beta?

How often do you expect to use this product during the beta period?

How comfortable are you with reporting bugs, sharing screenshots, or describing issues in detail?

What problem are you hoping this product will help you solve?

Have you participated in a beta test before? If yes, what did you like or dislike about that experience?

Are you willing to complete short follow-up surveys during the beta period?

This survey should stay focused, but not bare-bones. You want enough detail to screen effectively without making the form feel like a tax audit in disguise.

When done well, this first survey sets the tone for everything that follows. It tells testers that your beta is structured, thoughtful, and worth their time.
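If you export applications to a script or spreadsheet, the screening logic described above can be sketched as a simple point score. This is a minimal illustration, not a prescribed method: the `Applicant` fields, the target roles, the supported devices, and the acceptance threshold are all hypothetical and should be replaced with your own criteria.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    name: str
    role: str
    device: str
    hours_per_week: float    # time they say they can commit
    bug_report_comfort: int  # self-rated, 1 (low) to 5 (high)
    accepts_followups: bool  # willing to answer short follow-up surveys


# Hypothetical criteria; swap in your own target profile.
TARGET_ROLES = {"product manager", "designer", "developer"}
SUPPORTED_DEVICES = {"desktop", "ios", "android"}


def screening_score(a: Applicant) -> int:
    """Add one point per criterion the applicant meets (0 to 5)."""
    return sum([
        a.role.lower() in TARGET_ROLES,
        a.device.lower() in SUPPORTED_DEVICES,
        a.hours_per_week >= 1,
        a.bug_report_comfort >= 3,
        a.accepts_followups,
    ])


applicants = [
    Applicant("Ada", "Developer", "desktop", 3, 5, True),
    Applicant("Ben", "Student", "smart TV", 0.5, 2, False),
]

# Hypothetical threshold: accept anyone who meets at least 4 of 5 criteria.
accepted = [a.name for a in applicants if screening_score(a) >= 4]
print(accepted)
```

A score like this is only a first pass. It is still worth reading the open-text answers about motivation before making a final call.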

Careful screening surveys that verify target-user fit, technical setup, and motivation improve participant quality and reduce noisy feedback in beta and user research studies (source).

Survey Type #2 – Pre-Use Expectations & Baseline Survey

Why and When to Use

Baseline feedback gives you a before picture, which makes the after picture far more useful.

Once you accept testers, do not send them straight into the product with zero context gathering. First, ask what they expect, what pain points they face now, and what tools they already use.

This survey should be sent right after acceptance and before meaningful product use begins. Its main job is to capture expectations and current behavior so you can compare those answers against post-beta responses later.

Without a baseline, you are guessing at impact. With one, you can clearly see if your product changed perceptions, solved real problems, or simply looked pretty while doing very little.

This is the sweet spot for a beta product feedback survey template that focuses on needs instead of reactions. At this stage, testers have hopes, assumptions, and maybe a few unrealistic dreams, which is actually very useful data.

You also learn the language your audience uses to describe their problems. That helps with messaging, onboarding, and feature prioritization.

Plus, you can spot patterns before the beta even starts. If half your testers want one core outcome and your product delivers something else, that disconnect is worth knowing early.

This survey is especially helpful if you want to validate product-market fit. It ties your beta testing process to actual demand, not just feature opinions.

Sample Questions

These beta questions work well in a pre-use survey:

What is the biggest challenge you currently face in this area of your workflow?

How are you solving this problem today?

Which tools, products, or workarounds are you currently using?

What is the most frustrating part of your current solution?

What features or outcomes are you hoping to get from this product?

How important is solving this problem for you or your team right now?

What would a successful experience with this product look like for you?

Which feature are you most excited to try first?

How much time or money does your current process cost you?

If this product works well, what would improve most in your day-to-day work?

Here’s the thing: this survey is not just about collecting opinions. It is about setting up a measurable story.

Later, when you ask whether the product delivered value, you can compare the answer against what users wanted in the first place. That gives your beta testing template far more depth than a random set of end-of-test questions.
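One way to make that comparison concrete is to ask the same rating question in both surveys and look at the change per tester. The sketch below assumes a hypothetical 1 to 5 rating ("How well is this problem solved for you today?") and made-up tester IDs; neither comes from a specific survey tool.

```python
# Hypothetical 1-5 ratings keyed by tester ID, from the same question
# asked in the baseline survey and again in the post-beta survey.
baseline = {"t1": 2, "t2": 3, "t3": 4}
post_beta = {"t1": 4, "t2": 3, "t4": 5}


def rating_changes(before: dict, after: dict) -> dict:
    """Return after-minus-before for testers who answered both surveys."""
    return {t: after[t] - before[t] for t in before if t in after}


changes = rating_changes(baseline, post_beta)

# Guard against the case where no tester answered both surveys.
average_change = sum(changes.values()) / len(changes) if changes else 0.0

print(changes)
print(average_change)
```

Testers who answered only one of the two surveys are left out, so the average reflects matched pairs only.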

And yes, expectations can be wildly optimistic. That is fine. Sometimes users expect a magic wand, and your job is to figure out whether they at least got a really solid toolbox.

Survey Type #3 – In-Product Feature & Usability Survey

Why and When to Use

Fresh feedback beats foggy memory almost every time.

Your in-product survey is where you capture what users think while they are actively using the product. This is often the most tactical and actionable part of the full beta testing feedback form template.

Send these surveys during or immediately after key workflows. You can trigger them after onboarding, after a feature is used, after a task is completed, or when a user exits a page where friction often happens.

This is where you learn whether a feature is easy to find, simple to use, and worth keeping. You also uncover usability issues, broken flows, unclear labels, and bugs before they harden into launch-day problems.

If your team works in software development, this is the survey type that most directly answers the classic question: which questions work best when beta testing software? The answer is simple. Ask about actions, effort, confusion, and outcomes.

Do not rely on one giant open text box that says “Share feedback.” That invites vague comments like “it feels weird,” which is technically feedback but not the kind your product team can fix before lunch.

Instead, ask focused questions tied to real tasks. Mix quick scales with one or two open-ended prompts.

This is also the ideal place for bug collection. If you ask for reproduction steps while the issue is fresh, your odds of getting useful detail go way up.

Sample Questions

Use these beta testing survey questions during active product use:

What task were you trying to complete when you used this feature?

How easy or difficult was it to complete that task?

Were you able to find this feature without help? Why or why not?

Which part of this experience felt confusing, slow, or frustrating?

Did anything behave unexpectedly or appear broken during this task?

If you encountered a bug, what steps led to it?

How severe was the issue you experienced on a scale from minor annoyance to complete blocker?

What would have made this feature easier to use?

How confident do you feel using this feature again on your own?

If you could change one thing about this flow right now, what would it be?

You can also break these into smaller micro-surveys instead of one longer form. That usually improves response quality because people are more willing to answer two or three questions in the moment than a surprise wall of text.
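The micro-survey idea can be sketched as a small lookup from product events to two or three questions. The event names and the once-per-session rule below are assumptions made for illustration; your product and survey tool will have their own triggers.

```python
# Hypothetical event names; use whatever events your product actually emits.
MICRO_SURVEYS = {
    "onboarding_completed": [
        "How easy or difficult was it to get set up?",
        "Which part of this experience felt confusing, slow, or frustrating?",
    ],
    "feature_used": [
        "What task were you trying to complete when you used this feature?",
        "How easy or difficult was it to complete that task?",
        "What would have made this feature easier to use?",
    ],
    "task_failed": [
        "Did anything behave unexpectedly or appear broken during this task?",
        "If you encountered a bug, what steps led to it?",
    ],
}

MAX_QUESTIONS = 3  # keep each prompt to two or three questions


def questions_for(event: str, surveyed_this_session: bool) -> list[str]:
    """Pick the micro-survey for an event, or nothing if the tester was just asked."""
    if surveyed_this_session:
        return []
    return MICRO_SURVEYS.get(event, [])[:MAX_QUESTIONS]


print(questions_for("feature_used", surveyed_this_session=False))
```

Skipping testers who were already surveyed in the same session is one simple way to avoid interrupting the same person repeatedly.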

On top of that, context matters. If someone reports confusion right after using a feature, you can trust that feedback far more than a vague memory shared three weeks later.

This is where your beta survey starts acting like a product improvement engine. Tiny fixes found here often create huge gains later.

And yes, this is the point where users discover that the button you thought was obvious is apparently hiding in plain sight like a ninja with a tooltip.

Research shows contextual inquiry reduces recall problems by capturing feedback in users’ actual environment, making in-product surveys more reliable for actionable usability insights (GOV.UK).

Survey Type #4 – Post-Beta Satisfaction, NPS & Feature Prioritization Survey

Why and When to Use

End-of-beta surveys turn scattered impressions into a clear product verdict.

After testers have spent enough time with the product, you need to zoom out. This survey measures how they feel overall, whether they would keep using the product, and which features matter most.

Send this at the end of the beta period, once users have enough experience to judge the product fairly. If you send it too early, you get half-formed reactions instead of a full picture.

This survey helps you answer the biggest launch questions. Did users get value, would they recommend the product, would they pay for it, and what should you improve first?

That makes it one of the most strategic pieces of your beta testing feedback process. It is no longer just about bugs and friction. It is about satisfaction, loyalty, and commercial potential.

This is the right place to include NPS, retention intent, and feature prioritization. It helps you separate “nice feature” from “must-have feature,” which is a very important distinction if time and resources are limited.

Plus, if you compare these results against your baseline survey, you can measure change. Did the product solve the original pain point, exceed expectations, or leave users politely unimpressed?

The answers here can shape launch messaging, onboarding changes, pricing strategy, and roadmap decisions. In other words, this survey is where your beta graduates from test mode to business mode.

Sample Questions

Use these in your beta testing feedback form template for post-beta analysis:

On a scale from 0 to 10, how likely are you to recommend this product to a friend or colleague?

Overall, how satisfied are you with your experience during the beta?

How likely are you to continue using this product if it becomes publicly available?

How likely would you be to pay for this product based on your current experience?

Which three features did you like the most?

Which three features did you like the least or find least valuable?

What is the main reason for the score you gave above?

Which feature had the biggest positive impact on your workflow?

What is the one improvement that would most increase your likelihood to keep using the product?

If this product launched today, how disappointed would you be if you could no longer use it?

A post-beta survey should blend numeric ratings with open responses. The ratings show patterns, while the written answers tell you why those patterns exist.

Here’s the thing: a strong NPS score is great, but it does not tell you which feature made people care. You need both the score and the explanation.
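For reference, NPS is calculated from the 0 to 10 recommendation question as the percentage of promoters (scores of 9 or 10) minus the percentage of detractors (scores of 0 to 6). A minimal sketch with made-up responses:

```python
def net_promoter_score(scores: list[int]) -> int:
    """Percent promoters (9-10) minus percent detractors (0-6), from -100 to 100."""
    if not scores:
        raise ValueError("at least one response is required")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return round(100 * (promoters - detractors) / len(scores))


# Hypothetical answers to the 0-10 "how likely are you to recommend" question.
responses = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
nps = net_promoter_score(responses)
print(nps)
```

Scores of 7 and 8 count as passives: they are included in the total but add to neither group. With a small beta group, a few responses can swing the number a lot, so read it alongside the written reasons.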

This is also the best moment to ask about future intent. Users now have enough context to judge value with more realism.

If they still say they would pay, refer, or switch, that is strong evidence your beta testing template is doing its job. If not, at least you found out before the launch confetti cannon misfired.

Survey Type #5 – Competitive & Market Benchmark Survey

Why and When to Use

Benchmark feedback shows whether your product is merely good or actually better.

A product can earn positive feedback and still lose in the market if competitors solve the same problem more clearly, cheaply, or completely. That is why the competitive benchmark survey matters.

This survey works best near the end of the beta or immediately after it. By then, testers have enough hands-on experience with your product to compare it against what they already use.

The goal is not to fish for compliments. The goal is to understand how your product stacks up in the real world.

Ask users what alternative they would choose if your product disappeared tomorrow. Ask what your product does better, worse, and not at all.

This gives you market context, which raw satisfaction scores alone cannot provide. A product can score well in isolation and still fail if switching feels too hard or the value gap is too small.

This survey is especially helpful for teams entering a crowded category. It can also reveal whether your strongest edge is feature depth, ease of use, onboarding speed, collaboration, reporting, automation, or price perception.

On top of that, it gives you sharp language for positioning. Users often explain differences in plain English better than internal teams do.

You do not need a giant strategy deck to start. A practical beta test example can begin with a few focused comparison questions and grow from there.

Sample Questions

Use these prompts in your competitive survey, or adapt them into your own beta testing template:

Which product, tool, or workaround would you most likely use if this product were not available?

Compared with your current solution, how satisfied are you with this product overall?

What does this product do better than the alternative you currently use?

What does the alternative do better than this product today?

Which missing feature or capability would make switching easier for you?

How difficult would it be for you or your team to switch from your current solution to this product?

How would you rate this product’s value for money compared with other options you know?

What concerns would stop you from adopting this product fully?

Which feature feels like a must-win advantage for this product in the market?

If you had to choose one product today, what would you choose and why?

If you want richer answers, ask users to compare one specific workflow instead of the whole product. Broad comparisons can get fuzzy, while specific ones reveal actionable insight.

Plus, competitive feedback often uncovers hidden blockers. Sometimes the issue is not your feature set. It is migration effort, trust, integration needs, or pricing assumptions.

This survey gives your beta testing feedback commercial sharpness. It helps you understand whether your product is just promising or truly competitive.

And yes, sometimes users will admit they stay with an inferior tool because “everyone already knows it.” Annoying, but useful.

Best Practices: Dos and Don’ts for Collecting Actionable Beta Feedback

What to do and what to avoid

Small survey improvements create much better feedback quality.

Even the best questions will underperform if your process is sloppy. Good beta feedback depends on timing, clarity, structure, and follow-through.

The first rule is simple. Keep surveys short enough that people will actually finish them.

If every form feels like a side quest, response rates will sink. Most testers are willing to help, but they still have jobs, lives, and at least twelve browser tabs open.

The second rule is to match the survey to the moment. Ask expectation questions before use, usability questions during use, and satisfaction questions after enough experience has built up.

Third, mix quantitative and qualitative questions. Numbers reveal patterns, while comments explain those patterns.

Fourth, offer a small incentive if the beta asks for meaningful effort. It could be early access, gift cards, discounts, or product perks.

Fifth, close the feedback loop. If testers report something useful and later see it fixed, trust goes up fast.

Here is a skim-friendly checklist of best practices for beta tester feedback:

Keep each survey focused on one stage of the beta journey.

Use clear language instead of internal product jargon.

Ask questions tied to real behavior, not vague feelings alone.

Time surveys close to the experience you want feedback on.

Include at least one open text field for unexpected insights.

Make forms mobile-friendly and quick to complete.

Segment responses by user type, device, plan, or experience level.

Thank testers and show them their input matters.
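Segmenting responses does not need special tooling. As a rough sketch, assuming each exported response is a dictionary with hypothetical `segment`, `device`, and `satisfaction` keys, you can group and average like this:

```python
from collections import defaultdict
from statistics import mean

# Hypothetical exported responses; the field names are illustrative only.
responses = [
    {"segment": "beginner", "device": "mobile", "satisfaction": 3},
    {"segment": "beginner", "device": "desktop", "satisfaction": 4},
    {"segment": "power user", "device": "desktop", "satisfaction": 5},
]


def average_by(rows: list[dict], key: str, metric: str = "satisfaction") -> dict:
    """Group rows by `key` and return the mean of `metric` for each group."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row[metric])
    return {name: mean(values) for name, values in groups.items()}


by_segment = average_by(responses, "segment")
by_device = average_by(responses, "device")
print(by_segment)
print(by_device)
```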

Just as important are the things you should not do:

Do not ask leading questions that push users toward praise.

Do not overload one survey with every question your team has ever imagined.

Do not ignore negative feedback because it stings a little.

Do not bury bug reporting inside unrelated satisfaction forms.

Do not forget to test your own form before sending it out.

Here’s the thing: bad survey design can make good products look confusing and weak. Good survey design helps you see what is actually happening.

A polished beta testing example is not about sounding smart. It is about making it easy for testers to tell you the truth.

Plug-and-Play Beta Testing Survey Feedback Form Template

How to stitch the five survey blocks into one usable system

A complete beta testing survey system works best when each form has a single job.

If you want an end-to-end beta testing feedback form template, the smartest move is to combine the five survey types into one structured workflow. You are not building one giant mega-form. You are building a sequence.

Start with the application form to screen testers. Then send the baseline survey after acceptance, followed by short in-product surveys during use, then the post-beta survey, and finally the competitive benchmark survey.

That sequence gives you a clean story from user fit to user outcome. It also helps you compare expectations against actual experience.

You can build this in Google Forms, Typeform, or any survey tool that supports logic, hidden fields, and response tagging. If your tool allows conditional branching, use it.

For example, if a tester says they experienced a bug, you can show follow-up questions for steps, severity, and screenshots. If they say they have never used a competitor, skip the competitive comparison questions.
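Most form builders let you configure this kind of branching visually, but the underlying rule is easy to express in code. The sketch below is tool-agnostic; the answer keys `experienced_bug` and `used_competitor` are hypothetical names, not fields from any particular product.

```python
def follow_up_questions(answers: dict) -> list[str]:
    """Return the follow-up questions a tester should see next."""
    questions = []
    # Show bug details only to testers who reported a bug.
    if answers.get("experienced_bug"):
        questions += [
            "If you encountered a bug, what steps led to it?",
            "How severe was the issue, from minor annoyance to complete blocker?",
            "Can you attach a screenshot?",
        ]
    # Skip the comparison block for testers who never used a competitor.
    if answers.get("used_competitor"):
        questions += [
            "What does this product do better than the alternative you currently use?",
            "What does the alternative do better than this product today?",
        ]
    return questions


tester = {"experienced_bug": True, "used_competitor": False}
for question in follow_up_questions(tester):
    print(question)
```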

Here is a practical field structure for your plug-and-play beta testing template:

Form 1: Tester name, email, role, industry, current tools, motivation, technical setup, time commitment.

Form 2: Current pain points, existing workflow, expected benefits, desired features, urgency of problem.

Form 3: Feature used, task attempted, ease rating, confusion points, bug details, suggested improvement.

Form 4: NPS score, satisfaction score, value perception, willingness to pay, favorite features, least favorite features, retention intent.

Form 5: Current alternative, comparison rating, switching barriers, must-win features, price perception, final choice.
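If you track responses outside the survey tool, the same field structure can be written down as a small schema. This is only a sketch: the snake_case field names are invented for illustration, and the completeness check is one possible use.

```python
# Field names are hypothetical labels for the fields listed above.
BETA_FORMS = {
    "Form 1": ["name", "email", "role", "industry", "current_tools",
               "motivation", "technical_setup", "time_commitment"],
    "Form 2": ["pain_points", "existing_workflow", "expected_benefits",
               "desired_features", "problem_urgency"],
    "Form 3": ["feature_used", "task_attempted", "ease_rating",
               "confusion_points", "bug_details", "suggested_improvement"],
    "Form 4": ["nps_score", "satisfaction_score", "value_perception",
               "willingness_to_pay", "favorite_features",
               "least_favorite_features", "retention_intent"],
    "Form 5": ["current_alternative", "comparison_rating", "switching_barriers",
               "must_win_features", "price_perception", "final_choice"],
}


def missing_fields(form: str, response: dict) -> list[str]:
    """List fields left blank; a numeric 0 (e.g. an NPS of 0) counts as answered."""
    return [f for f in BETA_FORMS[form] if response.get(f) in (None, "")]


response = {"pain_points": "Manual reporting", "existing_workflow": "Spreadsheets"}
print(missing_fields("Form 2", response))
```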

You can also customize the forms with branding, short intros, and logic based on persona. Keep the wording simple and consistent so testers do not feel like each form came from a different planet.

Plus, label your scales clearly. If you use ratings, explain what the endpoints mean.

If you want a copy-ready format, you can paste the exact sample questions from each section into your form builder and group them by stage. That instantly gives you a working beta testing feedback form template with room to tailor based on product type, audience, and beta length.

On top of that, consider adding one short thank-you screen after each survey. It sounds small, but it reinforces that this is a thoughtful program, not a feedback vacuum.

So yes, you can absolutely build a solid beta survey system without overcomplicating it. Keep each form focused, send it at the right moment, and use the answers to make decisions, not just collect documents that sit quietly in a folder pretending to be useful.

A strong beta process is really a series of smart questions asked at smart times. With the right beta testing template, you get clearer signals, better product decisions, and fewer last-minute surprises. Copy the survey blocks, customize them to your workflow, and make your next beta feel less like guesswork and more like a plan. Your testers will thank you, and your product team might even smile on a Monday.
