30 Bad Survey Questions Examples to Avoid

Discover examples of bad survey questions so you can avoid biased results, improve your surveys, and spot common mistakes in questionnaire design.

Bad survey questions do more than create messy spreadsheets. They quietly sabotage decisions, waste budget, and make you feel oddly confident about data that should have gone straight to the trash. In practice, “bad” can mean leading, vague, loaded, confusing, overlapping, or too absolute. If you want reliable insights, you need to spot these traps early, because bad survey questions can turn even a smart research project into a very polished guessing game.

Leading Survey Questions – How They Sway Respondents

Definition & Impact

Leading questions push people toward the answer you want, not the answer they actually believe.

That happens when your wording suggests the “right” response through praise, assumptions, emotional framing, or social pressure.

A question like “How much did you enjoy our excellent customer service?” already tells respondents what they are supposed to think.

That makes your results look cleaner than reality, which is great for your ego and terrible for your data.

If you use leading survey questions, you are not measuring opinion as much as you are measuring compliance.

People often follow the cue because they want to be agreeable, move quickly, or avoid sounding negative.

Plus, even a small nudge can distort findings when the survey is sent to a large audience.

This is why examples of leading survey questions matter so much when you are training teams or reviewing drafts.

If you want trustworthy feedback, your wording has to stay neutral even when your brand team is very proud of itself.

Here’s the thing: a survey should behave like a mirror, not a hype reel.

Why & When This Question Type Still Shows Up

Leading questions usually sneak in when you are rushing.

A tight deadline makes people focus on finishing the form, not stress-testing every line for bias.

They also appear when stakeholders already have a preferred answer and want the survey to “confirm” it.

That can happen in product launches, employee engagement studies, political polling, donor outreach, and customer satisfaction research.

Sometimes the issue is less dramatic.

A writer may simply know too much about the topic and accidentally frame the question from an insider viewpoint.

On top of that, teams often confuse enthusiasm with clarity.

If your company loves a feature, you may describe it with flattering language without realizing you are steering respondents.

That is how biased survey questions get born in respectable offices with nice coffee machines.

5 Sample Leading Questions to Avoid

Don’t you agree our product is the best option on the market?

How helpful was our amazing support team during your issue?

Why do you prefer our fast and reliable delivery service over competitors?

Most smart shoppers choose eco-friendly packaging. Do you support our new packaging update?

How much did our improved app make your work easier?

These are classic examples of bad survey questions because each one plants an assumption before the respondent even answers.

Some assume agreement.

Some assume a positive experience.

Some use flattering or social language to make disagreement feel awkward.

A Better Way to Ask

You can fix most leading questions by removing opinion words and baked-in assumptions.

Try simple, direct wording like:

How would you rate our customer support?

Which delivery service do you prefer, if any?

How has the app update affected your work, if at all?

Clean language gives respondents room to think for themselves.

And that is the whole point.

A 2024 survey study found that negative wording and structurally or contextually leading questions increase measurement error in survey responses (Springer).

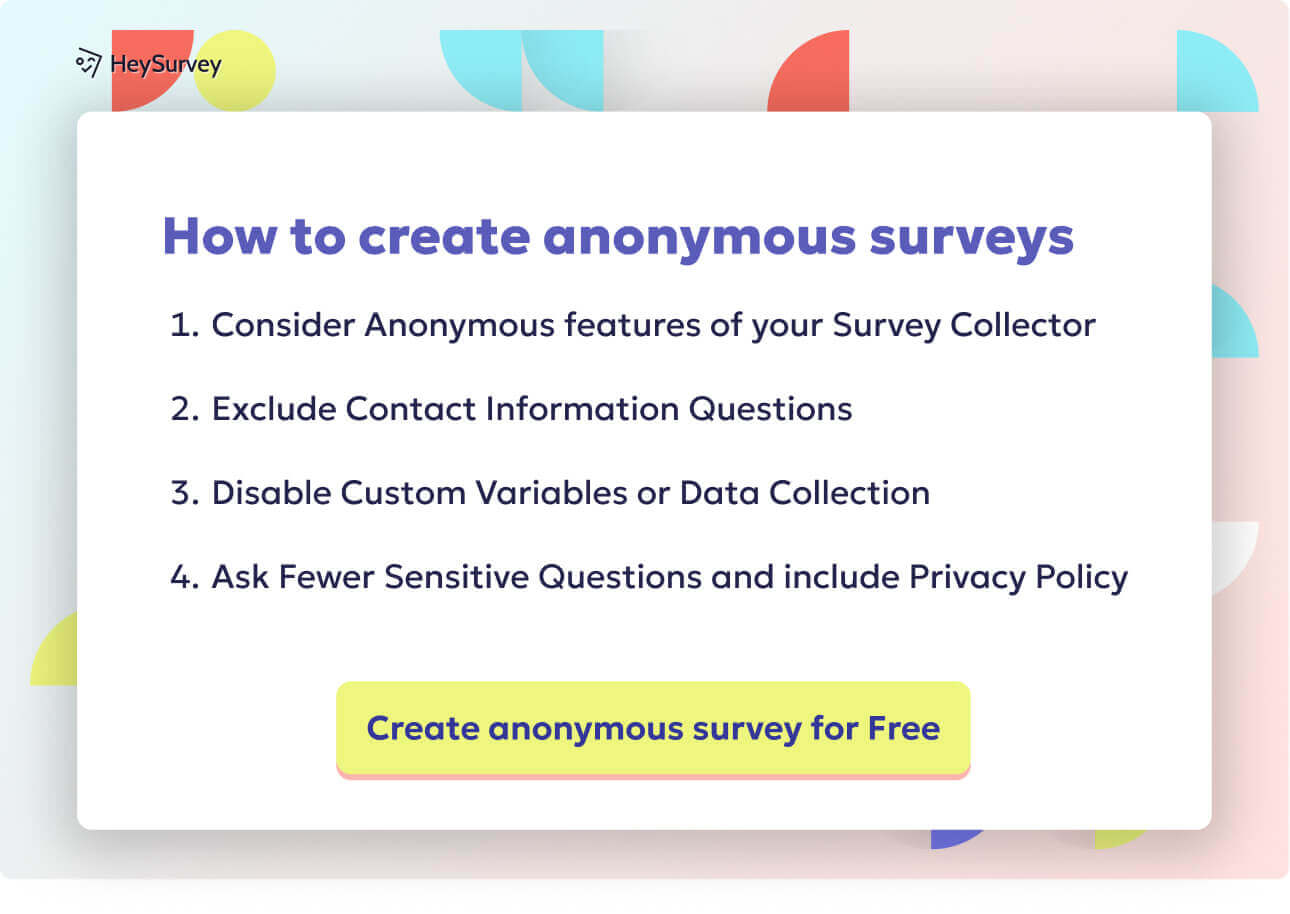

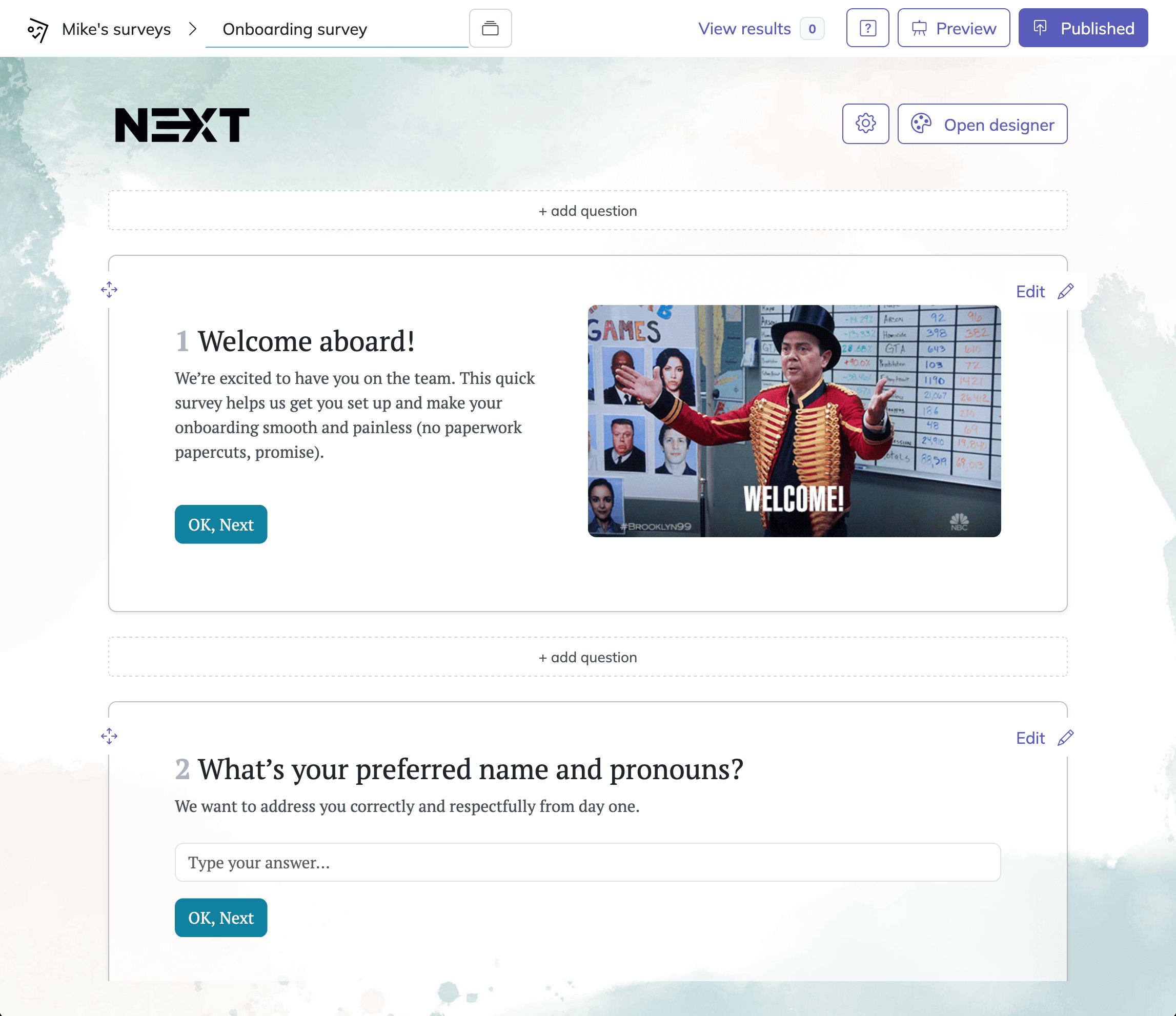

Creating a survey in HeySurvey is quick and easy, even if you’ve never used an online survey tool before. You can start from scratch or open a ready-made template.

Step 1: Create a new survey

Click Create New Survey or open a template to get started. If you choose a template, it gives you a head start with a simple structure you can edit. HeySurvey opens the survey editor, where you can give your survey a name and begin building it right away. You do not need an account to start editing, but you will need one to publish your survey and collect responses.

Step 2: Add questions

Use the Add Question button to include the questions you want to ask. HeySurvey supports many question types, such as text fields, multiple choice, scales, dropdowns, numbers, dates, and file uploads. You can mark questions as required, add descriptions, duplicate questions, and even include images. For a smooth survey experience, keep the questions clear and simple. If needed, you can also add branching so respondents go to different next questions based on their answers.

Bonus: Apply branding and settings

Before publishing, you can customize the survey’s look and behavior. Add your logo, choose colors and fonts, or adjust the background in the designer. In the settings panel, you can set start and end dates, response limits, and redirect URLs after completion.

Step 3: Publish your survey

When everything looks good, click Preview to test the survey, then Publish to make it live. HeySurvey will give you a shareable link you can send to respondents or embed on your website.

Double-Barreled Survey Questions – Two Questions Masquerading as One

Definition & Impact

A double-barreled question asks about two different things but allows only one answer.

It sounds efficient, but it creates a mess.

When you ask, “How satisfied are you with our pricing and product quality?” you are combining two separate ideas that may produce two very different opinions.

A respondent might love the quality and hate the pricing.

But your single response field forces them to pick one answer that cannot accurately represent both views.

That means the data becomes blurry before analysis even begins.

This is one of the most common examples of bad survey questions because it often looks harmless at first glance.

You may think you are shortening the survey.

In reality, you are compressing meaning until it stops being useful.

If you collect enough double-barreled items, you end up with results that are impossible to interpret confidently.

You cannot tell what people are reacting to.

Is the score low because of service, speed, price, convenience, or all of the above?

Your chart may look tidy, but the insight underneath is wearing clown shoes.

Why & When Researchers Accidentally Use Them

This problem usually shows up when you are trying to save space.

Teams want fewer questions, faster completion rates, and a cleaner-looking survey, so they merge related ideas into one item.

It also happens when concepts feel naturally linked.

For example, “staff friendliness and professionalism” sounds like one neat package, but respondents may judge those traits differently.

Researchers also miss double-barreled wording when they are too close to the topic.

If you already think two ideas belong together, you may not notice that respondents need separate ways to answer.

On top of that, surveys built from old templates often inherit these issues.

One unclear question gets copied into another project, then another, and suddenly confusion has a long career.

Whatever the cause, the pattern is the same: a double-barreled question asks one answer to do the work of two.

5 Sample Double-Barreled Questions

How satisfied are you with our website speed and design?

Do you find our onboarding process clear and useful?

How would you rate your manager’s communication and leadership?

Was the event well organized and enjoyable?

How happy are you with the product’s price and durability?

Each one blends separate concepts into a single response.

That makes it impossible to know which part the respondent is evaluating.

A Better Way to Ask

The fix is wonderfully boring.

Split the question.

Ask one thing at a time, even if the topics feel closely related.

For example:

How satisfied are you with our website speed?

How satisfied are you with our website design?

How clear was the onboarding process?

How useful was the onboarding process?

You will use more lines in the survey.

But you will also get data that means something, which is a pretty good trade.

Pew Research Center notes that double-barreled questions force respondents to evaluate multiple concepts at once, making answers difficult to interpret accurately.

Loaded or Biased Survey Questions – The Emotional Trap

Definition & Impact

Loaded questions use emotional or one-sided wording that pressures people before they answer.

Unlike mild leading questions, loaded wording often carries judgment, fear, praise, blame, or moral weight.

That emotional charge can shape responses in powerful ways.

A question such as “Do you support the wasteful spending behind this policy?” does not invite neutral feedback.

It tells people how to feel first.

This category includes some of the most obvious bad survey examples because the bias sits right in the language.

These questions are especially risky in research tied to politics, social issues, public opinion, or fundraising.

People react strongly to words that imply harm, unfairness, selfishness, irresponsibility, or virtue.

If your survey uses that kind of framing, you are collecting reactions to wording as much as reactions to the topic itself.

And yes, wording really can hijack an otherwise serious study with the grace of a raccoon in a snack cupboard.

Why & When They Creep In

Loaded questions often show up when the survey has an agenda.

An advocacy group may want to persuade as much as measure.

A campaign team may want dramatic numbers for a report.

A fundraiser may want respondents to feel urgency or guilt.

In internal settings, a biased question can appear when leaders feel defensive.

If they want proof that employees support a change, they may describe alternatives in a negative way to protect the preferred decision.

Sometimes writers simply do not notice their own assumptions.

When a topic feels morally obvious to you, it becomes harder to write neutral wording.

That is why reviewing examples of biased survey questions is useful.

It trains your eye to catch emotional framing before it sneaks into the field.

5 Sample Loaded/Biased Questions

Do you support the company’s fair and responsible plan to reduce unnecessary staff costs?

Should the city stop wasting taxpayer money on this underused program?

How concerned are you about the dangerous effects of competitors’ low-quality products?

Do you agree that responsible parents should limit children’s screen time to under one hour daily?

Should donors continue helping this vital program instead of letting vulnerable families suffer?

These are examples of bad surveys in miniature.

The wording is not just descriptive.

It is persuasive.

A Better Way to Ask

To fix a loaded question, remove the emotional framing and name the topic plainly.

Try wording like:

Do you support or oppose the proposed staff reduction plan?

How do you view the city program’s value for public funding?

What is your opinion of children’s daily screen time limits?

Neutral phrasing may feel less exciting.

Good.

Your survey is not trying to win an argument.

It is trying to learn something.

Ambiguous & Vague Survey Questions – When Meaning Gets Muddy

Definition & Impact

Vague questions sound clear until you realize different people can interpret them in completely different ways.

That is the problem.

If respondents do not share the same understanding of a word, phrase, timeframe, or concept, their answers cannot be compared cleanly.

Take a question like “Do you use our service regularly?”

What counts as “regularly”?

Daily, weekly, monthly, or whenever Mercury is in retrograde?

This is why examples of ambiguous questions are so useful in survey training.

The danger is not that people refuse to answer.

The danger is that they answer confidently, but each person answers a different question in their head.

That creates inconsistent data that looks valid on the surface.

Vague wording also makes it harder to identify trends over time.

If one respondent thinks “often” means twice a week and another thinks it means twice a month, your percentages lose meaning fast.

Among the most common examples of bad survey questions, vague items are especially sneaky because they can sound perfectly normal in conversation.

Why & When They Occur

Ambiguity often comes from everyday language.

Writers use words like “usually,” “recently,” “high quality,” “easy,” or “affordable” because they sound natural, but those terms are subjective.

Jargon creates another problem.

Internal teams may write for themselves instead of for respondents, assuming everyone understands company terms, product labels, or technical language.

Poor translation can also introduce ambiguity.

A phrase that feels precise in one language may become fuzzy in another.

On top of that, surveys that skip pilot testing miss the chance to catch confusion before launch.

If nobody tries the survey in advance, vague wording gets a free pass.

In every case, the result is the same: a vague question leaves too much room for interpretation.

5 Sample Ambiguous/Vague Questions

How often do you use our platform regularly?

Was your recent experience with our team good?

Do you think our pricing is affordable?

Is the app easy to use?

How satisfied are you with the quality of our service lately?

Each question includes words that sound harmless but lack shared meaning.

That makes the answers unstable.

A Better Way to Ask

You can improve vague questions by defining terms, adding timeframes, and using concrete language.

For example:

In the past 30 days, how many times have you used our platform?

How would you rate your most recent interaction with our support team?

How easy or difficult was it to complete your task in the app today?

Specific wording helps respondents answer the same question, not five slightly different versions of it.

And that is how you turn fuzzy opinions into usable data.

Research on survey design shows that wording is critical to ensuring all respondents interpret a question the same way, which reduces ambiguity-driven measurement error.

Negatively Worded & Double-Negative Survey Questions – The Cognitive Speed-Bump

Definition & Impact

Negatively worded questions force respondents to slow down and mentally untangle what you mean.

That extra effort increases mistakes.

A question like “Do you disagree that the new policy is not helpful?” makes people pause, reread, and sometimes guess.

Double negatives are even worse.

They require respondents to decode the sentence before they can even decide on an opinion.

This raises error rates and contributes to survey fatigue.

When people feel mentally overloaded, they start clicking faster and thinking less carefully.

That creates noise in your results.

Among the more frustrating examples of bad test questions and survey items, negative wording is famous for making smart people feel like they suddenly forgot how language works.

If a respondent needs a grammar referee to answer your survey, something has gone wrong.

Why & When They Slip In

These questions often come from attempts to “balance” a scale.

Writers may include positive and negative statements to reduce response patterns, especially in academic or formal settings.

That goal sounds reasonable.

The problem is that negative wording does not just add variety.

It adds confusion.

Some teams also imitate language from older questionnaires or research instruments without adjusting it for plain readability.

Others use negative forms because they sound more formal or analytical.

Sadly, formal is not the same as clear.

Looking at examples of negatively worded questions helps because it shows how easily readable surveys can become mentally slippery.

Double negatives, in particular, tend to appear when someone edits a sentence halfway and forgets to rescue it.

5 Sample Negatively Worded/Double-Negative Questions

Do you disagree that our checkout process is not confusing?

I do not find the new dashboard unhelpful. How much do you agree?

Should employees not be allowed to avoid mandatory training?

Do you think it is uncommon for our support team to be unresponsive?

I am not dissatisfied with the speed of delivery. How strongly do you agree?

These are not clever.

They are traps.

And your respondents did not sign up for a grammar obstacle course.

A Better Way to Ask

Rewrite negative items into direct, positive language whenever possible.

For example:

How clear or confusing is our checkout process?

How helpful is the new dashboard?

Should mandatory training be required for employees?

Simple language lowers mental effort.

That means respondents spend their energy thinking about the topic, not untangling the sentence.

Unbalanced or Overlapping Scale Questions – Skewed Response Options

Definition & Impact

Bad response scales can bias answers even when the question itself is perfectly fine.

That is what makes this issue so sneaky.

An unbalanced scale gives more room to one side than the other, while an overlapping scale offers options that are not mutually exclusive, so some respondents fit more than one.

For example, a scale with “Excellent, Very Good, Good, Fair” has three positive choices and only one negative-ish option.

That nudges respondents toward favorable answers.

Overlapping options create a different problem.

If answer ranges include “1 to 5 years” and “5 to 10 years,” a person at exactly five years gets stuck choosing between two technically valid answers.

These are some of the most practical bad survey question examples because the flaw may not be in the wording at all.

It may be in the structure of the answers.

If response options are skewed or confusing, your final numbers reflect poor design rather than true opinion.

It is like using a crooked ruler and then acting surprised when the measurements look weird.
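One rough way to catch a lopsided scale before launch is to count how many options lean each way. The sketch below assumes a person has already labeled each option as favorable (+1), neutral (0), or unfavorable (-1). Those labels, and the function names, are illustrative assumptions, not part of any survey tool.

```python
from typing import Dict

def scale_balance(options: Dict[str, int]) -> Dict[str, int]:
    """Count favorable, neutral, and unfavorable options in a scale.

    `options` maps each label to a hand-assigned polarity:
    +1 favorable, 0 neutral, -1 unfavorable (an assumption made by the reviewer).
    """
    counts = {"positive": 0, "neutral": 0, "negative": 0}
    for polarity in options.values():
        if polarity > 0:
            counts["positive"] += 1
        elif polarity < 0:
            counts["negative"] += 1
        else:
            counts["neutral"] += 1
    return counts

def is_balanced(options: Dict[str, int]) -> bool:
    """A scale is treated as balanced when both sides have equally many options."""
    counts = scale_balance(options)
    return counts["positive"] == counts["negative"]

# The skewed scale from the text; "Fair" is labeled -1 as the "negative-ish" option.
skewed = {"Excellent": 1, "Very Good": 1, "Good": 1, "Fair": -1}

# A symmetric five-point satisfaction scale for comparison.
symmetric = {
    "Very dissatisfied": -1,
    "Dissatisfied": -1,
    "Neutral": 0,
    "Satisfied": 1,
    "Very satisfied": 1,
}

print(scale_balance(skewed), is_balanced(skewed))
print(scale_balance(symmetric), is_balanced(symmetric))
```

Counting options is only a first pass. It will not tell you whether the labels themselves are evenly spaced in meaning, so a human read-through still matters.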

Why & When They Arise

These issues often come from templates.

Someone copies an old survey, updates the topic, and never checks whether the scale is balanced or logically clean.

Lack of statistical training also plays a role.

A question writer may understand content well but not realize how scale design affects measurement.

Sometimes the team simply wants faster analysis.

Prebuilt choices feel efficient, so they get reused without much thought.

On top of that, overlapping options often appear when ranges are written quickly and nobody tests edge cases.

This is why overlapping answer options are common in forms built under pressure.

They are easy to miss until a respondent hits the awkward middle value and wonders if they need a coin flip.

5 Sample Unbalanced/Overlapping Questions

How would you rate our service: Excellent, Very Good, Good, Fair?

How long have you been a customer: 0 to 1 year, 1 to 3 years, 3 to 5 years, 5+ years?

How satisfied are you: Extremely satisfied, Very satisfied, Satisfied, Neutral?

What is your age: 18 to 25, 25 to 35, 35 to 45, 45+?

How many times did you visit last month: 0 to 2, 2 to 4, 4 to 6, 6 or more?

Each example creates bias or confusion through the answer options.

That means the question can fail even if the prompt looks innocent.

A Better Way to Ask

Use balanced scales with clear, non-overlapping options.

For instance:

Very dissatisfied, Dissatisfied, Neutral, Satisfied, Very satisfied

Up to 1 year, More than 1 to 3 years, More than 3 to 5 years, More than 5 years

18 to 24, 25 to 34, 35 to 44, 45+

The goal is simple.

Every respondent should know exactly where they fit, and every option should carry equal design weight.
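If your answer options are whole-number ranges, the edge cases can be checked mechanically. Here is a minimal sketch, assuming each range is an inclusive `(low, high)` pair and `None` marks an open-ended top range such as "45+". The function name and the data layout are assumptions for illustration.

```python
from typing import List, Optional, Tuple

# (low, high), both inclusive; high=None means open-ended, e.g. "45+"
Range = Tuple[int, Optional[int]]

def find_range_problems(ranges: List[Range]) -> List[str]:
    """Report overlaps and gaps between neighboring whole-number ranges."""
    problems: List[str] = []
    ordered = sorted(ranges, key=lambda r: r[0])
    for (low_a, high_a), (low_b, _high_b) in zip(ordered, ordered[1:]):
        if high_a is None:
            # An open-ended range swallows everything after it.
            problems.append(f"open-ended range starting at {low_a} is not last")
        elif low_b <= high_a:
            problems.append(
                f"overlap: {low_b} fits both {low_a}-{high_a} "
                f"and the range starting at {low_b}"
            )
        elif low_b > high_a + 1:
            problems.append(f"gap: nothing covers {high_a + 1} to {low_b - 1}")
    return problems

# The overlapping age brackets from the sample questions above.
bad_age = [(18, 25), (25, 35), (35, 45), (45, None)]

# The corrected brackets.
good_age = [(18, 24), (25, 34), (35, 44), (45, None)]

for problem in find_range_problems(bad_age):
    print(problem)
print(find_range_problems(good_age))
```

The overlapping brackets produce one warning per shared boundary (25, 35, and 45), while the corrected brackets produce none. The check only covers integers; ranges like tenure in years need wording such as "More than 1 to 3 years" to settle the boundary.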

Absolute Yes/No Questions – The “Always/Never” Problem

Definition & Impact

Absolute questions use words like “always,” “never,” “every,” or “only,” which force complex behavior into black-and-white answers.

That makes them risky.

Human behavior is full of exceptions, context, and annoying little details.

A question such as “Do you always read product labels before buying?” sounds straightforward, but many people do that sometimes, often, or only for certain products.

Yes or no cannot capture that nuance.

As a result, respondents may choose the “least wrong” answer rather than a truly accurate one.

This reduces precision and can make your audience seem more extreme or consistent than they really are.

Among bad survey questions (and bad test questions, for that matter), absolute wording is common because it looks efficient.

It promises neat categories.

But neat categories are not the same as truthful measurement.

And when a survey asks whether someone “never” does something, many respondents suddenly start remembering that one weird Tuesday when they absolutely did.

Why & When They Pop Up

Absolute yes or no questions often appear because they are easy to analyze.

You get tidy counts, clean percentages, and no need to wrestle with scales.

They also save space in short surveys.

If you have limited room, a simple binary format can feel appealing.

The trouble is that simplicity can erase reality.

These questions are especially common in screeners, quizzes, employee forms, and customer behavior surveys where teams want fast, decisive answers.

Sometimes they are borrowed from tests or checklists, which is why they overlap with examples of bad test questions in broader assessment design.

If the behavior or attitude exists on a spectrum, a strict yes or no item will usually flatten important differences.

5 Sample Absolute Questions

Do you always compare prices before making a purchase?

Have you never experienced issues with our mobile app?

Do you only shop online for groceries?

Do you always follow every update from our company?

Have you never skipped reading our terms and conditions?

These questions sound crisp.

But they ignore normal human variation.

A Better Way to Ask

Instead of forcing absolutes, use frequency-based or scaled responses.

For example:

How often do you compare prices before making a purchase?

How often have you experienced issues with our mobile app?

Which best describes how you shop for groceries?

This gives respondents room to answer honestly.

And honest data beats dramatic data every time.

Dos and Don’ts: Transforming Bad Survey Questions into High-Quality Data

Universal Best Practices

The best survey questions are clear, neutral, specific, and easy to answer without mental gymnastics.

That sounds simple because it is simple.

The hard part is maintaining that standard when deadlines, opinions, and old templates start crowding your draft.

Pilot testing is one of the strongest defenses against bad survey questions.

Even a small test group can reveal where respondents hesitate, misread, or interpret terms differently.

Neutral wording matters just as much.

If your sentence sounds like it is trying to sell, defend, accuse, flatter, or corner the respondent, rewrite it.

Single-concept questions are essential too.

One item should measure one idea.

Balanced scales round out the basics by making response options fair, complete, and easy to understand.

If you are comparing examples of good and bad research questions, these are the habits that usually separate them.

Good survey design is not about sounding smart.

It is about making sure your respondents never have to guess what you meant.

Quick Do and Don’t List for Each Pitfall

Here is a practical checklist you can use when reviewing examples of bad survey questions or rewriting your own draft.

Leading questions

- Do use neutral wording that does not hint at the desired answer.

- Don’t describe your product, service, or policy with praise inside the question.

Double-barreled questions

- Do ask about one concept at a time.

- Don’t combine related topics just to save space.

Loaded or biased questions

- Do state the issue plainly and evenly.

- Don’t use emotional, moral, or politically charged wording to push a response.

Ambiguous or vague questions

- Do define timeframes and use concrete terms.

- Don’t rely on fuzzy words like “often,” “affordable,” or “good” without context.

Negatively worded questions

- Do write direct, positive statements when possible.

- Don’t make respondents decode layers of negation.

Unbalanced or overlapping scales

- Do create response options that are symmetrical and non-overlapping.

- Don’t leave edge cases hanging between two answers.

Absolute yes or no questions

- Do use frequency scales when behavior varies.

- Don’t force “always” and “never” when real life is messier.
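Parts of this checklist can be turned into a rough automated first pass. The sketch below is a simple keyword heuristic, not a real bias detector. The word lists are illustrative assumptions drawn from the examples in this article, and anything it flags still needs a human to decide.

```python
import re
from typing import List

# Illustrative word lists (assumptions); extend them for your own surveys.
ABSOLUTES = {"always", "never", "every", "only"}
VAGUE = {"regularly", "recently", "lately", "usually", "affordable", "good"}
NEGATIONS = {"not", "never", "no", "disagree"}

def lint_question(text: str) -> List[str]:
    """Return heuristic warnings for a single survey question."""
    words = re.findall(r"[a-z']+", text.lower())
    warnings: List[str] = []

    # "and" often joins two concepts into one question.
    if "and" in words:
        warnings.append("possible double-barreled question ('and')")

    absolute_hits = sorted(ABSOLUTES.intersection(words))
    if absolute_hits:
        warnings.append("absolute wording: " + ", ".join(absolute_hits))

    vague_hits = sorted(VAGUE.intersection(words))
    if vague_hits:
        warnings.append("vague wording: " + ", ".join(vague_hits))

    # Two or more negation words suggest a double negative.
    if sum(word in NEGATIONS for word in words) >= 2:
        warnings.append("multiple negations")

    return warnings

draft = [
    "How satisfied are you with our website speed and design?",
    "Do you always compare prices before making a purchase?",
    "Do you disagree that our checkout process is not confusing?",
    "In the past 30 days, how many times have you used our platform?",
]
for question in draft:
    print(question, "->", lint_question(question))
```

Expect false positives. A phrase like "terms and conditions" will trip the "and" check, and the script cannot see praise, emotional framing, or skewed answer scales, so treat it as a prompt for review, not a verdict.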

Final Thought on Better Survey Design

If you want better data, your review process has to be ruthless in a friendly way.

Read each item out loud.

Ask what assumption it makes, what concept it measures, and whether two respondents would interpret it the same way.

That habit alone can eliminate a surprising number of bad questions before they ever reach your audience.

Good surveys are not magical.

They are just carefully edited.

Best Practices & Dos and Don’ts for Avoiding Bad Survey Questions

When you want data you can trust, these best practices help you dodge common survey pitfalls. Here’s a quick-scan checklist you can keep handy for every survey project.

Dos

Always pilot test your survey with a small sample before full launch.

Use plain language and keep sentences short.

Provide balanced answer scales—let respondents choose from clear, logically ordered options.

Ensure your answer sets are mutually exclusive and collectively exhaustive (MECE).

Design with a respondent-first mindset—ask, “Would I find this question easy and comfortable to answer?”

Don’ts

Don’t lead with bias or insert opinions into your questions.

Don’t combine multiple ideas into one double-barreled question.

Avoid jargon, acronyms, or technical language that might confuse people.

Never use double negatives or complicated logic structures.

Don’t pry into sensitive topics without offering an opt-out or explaining why you need the data.

Great survey writers aren’t born—they’re made through continual learning, regular A/B testing of wording, and inviting outside experts to review your drafts. Every question is an opportunity to learn and refine your approach. Trust in good survey design, and your data (and business decisions) will thank you.
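If you do A/B test two wordings, you need some way to tell a real difference from noise. One common option is a two-proportion z-test, sketched below using only the standard library. The counts in the example are hypothetical, made up purely to show the mechanics, and the function name is an assumption.

```python
import math
from typing import Tuple

def two_proportion_z_test(
    yes_a: int, n_a: int, yes_b: int, n_b: int
) -> Tuple[float, float]:
    """Compare the share of 'yes' answers under two question wordings.

    Returns (z, two-sided p-value) using the pooled-proportion z-test.
    """
    p_a = yes_a / n_a
    p_b = yes_b / n_b
    pooled = (yes_a + yes_b) / (n_a + n_b)
    standard_error = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if standard_error == 0:
        # Everyone answered the same way in both groups; no difference to test.
        return 0.0, 1.0
    z = (p_a - p_b) / standard_error
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value

# Hypothetical counts: 160 of 200 favorable with wording A,
# 130 of 200 favorable with wording B.
z, p_value = two_proportion_z_test(160, 200, 130, 200)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value suggests the wording itself is moving the answers, which is exactly what you want to know before picking one version. The test assumes respondents were randomly assigned to a wording and that each group is reasonably large.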

