29 Bad Survey Questions: Examples You Should Avoid

Discover examples of bad survey questions, with expert tips on what makes them ineffective. Improve your surveys by avoiding these pitfalls.

Ever wonder why your survey results just don’t add up?

Sometimes the problem isn’t the audience; it’s the questions.

Bad survey questions sneak into even your best forms: biased wording, double negatives, and ambiguous “what do you mean?” moments.

These bad apples confuse, mislead, and annoy respondents, which lowers response rates and skews your data.

People search for terms like “biased survey questions,” “ambiguous questions examples,” and “double barreled question example” because everyone has run into these pitfalls.

Once you know the types, you can spot them quickly and fix each one with a few simple changes, especially if you use a reliable online survey tool.

Consider this guide your roadmap.

Leading & Biased Questions

The Lure of a Lean

Biased questions slip in when you quietly tip the scales in your favor. You see them all the time in customer satisfaction surveys and political polls, anywhere the author really wants people to agree.

Sometimes you lean the question without realizing it. Other times, you give it a not-so-subtle shove and your data ends up as skewed as a funhouse mirror.

These questions nudge, coach, or practically beg people to answer a certain way. That is like asking your best friend if your new haircut is “amazing” while you are still sitting in the stylist’s chair.

Here are five classic biased survey questions that show what not to do:

Don’t you agree our new menu is delicious?

How satisfied are you with our excellent support team?

Most experts think recycling is essential; do you?

Wouldn’t you say our prices are affordable?

How strongly do you support the popular new policy?

What’s going wrong here?

The wording tries to tell the respondent what’s “correct.”

Your answers get warped by the question, not the truth.

Decision making becomes a guessing game.

You can fix these in a snap by swapping leading phrases for neutral language:

“How would you rate our new menu?”

“How satisfied are you with our support team?”

“What are your thoughts about recycling?”

“How would you describe our prices?”

“What is your opinion of the new policy?”

When you spot a biased question, rewrite it with fresh eyes and honest curiosity. Your insights will improve, and your data analysts might finally stop side-eyeing your survey results. For more on survey question mistakes, explore common pitfalls to avoid for better data.

A behavioral-science study found that merely framing a question in conflict with common sense (“backward wording”) can increase inaccurate responses by over 40% (mm-ais.com).


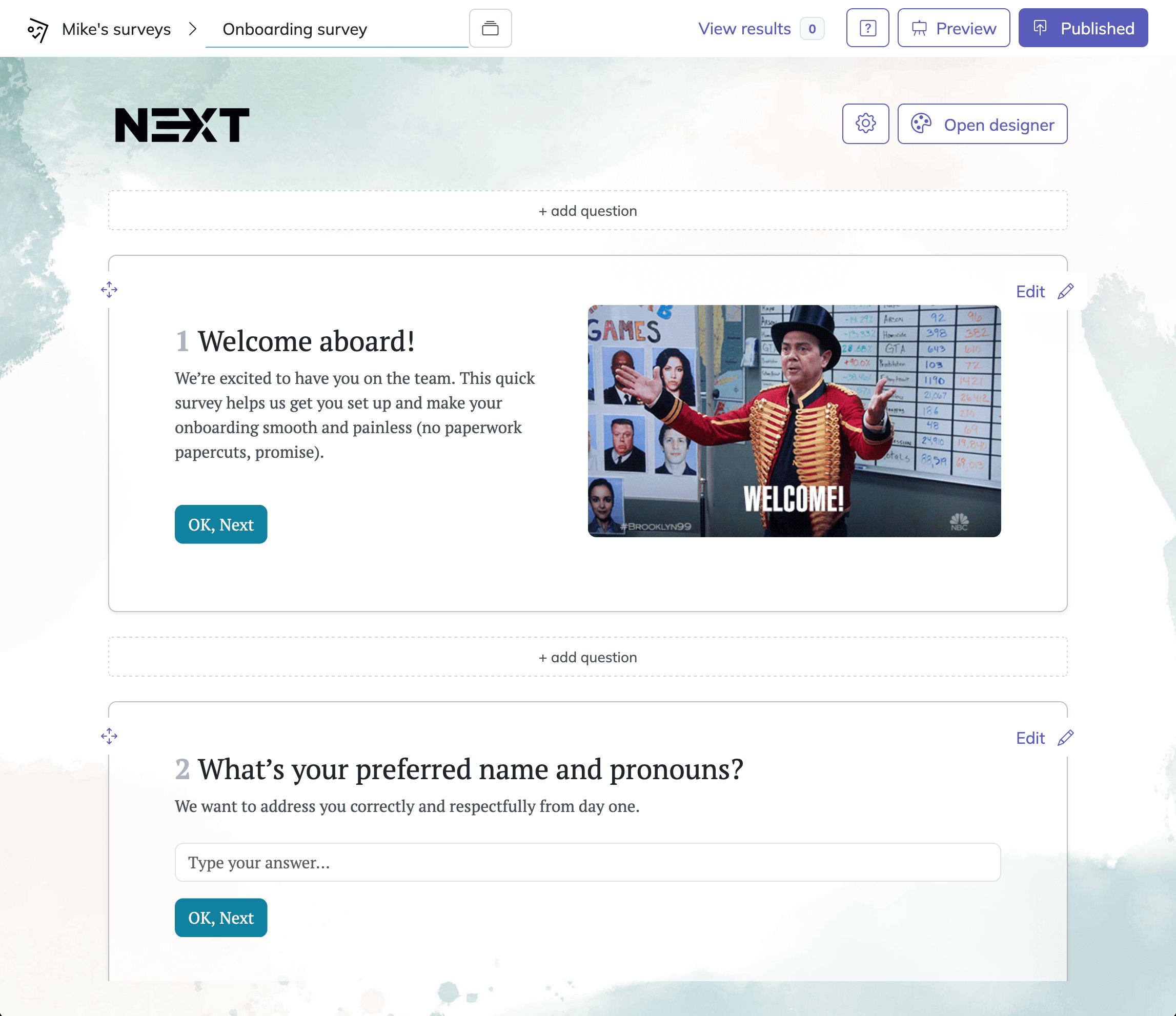

How to Create Your Survey with HeySurvey in 3 Easy Steps

Ready to build your survey? Just follow these simple steps to get started, even if you’re new to HeySurvey:

1. Create a New Survey

Start by clicking the Use This Template button below these instructions. This will instantly open a pre-made survey template in the Survey Editor. If you’d like to start from scratch, you can also select “Create New Survey” from your dashboard instead.

2. Add and Customize Your Questions

Now you’re in the Survey Editor, where you can see the questions already included in your chosen template. Add new questions by clicking the Add Question button and choosing a question type such as Multiple Choice, Scale, or Text. For each question, click to edit the wording, set whether it’s required, and use the formatting options to personalize instructions or answer choices. You can also replace questions, rearrange their order, or remove anything that doesn’t fit your needs.

3. Publish and Share Your Survey

Once you’re happy with your questions and layout, click Preview to see how your survey will look to respondents. If everything looks good, hit the Publish button. You’ll be prompted to sign up if you haven’t already. Once published, you’ll receive a shareable link—ready to send to your audience!

Bonus Tips

- Apply Your Branding: Upload your logo or adjust survey colors using the Designer Sidebar to match your brand.

- Change Survey Settings: Set response limits, add a closing date, or configure a custom thank-you page in the Settings Panel.

- Add Logic or Branching: For more advanced surveys, direct respondents down custom paths based on their answers by setting up branching options.

You’re set! Click below to start your survey and explore the free survey software features as you go.

Loaded or Emotionally Charged Questions

When Surveys Get a Bit Too Dramatic

Ever feel like a question is already judging you before you even answer? That’s the trick loaded questions play: they sneak hidden opinions and strong emotional cues into the wording.

Survey writers often drop these loaded bombs into advocacy campaigns and hot-topic polls. Once people sense there’s a “right” answer, the truth quietly gets up and walks away.

These five loaded questions make things awkward:

How terrible do you feel about tax hikes?

Do you support cruel animal testing?

Should irresponsible parents get more benefits?

How angry are you about government waste?

Do you favor unjust war policies?

Here’s the thing:

Words like "terrible," "cruel," and "unjust" push your brain into a corner.

You can’t win; every response feels loaded.

Your data becomes more emotional than logical.

The good news: you can fix this quickly.

Replace judgmental words with neutral terms.

Focus on fact, not feeling.
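A quick word-list check can flag candidates for this swap before a human reviewer takes a second look. The sketch below is a minimal Python example; `find_loaded_words` and `LOADED_WORDS` are hypothetical names, and the word list is only a starter set drawn from the five examples in this section, not a complete dictionary of loaded language. It flags words for review; it cannot judge tone on its own.

```python
import re

# Hypothetical starter list taken from the examples in this section;
# extend it with the charged terms common in your own topic area.
LOADED_WORDS = {"terrible", "cruel", "irresponsible", "angry", "unjust"}


def find_loaded_words(question: str, loaded: set[str] = LOADED_WORDS) -> list[str]:
    """Return the loaded words found in a question, in order of appearance."""
    # Lowercase and split into words so punctuation such as "?" is ignored
    words = re.findall(r"[a-z']+", question.lower())
    return [word for word in words if word in loaded]
```

For example, `find_loaded_words("Do you support cruel animal testing?")` returns `['cruel']`, while the neutral rewrite returns an empty list.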

Let’s calm things down:

“What is your opinion about recent tax increases?”

“Do you support animal testing?”

“Should parents receive more benefits?”

“What are your thoughts on current government spending?”

“Do you support current military policies?”

If you want honesty in your results, you need questions that avoid emotional baggage and let people answer without feeling pushed. See survey question mistakes for more ways poorly worded questions affect your outcomes.

Emotionally loaded wording in survey questions can increase response distribution bias by about 31%, significantly distorting the data collected (source).

Double-Barreled Questions

Two for the Price of One (But It’s a Bad Deal)

Everyone loves a shortcut, except when it comes to writing questions. A double-barreled question tries to cover too much ground at once: when you mash two ideas into one question, you double the confusion and cut your accuracy in half.

Double-barreled questions are among the most common bad survey questions you will run into. You can spot them whenever someone tries to save space by packing two questions into one, which saves room on the form but gives your respondents a headache.

Don’t trip on these double-barreled traps:

How satisfied are you with our customer service and delivery speed?

Rate the taste and nutritional value of the snack.

How effective and affordable is the software?

Was the website informative and easy to navigate?

How happy are you with your workload and compensation?

Here’s the thing:

Respondents want to answer honestly, but they get stuck if they like one part and dislike the other.

Their answer waters down your insight.

Your data turns into a guessing game.

What’s the fix?

- Always split double-barreled questions in half.

Like this:

“How satisfied are you with our customer service?”

“How satisfied are you with our delivery speed?”

When you separate each concept, you get clearer, more useful answers.

Do not let a double-barreled question slip through just because it makes your form look shorter. The best surveys are straightforward, and your future self will thank you for every clean question you write.

Double-Negative Questions

Dodge the Verbal Gymnastics

Double negative survey questions are legendary for tripping people up.

You might see them in legal or policy-heavy surveys that try to be precise, but they usually just confuse people, and every reread costs you trust and clarity.

Double negatives aren’t just wordy; they can flip the meaning upside down for both you and your respondent.

Ready for a headache? Here are five double negative survey questions:

Do you disagree that our policy shouldn’t change?

Is it not uncommon for you to miss payments?

Do you not oppose lowering fees?

Would you be upset if we didn’t discontinue the feature?

Isn’t it unlikely you wouldn’t renew?

See the issue?

Respondents basically need a notepad and pencil to keep track.

You end up with answers that reflect confusion, not clarity.

Worse, every twist raises the chance that someone abandons your survey entirely.

To fix:

Strip out every double negative.

Say exactly what you mean.

Clear rewrites:

“Do you think our policy should change?”

“How often do you miss payments?”

“Do you support lowering fees?”

“Would you like us to discontinue the feature?”

“Do you plan to renew?”

Keep it simple.

If you ask a question and stumble over the negatives, your respondents will trip too, and nobody gets points for verbal obstacle courses.

Respondents confronted with double-negative questions show significantly lower reliability: Cronbach’s alpha drops from 0.66 for negatively worded items to 0.26 for double-negative items, indicating serious confusion and degraded data quality (core.ac.uk). If you want to write good survey questions, avoid double negatives and keep your language straightforward.
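If you pilot a multi-item scale, you can compute this reliability statistic yourself. Cronbach’s alpha is k/(k - 1) × (1 - sum of item variances / variance of total scores), where k is the number of items. The sketch below is a minimal Python version, assuming every respondent answered every item with a numeric score; `cronbach_alpha` is a hypothetical helper name, not part of any particular survey tool.

```python
from statistics import pvariance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for a respondents-by-items table of numeric scores.

    Each inner list holds one respondent's scores on the same k items.
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Variance of each item (column) across all respondents
    item_variances = [pvariance([row[i] for row in responses]) for i in range(k)]
    # Variance of each respondent's total score across the items
    total_variance = pvariance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores do not vary; alpha is undefined")
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)
```

Population and sample variances give the same result here, because the n/(n - 1) correction cancels out in the ratio. Values closer to 1 mean the items move together; a low value like 0.26 suggests respondents are not reading the items consistently.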

Ambiguous or Vague Questions

When “Regularly” Means Anything

Vague or ambiguous questions make you and your respondents guess.

The problem with ambiguous questions is that words like “regularly” or “satisfied” mean different things to different people: what feels “quick” to you may feel “slow” to someone else.

These questions often sneak into quick polls where you just want to fire and forget.

Instead, you get responses that mean nothing, which is about as useful as a survey with no answers at all.

Take a look at these classic bad survey examples:

Do you use our product regularly? (What does “regularly” mean?)

How was your experience? (Which part?)

Was the process quick? (Compared to what?)

Are you satisfied with pricing? (Which plan?)

Did the webinar help you? (Help how?)

Here’s why that hurts:

Unclear timeframes, undefined terms, and missing context make it impossible to compare answers.

Your data loses its reliability.

The fix is simple and you can use it right away:

Add clear definitions, examples, or timeframes to every question.

Split vague questions into specific, targeted ones.

Try these upgrades so your results actually mean something:

“How often did you use our product in the last month?”

“How would you rate your checkout experience?”

“How long did the process take from start to finish?”

“Which pricing plan are you most satisfied with?”

“Did the webinar help you improve your productivity?”

Details turn guesswork into real insight.

Be specific, because your data deserves nothing less.

Non-Exhaustive or Non-Mutually Exclusive Answer Choices

The Overlap Trap

A sneaky form of survey bias shows up when your answer ranges are unclear or incomplete.

Sometimes a question looks fine until you see the answer choices and realize that nothing fits or that several options overlap.

If you are building demographic or frequency questions, you need options that are exhaustive so they cover everyone and mutually exclusive so they never overlap.

Miss this, and your perfect question quietly turns into a data mess.

Here are some textbook mistakes, with bad survey question examples you might recognize in the wild:

What is your age? 18-25, 25-35 (age 25 fits both)

What is your income? <$50k, $50k-$100k, >$100k (no option to “prefer not to say”)

How many people in your household? 1-2, 2-4, 4-6 (overlap at 2 and 4)

What device did you use? Laptop, Tablet, Phone (no “Desktop” or “Other”)

How often do you exercise? Never, Sometimes, Often (no definitions or examples)

The fix is simple if you slow down for a quick check:

Check every answer set for gaps, overlaps, and missing choices.

Always add an “Other” or “Prefer not to say” when it makes sense.

Make sure someone can pick exactly one option every time.

Upgrade your answer sets this way, and every question you write becomes a goldmine of clean, clear data instead of a confusing puzzle.
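For numeric answer sets such as age or household size, the gap-and-overlap check can be automated. The Python sketch below assumes whole-number answers and options written as inclusive (start, end) pairs; `check_ranges` is a hypothetical helper, and it only covers numeric ranges, not category lists like device types.

```python
def _span(a: int, b: int) -> str:
    """Format a range compactly: "25" for a single value, "7-10" otherwise."""
    return str(a) if a == b else f"{a}-{b}"


def check_ranges(options: list[tuple[int, int]], low: int, high: int) -> list[str]:
    """Report overlaps and gaps in inclusive whole-number answer ranges.

    options: answer choices as (start, end) pairs, both ends inclusive.
    low, high: the full span of answers the choices are meant to cover.
    """
    if not options:
        return [f"gap: {_span(low, high)}"]
    problems = []
    ordered = sorted(options)
    # Values below the first option are not covered by any choice
    if ordered[0][0] > low:
        problems.append(f"gap: {_span(low, ordered[0][0] - 1)}")
    covered_to = ordered[0][1]  # highest value covered so far
    for start, end in ordered[1:]:
        if start <= covered_to:
            # This option starts inside values an earlier option already covers
            problems.append(f"overlap: {_span(start, min(end, covered_to))}")
        elif start > covered_to + 1:
            # Nothing covers the values between the earlier options and this one
            problems.append(f"gap: {_span(covered_to + 1, start - 1)}")
        covered_to = max(covered_to, end)
    # Values above the last option are not covered by any choice
    if covered_to < high:
        problems.append(f"gap: {_span(covered_to + 1, high)}")
    return problems
```

Running it on the age example from this section, `check_ranges([(18, 25), (25, 35)], 18, 35)`, returns `['overlap: 25']`. Tracking the highest covered value, rather than comparing only neighboring options, keeps a wide option from hiding gaps or overlaps inside it.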

Unbalanced Scale Questions

When Scales Tip the Wrong Way

Ever fill out a survey that feels like it is nudging you to be extra positive? That is an unbalanced scale in action, and it shows up when you cram more answers on one side of the scale than the other.

Here's the thing: you might be eager to show customer delight, but if your scale is lopsided, you are really just stacking the deck.

Here’s a parade of common offenders:

Excellent, Very Good, Good, Fair, Poor (only one negative)

Ecstatic, Happy, Neutral, Slightly Unhappy

Perfect, Great, Acceptable, Bad

Lightning Fast, Fast, Average, Slow

Very Fair, Fair, Somewhat Unfair, Unfair

In these cases:

Respondents may default to the majority “positive” answers.

Your results get skewed.

Customers who have issues may pick the closest so-so answer, or just skip your survey.

So how do you fix it?

Always balance the positives and negatives.

Use clear, evenly weighted scales like “Very Satisfied, Satisfied, Neutral, Dissatisfied, Very Dissatisfied”.

Balanced answer choices give you a clearer look at what is really happening, no happy-face filters required.
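Counting the options on each side of the midpoint is a quick first check for a lopsided scale. In the hypothetical Python helpers below, you tag each label yourself as positive (+1), neutral (0), or negative (-1); the tags are a judgment call, and a pure count will not catch mismatched intensity, such as “Very Fair” paired with a plain “Unfair.”

```python
def scale_balance(options: list[tuple[str, int]]) -> tuple[int, int]:
    """Count positive and negative options on a rating scale.

    Each option is (label, direction): +1 positive, 0 neutral, -1 negative.
    """
    positives = sum(1 for _, direction in options if direction > 0)
    negatives = sum(1 for _, direction in options if direction < 0)
    return positives, negatives


def is_balanced(options: list[tuple[str, int]]) -> bool:
    """Treat a scale as balanced when both sides have the same number of options."""
    positives, negatives = scale_balance(options)
    return positives == negatives


# Direction tags are the survey author's own judgment
lopsided = [("Excellent", 1), ("Very Good", 1), ("Good", 1), ("Fair", 0), ("Poor", -1)]
balanced = [
    ("Very Satisfied", 1),
    ("Satisfied", 1),
    ("Neutral", 0),
    ("Dissatisfied", -1),
    ("Very Dissatisfied", -1),
]
```

With the tags shown, the first scale counts three positives against one negative, while the five-point satisfaction scale counts two on each side.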

Social-Desirability Bias Questions

The Urge to Look Good

You probably do not love admitting your bad habits, especially in a survey. Biased questions that trigger social-desirability bias take full advantage of that, and suddenly everyone looks like a superhero in yoga pants.

These bad survey questions usually show up around sensitive topics like health, money, or eco-habits. When people want to look good, you get polished fantasy instead of messy fact.

Spot this bias in these sample questions:

How often do you exercise every day?

Do you always recycle?

How much junk food do you avoid each week?

Do you regularly donate to charity?

Do you never text while driving?

See what’s happening?

You’re making it tough for anyone to admit to less-perfect behaviors.

Honest answers get replaced by what sounds best.

Your study turns into a self-improvement book.

Here’s the thing:

Use neutral, frequency-based questions that make it okay to be real.

Add anonymous options or “sometimes” ranges.

For example:

“How many days a week do you exercise?”

“How often do you recycle: Always, Often, Sometimes, Rarely, Never?”

“How many times a week do you eat junk food?”

If you want real data, you need to make it feel safe for people to tell the truth. The truth may sting, but it is incredibly valuable.

Best Practices: Dos and Don’ts to Eliminate Bad Survey Questions

Bad survey questions are everywhere, but you can fix them quickly if you know what to watch for. Keep things clear, neutral, balanced, and respectful so you get actual insight, not confusion.

Here’s your quick-reference list for writing great, bias-free questions.

Do: Focus on clarity and fairness

Keep wording neutral and free from assumptions.

Split up double-barreled questions into single, focused items.

Balance positive and negative choices in scales.

Define all ambiguous terms, time frames, or contexts.

Make answer options non-overlapping, exhaustive, and inclusive (“Other,” “Prefer not to say”).

Test your survey with a small group before launching.

Offer “I don’t know” or “Not applicable” as needed.

Don’t: Accidentally push people toward bad data

Lead respondents or force a “correct” response.

Use emotionally charged or loaded words.

Overlap answer categories or skip "Other" choices.

Confuse with double negatives or legalese.

Make assumptions about the habits or motives of those taking your survey.

Plus: Level up how you test and review your survey

A/B test tough questions when you’re unsure.

Ask a peer or expert to review your draft.

Make sure your survey looks just as friendly on a screen reader or phone as it does on your desktop.

Keep this checklist close next time you build a survey, and you’ll dodge every trap from biased survey questions to ambiguous questions examples.

If you want data you can trust, write questions your audience trusts, then check and double-check them like a detective on a good mystery. And do not be afraid to run a pilot round so your results turn out smarter, sharper, and free of the usual survey slip-ups.
