31 Post Program Survey Questions

Explore post program survey questions with sample questions to improve feedback collection, measure outcomes, and refine your program strategy.

You ran the program. Now you need to know what actually landed.

Post program survey questions help you measure participant satisfaction, learning, engagement, and overall impact so you can see what worked and what needs a tune-up. This guide walks you through the main types of post program survey questions, when to use each one, sample questions you can borrow, and how to turn responses into smarter improvements with an online survey tool. Because guessing is brave, but data is usually better.

Satisfaction Survey Questions

Sample questions

How satisfied were you with the program overall?

To what extent did the program meet your expectations?

How likely are you to recommend this program to others?

Which part of the program did you find most valuable?

What, if anything, disappointed you about the program?

A quick satisfaction check can reveal a lot.

Why & When to Use

Use satisfaction survey questions right after a program ends to find out how people felt about the experience as a whole.

They work especially well for workshops, training programs, coaching cohorts, nonprofit initiatives, employee development programs, and educational events.

Here’s the thing: these questions help you spot whether the program met expectations and where the experience may have fallen a little flat.

Send them within 24 to 48 hours after completion for the best response quality.

That timing matters because details are still fresh, and your participants are less likely to answer with the fuzzy accuracy of "I think it was good... probably."

A smart satisfaction survey mixes question types:

Use rating-scale questions to measure trends quickly.

Use open-ended questions to uncover specifics, surprises, and pain points.

Use both together to see not just how people felt, but why they felt that way.

That said, satisfaction data is useful, but it is not the whole story.

Someone can love a program, enjoy the facilitator, and still walk away without much lasting learning or behavior change.

Still, satisfaction questions give you an early read on participant experience, which makes them a great starting point before you dig into learning, outcomes, and long-term impact.

Post-program satisfaction surveys capture participant reactions, but research using Kirkpatrick’s model shows satisfaction alone cannot measure learning, behavior change, or program impact (source).

How to create a post program survey in HeySurvey

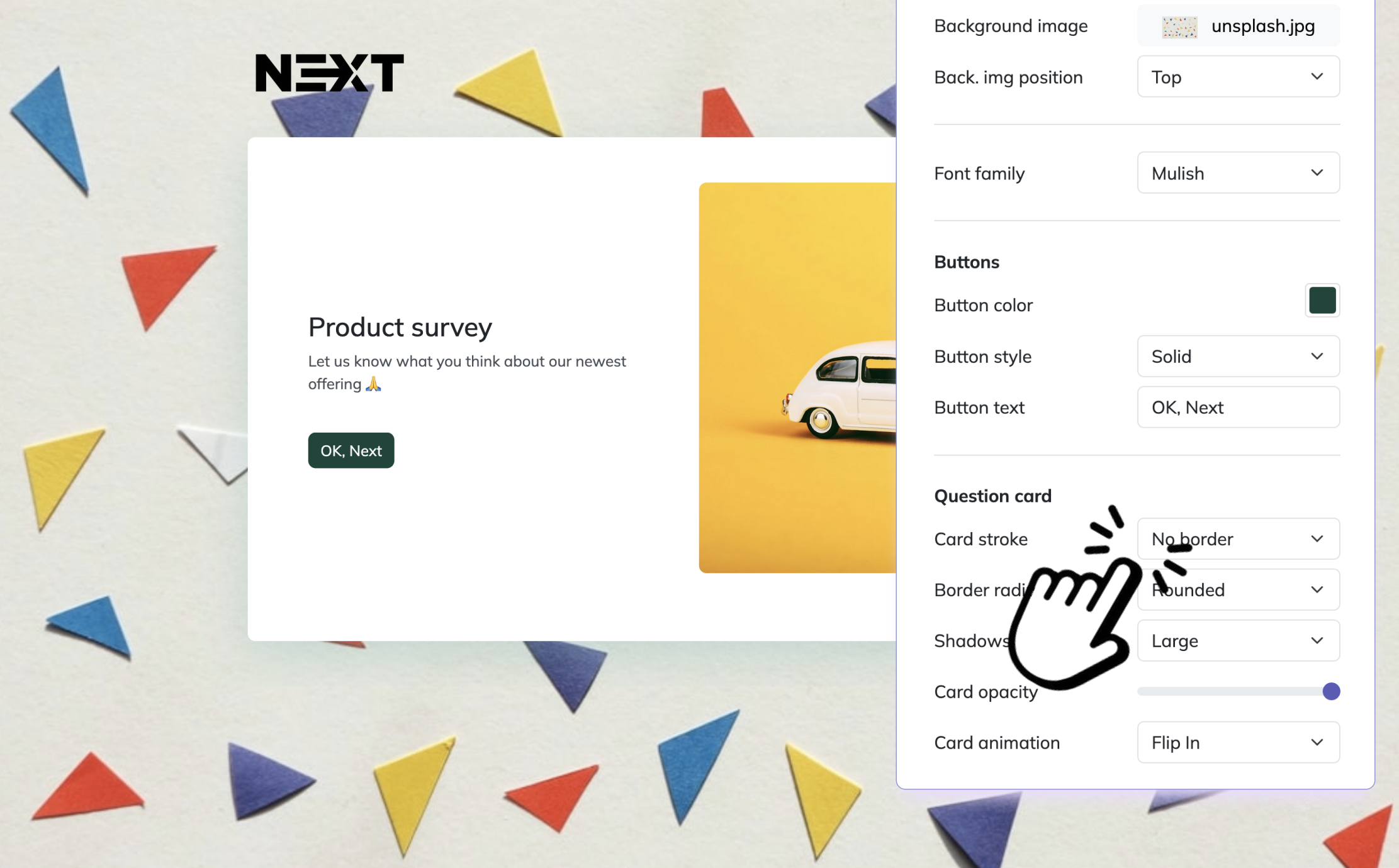

1. Create a new survey

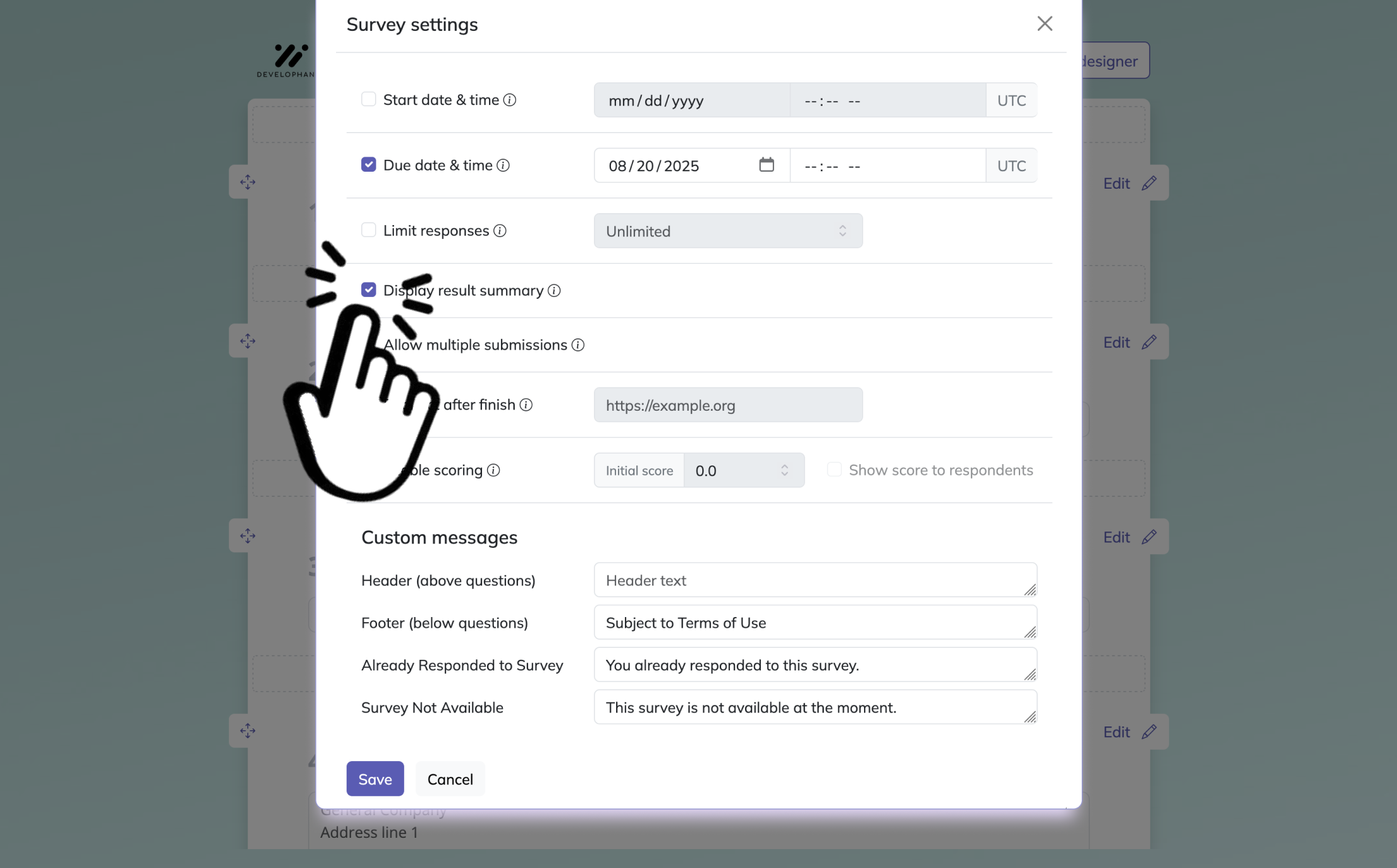

Start by opening HeySurvey and choosing a template or starting from an empty sheet. For a post program survey, a template can save time, and you can open it with the button below this guide. After the survey opens, give it a clear internal name so you can find it later. If you want, you can also add your logo and adjust basic settings such as dates or response limits.

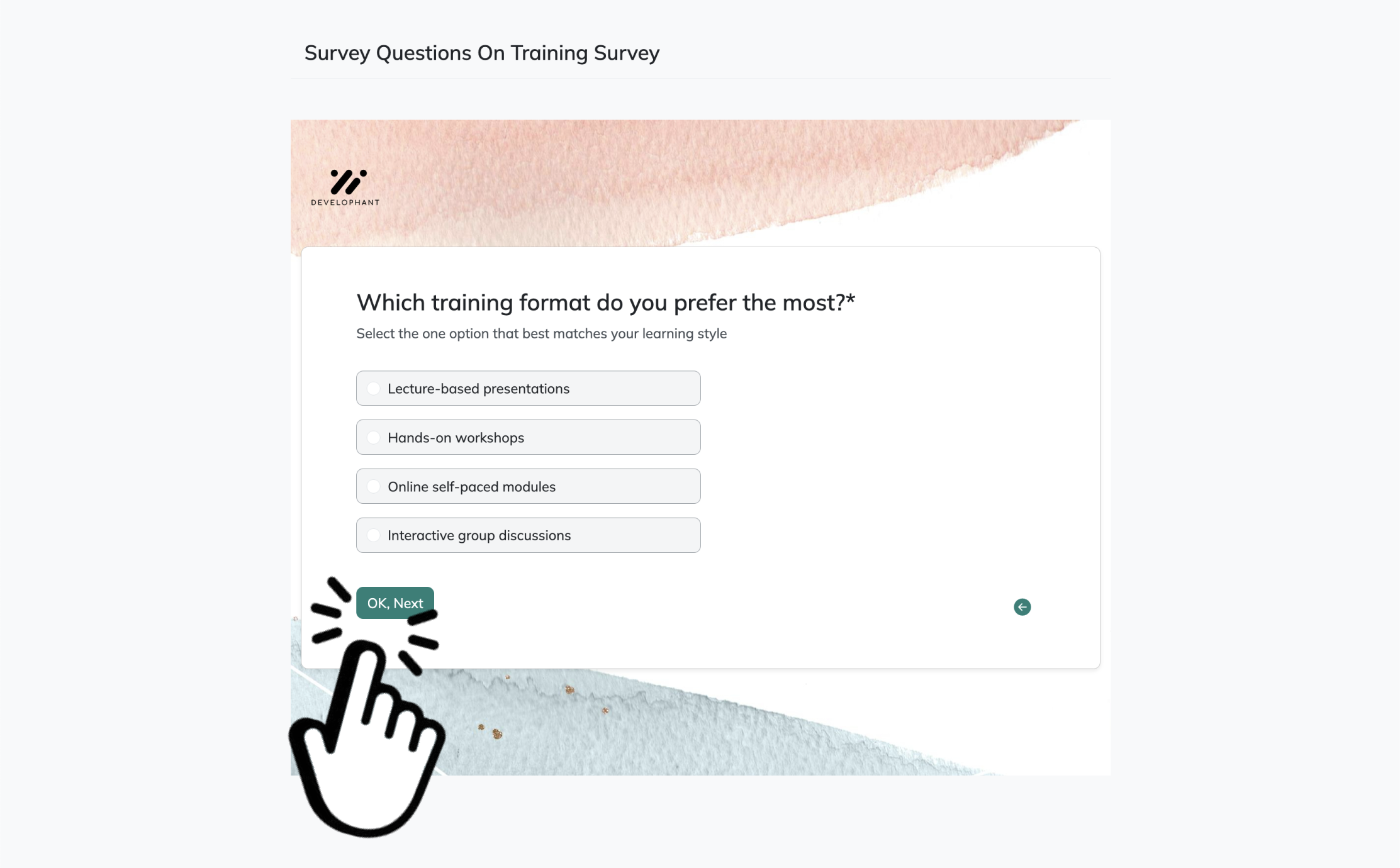

2. Add questions

Click Add Question to build your survey. Use choice or scale questions for ratings, satisfaction, and multiple-choice feedback. Add text questions for open comments, suggestions, or follow-up ideas. You can mark important questions as required and reorder them anytime.

3. Publish your survey

Before sharing, click Preview to check how it looks on desktop or mobile. When everything is ready, click Publish to create your shareable link. You can then send the survey to program participants and start collecting responses.

Learning Outcomes Survey Questions

Sample questions

How much did your knowledge of the topic improve during the program?

Which new skills or concepts did you gain from the program?

How confident do you feel applying what you learned?

Which topic do you feel you understand best after completing the program?

Were there any concepts that remained unclear?

Learning outcomes questions show what actually stuck.

Why & When to Use

Use learning outcomes survey questions when you want to understand what participants learned, retained, or understood by the end of a program.

They are especially useful for training, onboarding, education, certification, mentorship, and skill-building programs.

Here’s the thing: a program can feel great and still leave people confused about the core material.

That is why this survey type helps you measure self-reported learning and perceived confidence, not just overall enjoyment.

It also works best when your questions match the program’s stated goals.

If your objective was to teach a process, build a skill, or improve understanding of a topic, your survey should ask directly about those outcomes.

A strong learning outcomes survey can help you spot issues like:

unclear lessons or weak explanations

rushed pacing that left people behind

curriculum gaps between what was taught and what was expected

facilitator delivery that clicked for some people but not others

Plus, these responses give program managers a clearer view of content effectiveness and instructional clarity.

On top of that, they can highlight where learners need reinforcement, which is much better than discovering confusion later when the stakes are higher and the coffee is gone.

Post-program learning surveys should measure perceived gains in knowledge, skills, and confidence because Level 2 of Kirkpatrick’s model evaluates whether participants actually learned from training (source).

Engagement and Participation Survey Questions

Sample questions

How engaged did you feel throughout the program?

Which activities or sessions kept you most involved?

Did you feel encouraged to participate and ask questions?

What made it easier or harder for you to stay engaged?

How would you rate the pace and structure of the program?

Engagement questions reveal what pulled people in, and what quietly pushed them out.

Why & When to Use

Use engagement and participation survey questions when you want to understand how actively people took part in sessions, activities, materials, and discussions.

They work especially well for multi-session programs, cohort-based learning, volunteer programs, online courses, and hybrid events.

Here’s the thing: engagement is not just a nice bonus.

It often shapes completion rates, satisfaction, and overall outcomes, so if attention drops, results usually wobble right behind it.

These questions help you look at both behavioral engagement and emotional engagement.

That means you are not only asking whether people showed up or joined in, but also whether they felt interested, included, and motivated to participate.

A strong survey in this category can uncover barriers like:

session timing that clashed with real life

heavy workloads that drained energy

tech issues that made participation annoying

facilitation styles that felt unclear or uninviting

pacing or structure that made it hard to stay involved

Plus, it helps you identify the sessions, formats, or activities that sparked the most involvement.

On top of that, when you know what keeps people engaged, you can build future programs that feel less like homework and more like something people actually want to show up for.

Facilitator and Delivery Survey Questions

Sample questions

How effective was the facilitator or instructor in delivering the program?

How clearly were concepts and expectations explained?

How responsive was the facilitator to participant questions or concerns?

Did the facilitator create a supportive and inclusive learning environment?

What feedback do you have about the way the program was delivered?

Great delivery can make strong content land, while weak delivery can make even useful material feel like wallpaper.

Why & When to Use

Use facilitator and delivery survey questions when you want to evaluate how well a program was taught, guided, explained, and supported.

They are especially useful for programs led by trainers, instructors, coaches, mentors, speakers, or internal staff.

Here’s the thing: even excellent content can fall flat if the delivery feels confusing, rushed, or hard to connect with.

That means delivery quality often shapes satisfaction just as much as the material itself, which is mildly unfair but very real.

These questions help you assess things like:

clarity of instruction

communication style

responsiveness to questions

facilitator preparedness

tone, support, and inclusiveness

Plus, they give you practical insight into whether participants felt guided or just politely left to figure things out alone.

When writing these questions, keep the wording neutral so you get honest feedback instead of accidentally steering people toward praise or criticism.

On top of that, anonymous surveys usually lead to more candid responses about facilitators, especially when participants worry about being too direct.

Used well, this survey type helps you improve presenter effectiveness, strengthen participant support, and make future delivery smoother, clearer, and a lot more human.

A systematic review found facilitator delivery was associated with at least one parent or child outcome in most parenting-program studies, supporting post-program delivery surveys (source).

Program Impact Survey Questions

Sample questions

How has the program affected your work, studies, or daily activities?

Have you applied what you learned since completing the program?

What measurable results, if any, have you seen after the program?

How has the program influenced your confidence or decision-making?

What lasting value did the program provide?

Impact questions show whether your program changed anything beyond the applause at the end.

Why & When to Use

Use program impact survey questions when you want to find out whether a program led to meaningful change in behavior, performance, confidence, or real-world outcomes.

They work especially well for leadership development, workforce training, youth programs, nonprofit services, coaching, and educational interventions.

Here’s the thing: immediate feedback tells you how people felt right after the experience, but impact feedback tells you what actually stuck once real life barged back in.

That difference matters because a program can feel inspiring on day one and still produce zero change by day thirty.

These questions are most useful in follow-up surveys sent after participants have had time to apply what they learned, such as:

30 days after completion

60 days after completion

90 days after completion

Plus, this timing gives you a better shot at spotting behavior change instead of collecting fresh enthusiasm in a nicer outfit.
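If you schedule follow-ups by hand, a few lines of code can work out the send dates for you. The sketch below assumes the 30, 60, and 90 day offsets listed above; the function name and the example completion date are hypothetical and only there for illustration.

```python
from datetime import date, timedelta

# Follow-up offsets in days, taken from the 30/60/90-day schedule above.
FOLLOW_UP_OFFSETS = (30, 60, 90)


def follow_up_dates(completion, offsets=FOLLOW_UP_OFFSETS):
    """Return the date each follow-up survey should go out."""
    return [completion + timedelta(days=days) for days in offsets]


if __name__ == "__main__":
    # Hypothetical completion date, for illustration only.
    for send_on in follow_up_dates(date(2025, 3, 1)):
        print(send_on.isoformat())
```

Swap in your own completion date, or change the offsets if your program needs a different follow-up rhythm.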

When writing impact questions, look for signs of:

implementation of new skills

improved performance or results

stronger confidence or decision-making

changes in habits, actions, or outcomes

On top of that, ask for measurable examples when possible so responses move beyond vague praise and into evidence you can actually use.

Improvement and Future Planning Survey Questions

Sample questions

What is one thing we should improve for future participants?

Which part of the program should be expanded or reduced?

Were there any topics you wish had been included?

How could the program schedule or format be improved?

What would make this program more useful or relevant in the future?

Improvement questions turn feedback into a practical to-do list instead of a polite shrug.

Why & When to Use

Use improvement and future planning survey questions when you want clear ideas for making the next version of your program better.

They work best when you need direct participant input on content, format, scheduling, communication, and support.

Here’s the thing: broad prompts like “any feedback?” often give you fuzzy answers that sound nice but help exactly nobody.

Specific questions give you details you can actually use, which is much better for your team and slightly less dramatic for your planning meeting.

These questions are especially useful after a full program cycle, when participants can reflect on what worked, what felt clunky, and what was missing.

To make responses easier to analyze, group feedback into themes like:

content

logistics

facilitation

participant support

Plus, this makes it easier to spot patterns instead of chasing one-off comments like they hold the secrets of the universe.
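If you have more comments than you can sort by hand, a rough first pass can be automated. The sketch below is one possible approach, not a prescribed method: it tags each comment with every theme whose keywords it mentions. The keyword lists and the "unsorted" bucket are assumptions made up for this example, and simple substring matching is crude, so treat the output as a starting point for human review.

```python
from collections import defaultdict

# Hypothetical keyword lists; replace them with terms from your own program.
THEME_KEYWORDS = {
    "content": ["topic", "material", "lesson", "example"],
    "logistics": ["schedule", "time", "room", "link"],
    "facilitation": ["facilitator", "instructor", "pace", "explain"],
    "participant support": ["help", "support", "question", "follow-up"],
}


def group_by_theme(comments):
    """Map each theme to the comments that mention one of its keywords."""
    grouped = defaultdict(list)
    for comment in comments:
        text = comment.lower()
        matched = False
        for theme, keywords in THEME_KEYWORDS.items():
            # Substring match: crude, and a comment can land in several themes.
            if any(word in text for word in keywords):
                grouped[theme].append(comment)
                matched = True
        if not matched:
            # Assumed catch-all bucket for comments that need a manual read.
            grouped["unsorted"].append(comment)
    return dict(grouped)
```

Calling `group_by_theme` on a list of open-ended answers returns a dictionary you can skim theme by theme, which makes repeated concerns easier to see than a flat list.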

On top of that, improvement questions help you build a more participant-centered program because they invite people to shape what comes next.

That means your updates are based on real experience, not just internal guesses dressed up as strategy.

How to Choose the Right Post Program Survey Questions

Sample questions

What decision will this survey help you make?

Which questions measure outcomes, and which ones explain why those outcomes happened?

Is this audience more likely to finish a short rating-based survey or answer a few open-ended questions?

Which questions are essential, and which ones are just nice to know?

Are any questions repetitive, unclear, or unlikely to lead to action?

The best survey questions earn their spot by helping you make a real decision.

Why & When to Use

Use this approach when you are building a post program survey from scratch or trimming one that has quietly become a small novel.

Here’s the thing: the right questions depend on your goal, your audience, and your timing.

If you want to measure satisfaction fast, use short quantitative questions like ratings, scales, or yes-or-no items.

If you want richer detail about what worked or what missed the mark, add a few qualitative questions that let people explain things in their own words.

A smart mix usually works best:

quantitative questions show patterns

qualitative questions explain the patterns

together, they give you numbers and context

Plus, survey length matters more than most people want to admit.

Use a short survey when participants are busy, the program was simple, or you only need a few clear metrics.

Use a more detailed survey when the program was longer, more complex, or tied to decisions about content, staffing, or future planning.

On top of that, cut anything redundant, vague, or unrelated to action.

If a question will not influence a real choice, it is probably just taking up space and stealing patience, which your respondents were not exactly carrying in unlimited supply.

Best Practices for Writing Post Program Survey Questions

Sample questions

Are your questions simple enough that someone can answer them without rereading?

Does each question connect to a clear program goal or decision you need to make?

Are you using the right mix of ratings, multiple choice, and open-ended questions?

Will participants get this survey while the experience is still fresh in their minds?

Have you removed any questions you do not actually plan to review or use?

A strong survey feels easy to finish and useful to answer.

Think of this section as your practical checklist for writing surveys people will actually complete, without sighing dramatically at question three.

Why & When to Use

Use these best practices when you want post program survey questions that are clear, helpful, and friendly to real humans with limited time.

Here’s the thing: good survey writing is not just about asking more questions. It is about asking better ones.

Dos

Keep questions clear, specific, and easy to answer.

Match every question to a program goal or evaluation objective.

Use a mix of rating scales, multiple-choice items, and open-ended responses.

Send the survey soon after the program, unless you are measuring long-term impact.

Keep it short enough to finish quickly.

Test wording before sending to make sure it sounds neutral and clear.

Don’ts

Do not ask leading or biased questions.

Do not use jargon, vague wording, or double-barreled questions.

Do not make every question open-ended.

Do not ask for feedback you will never review or act on.

Do not overload people with repetitive questions.

Do not forget to segment results if different groups had different experiences.

Plus, when your survey respects people’s time, you usually get better answers back. Funny how that works.

Turning Post Program Survey Insights Into Action

Sample questions

What patterns show up again and again in participant feedback?

Which survey comments point to quick fixes you can make right away?

What issues need bigger planning, budget, or staffing changes?

How do results compare across different cohorts or program cycles?

Who needs to see these findings so improvements actually happen?

Good feedback only shines when you actually use it.

Once responses come in, your job is to look for patterns, not just cherry-pick the loudest comment or the one that made you spill your coffee.

Why & When to Use

Use this step after collecting post program survey responses and before planning your next round of changes.

Here’s the thing: survey data becomes useful when you sort it into themes, spot trends, and notice concerns that keep showing up. One complaint might be random, but repeated feedback usually means something needs your attention.

A simple way to organize findings is to group them into action levels:

Quick wins, like clearer instructions, better reminders, or smoother scheduling.

Larger improvements, like updating activities, training facilitators, or adjusting session length.

Long-term strategy changes, like redesigning goals, delivery models, or support systems.

Plus, compare results across cohorts, seasons, or program cycles so you can see whether changes are actually working. That helps you separate one-off grumbles from real trends.
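A cohort comparison can be as simple as averaging one rating question per cohort and looking at the difference. The sketch below assumes a 1 to 5 satisfaction scale and responses stored as (cohort, rating) pairs; the cohort names and numbers are made up for illustration, and a small gap on a handful of responses should not be read as a real trend.

```python
from statistics import mean


def average_by_cohort(responses):
    """Average the rating for each cohort, rounded to two decimals."""
    ratings = {}
    for cohort, rating in responses:
        ratings.setdefault(cohort, []).append(rating)
    return {cohort: round(mean(values), 2) for cohort, values in ratings.items()}


# Made-up (cohort, rating) pairs, for illustration only.
sample = [("spring", 3), ("spring", 4), ("fall", 4), ("fall", 5), ("fall", 5)]
averages = average_by_cohort(sample)

# Positive change means the later cohort rated the program higher.
change = round(averages["fall"] - averages["spring"], 2)
print(averages, change)
```

Running the same comparison after each program cycle gives you a simple check on whether the changes you made are moving the numbers in the right direction.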

On top of that, share relevant findings with facilitators, stakeholders, and program leaders so the people who can act on the feedback actually see it.

The practical takeaway is simple: strong post program survey questions matter most when they lead to measurable improvements. If feedback sits in a folder forever, it is basically just very organized wallpaper.
