30 Help Desk Survey Questions to Boost Support Feedback

Discover sample help desk survey questions to improve support quality, gather more feedback, and sharpen customer service insights.

Help desk surveys are the quiet workhorses of great support. A help desk customer satisfaction survey helps you measure how people feel after an interaction, while an IT support survey or service desk assessment questionnaire helps you see whether your team is consistently fast, clear, and useful. You will usually trigger these surveys after ticket closure, during quarterly reviews, or after a rollout or implementation. The goal is simple: improve first contact resolution, benchmark service quality, reduce churn, and lift loyalty without making your users fill out a novel.

Customer Satisfaction (CSAT) Survey Questions

CSAT helps you capture the emotional temperature of a support interaction before the memory gets fuzzy.

When you want a quick read on help desk customer satisfaction, CSAT is usually your best starting point.

It works especially well right after a ticket is marked resolved, because that is the moment when your user still remembers the speed, tone, and usefulness of the interaction.

If you wait too long, you get blurry feedback.

If you ask too soon, before the person feels the issue is truly fixed, you get false confidence.

That is why timing matters so much with help desk customer satisfaction survey questions.

A good CSAT survey tells you whether the user felt supported, respected, and actually helped.

It also gives you a simple benchmark that non-analysts can understand fast.

If your score dips, you know something needs attention.

If it rises, your team is probably doing something right and deserves a tiny victory lap.

Here’s the thing: CSAT is not only about friendliness.

It measures whether the user believes the resolution was worth the time spent getting it.

That means your survey should focus on three things:

Satisfaction with the outcome.

Satisfaction with the process.

Satisfaction with the person providing support.

When you build help desk survey questions for CSAT, keep the wording plain and human.

Nobody wants to decode a corporate riddle while trying to rate a password reset.

Short questions work best, especially on mobile.

You also want a mix of rating and yes-or-no items so the survey feels easy to finish.

That balance gives you data without turning the experience into homework.

Here are five sample CSAT questions you can use in a help desk customer satisfaction survey:

“On a scale of 1–5, how satisfied are you with the resolution provided by our help desk today?”

“Did our support agent clearly explain the solution to you?”

“How would you rate the overall professionalism of our IT support team?”

“Was your issue resolved within your expected timeframe?”

“How likely are you to use our help desk again if a similar issue occurs?”

These questions work because they move from broad satisfaction to specific details.

That gives you both a headline score and clues about what shaped it.
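If you want to turn the 1–5 resolution question into that headline number, one common convention (often called "top-two-box") counts 4s and 5s as satisfied and reports them as a share of all responses. The sketch below assumes that convention; the function name `csat_score` and the threshold of 4 are illustrative choices, not a standard API.

```python
from typing import Iterable


def csat_score(ratings: Iterable[int], satisfied_threshold: int = 4) -> float:
    """Return CSAT as the percentage of 1-5 ratings at or above the threshold.

    Assumes the "top-two-box" convention: 4 and 5 count as satisfied.
    """
    ratings = list(ratings)
    if not ratings:
        raise ValueError("no ratings to score")
    if any(r < 1 or r > 5 for r in ratings):
        raise ValueError("ratings must be on a 1-5 scale")
    satisfied = sum(1 for r in ratings if r >= satisfied_threshold)
    return 100.0 * satisfied / len(ratings)


# Ten hypothetical answers to "How satisfied are you with the resolution...?"
responses = [5, 4, 4, 3, 5, 2, 5, 4, 1, 5]
print(csat_score(responses))  # prints 70.0
```

Tracking this percentage over time gives you the dip-or-rise signal, while the other four questions help explain what moved it.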

If your users say they were satisfied but still felt the explanation was unclear, your team may need better communication training.

If satisfaction is low but professionalism scores high, the issue may be process-related instead of people-related.

Plus, CSAT is one of the easiest survey types to automate.

You can send it by email, add it to a portal, or show it after a chat ends.

The easier it is to answer, the more responses you will get.

And more responses mean fewer guesses.

Research indicates transactional help desk CSAT surveys get fresher, more accurate feedback when sent immediately after ticket closure or issue resolution (Qualtrics).

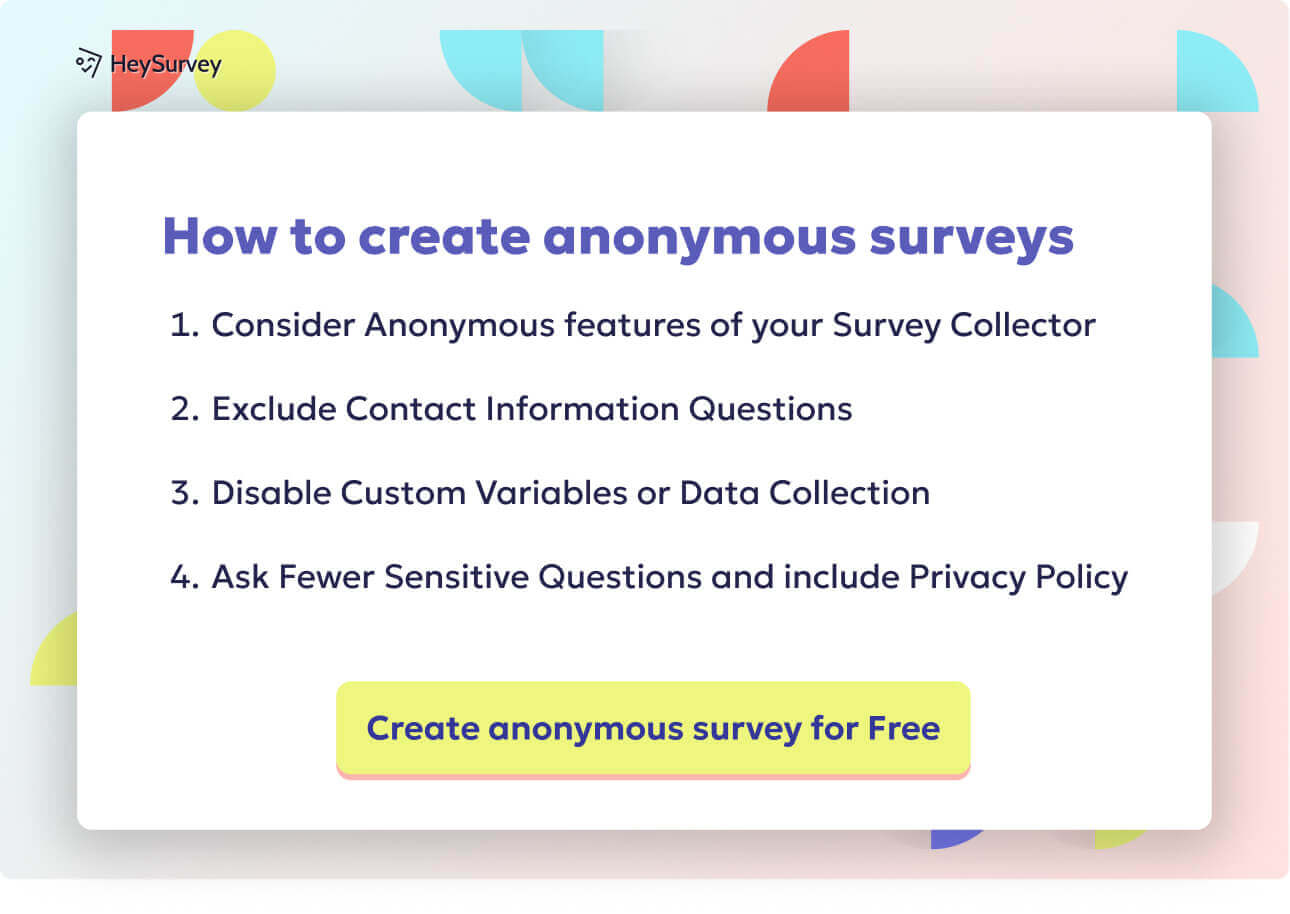

Here’s a simple way to create your survey in HeySurvey. If you want to get started quickly, you can begin from a template. You do not need an account just to begin building, but you will need one to publish and view responses later.

1. Create a new survey

Start by choosing how you want to build your survey: from a blank sheet, from a pre-built template, or by typing questions directly into HeySurvey. Once the survey opens, you can give it an internal name and begin shaping it into the survey you need.

2. Add questions

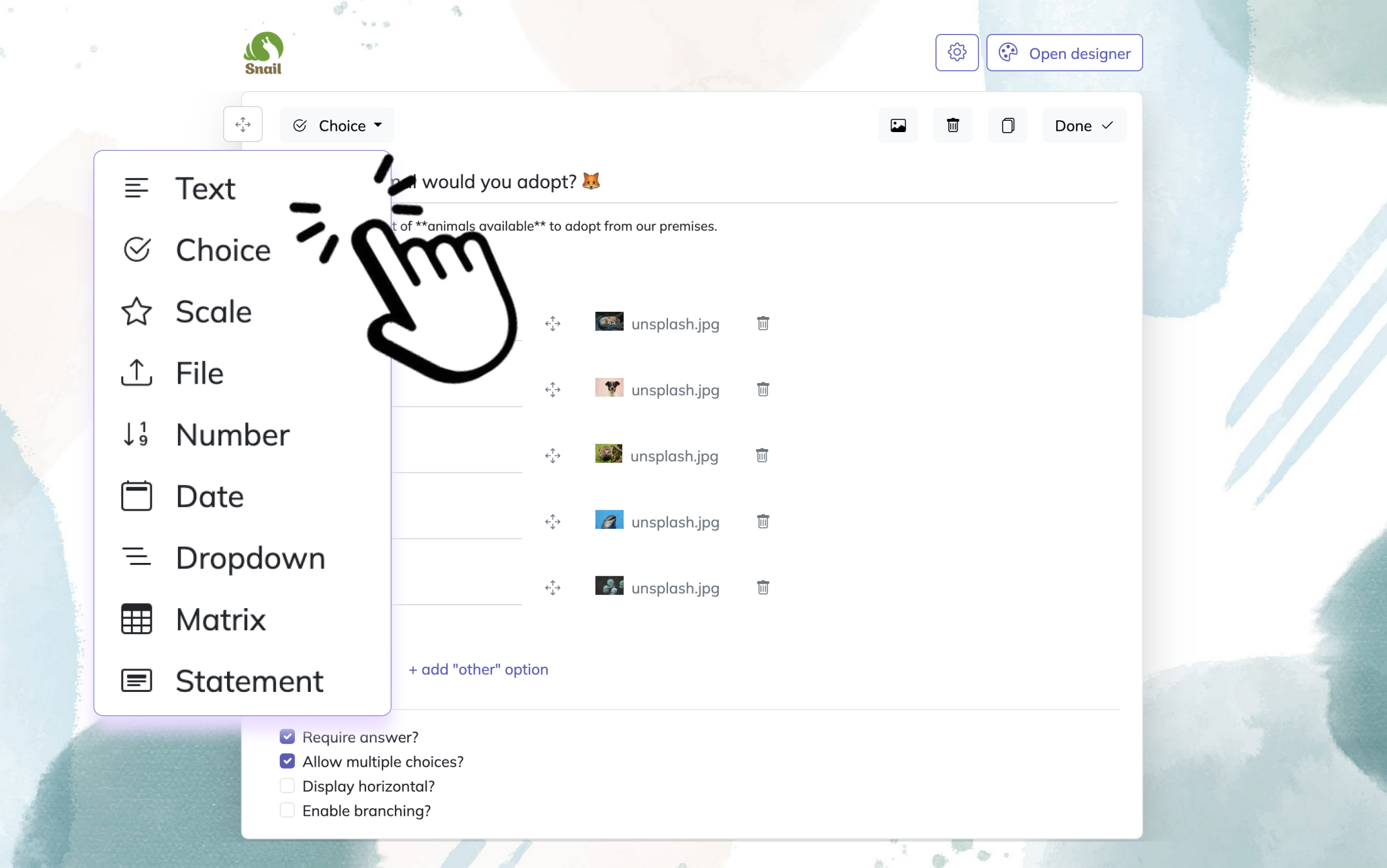

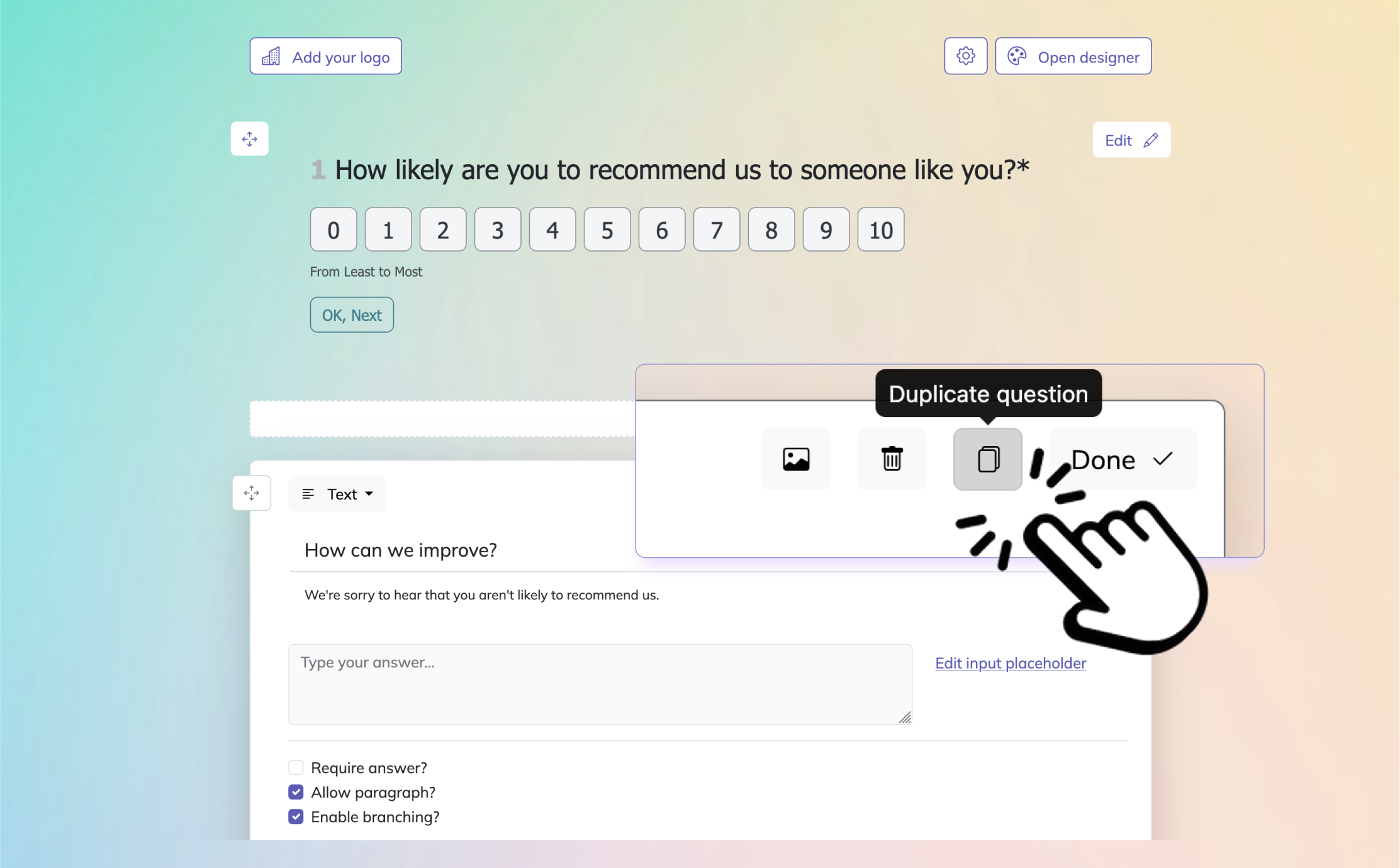

Click Add Question to insert your first question, or add one between existing questions. HeySurvey supports many question types, including text, choice, scale, dropdown, date, number, file upload, and statement. You can mark questions as required, add descriptions, duplicate questions, and include images if helpful. If your survey needs a more personal flow, you can also set up branching so different answers lead to different next questions.

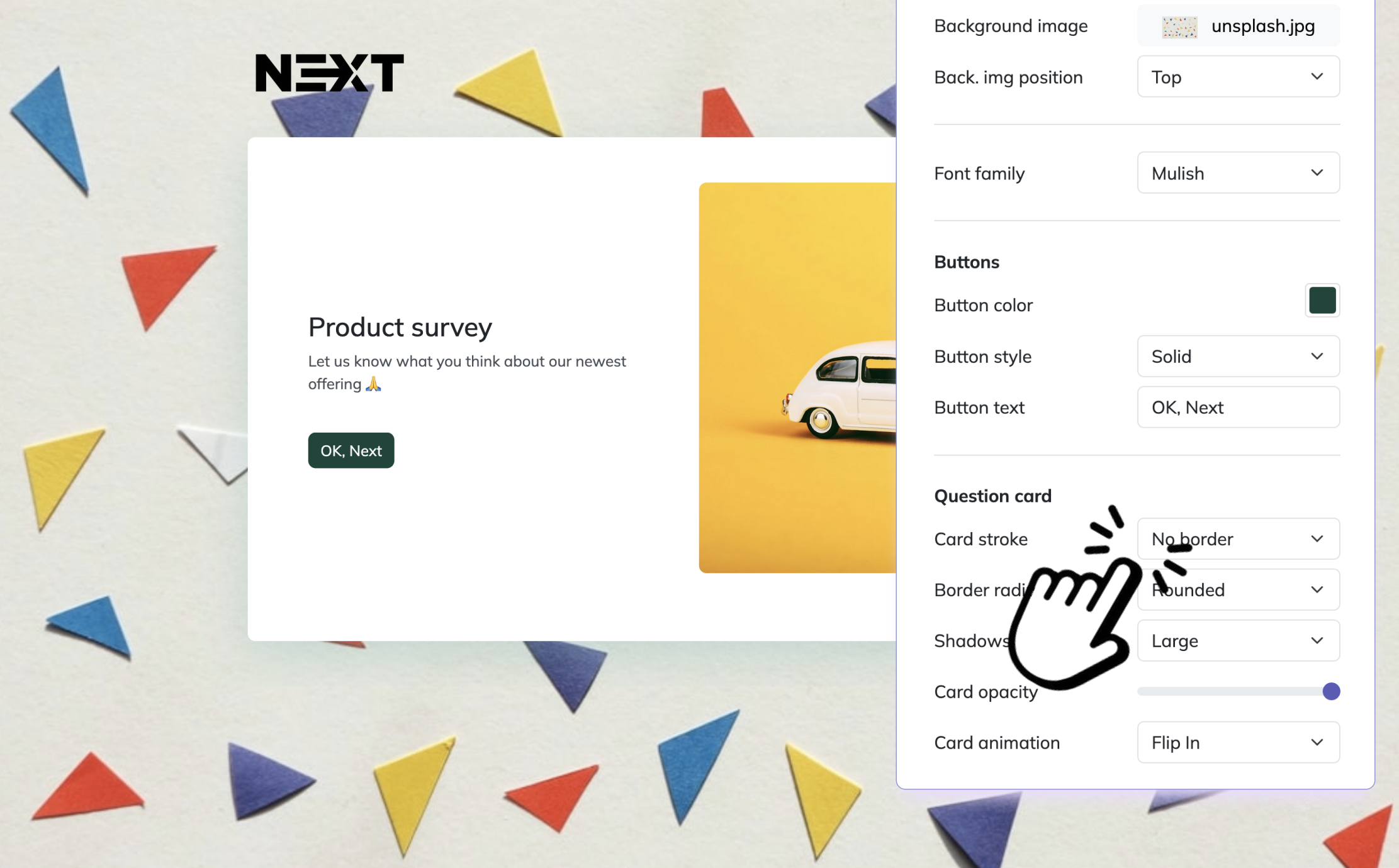

Bonus: apply branding and settings

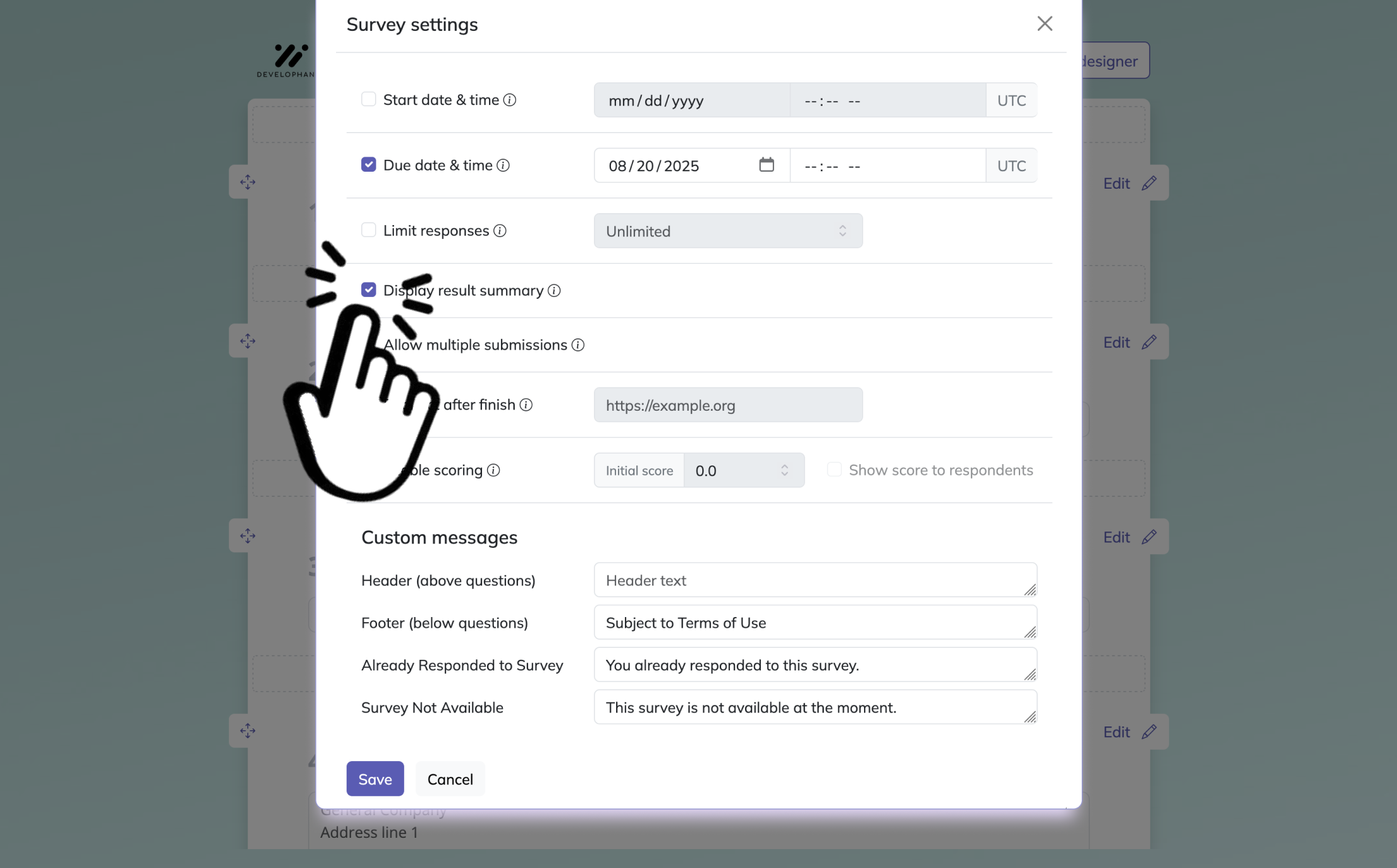

Before publishing, you can customize the survey to match your style. Add your logo, adjust colors, fonts, backgrounds, and question card design in the Designer Sidebar. In the settings panel, you can define start and end dates, response limits, redirect URLs, or allow respondents to view results where applicable.

3. Publish survey

When everything looks ready, use Preview to check the survey experience, then click Publish to generate a shareable link. Your survey is now ready for respondents on desktop, tablet, and mobile.

Customer Effort Score (CES) Survey Questions

Customer effort tells you how hard people had to work to get help, and yes, people remember hassle better than politeness.

A user might like your support agent and still feel exhausted by the experience.

That is exactly why CES matters.

In many IT environments, ease predicts loyalty as strongly as, and sometimes more strongly than, standard satisfaction measures.

If your support process feels clunky, your users notice.

They may not throw tomatoes, but they will remember the friction.

CES works best right after ticket closure or inside a live support flow.

That is because effort is easiest to judge in the moment.

Users can still recall whether they had to repeat details, jump channels, or click through five confusing screens just to ask a simple question.

This makes CES especially useful in IT support survey programs where speed and simplicity are major goals.

A strong CES survey focuses on the user’s workload, not your team’s internal effort.

That distinction matters.

Your agent may have worked heroically behind the scenes, but if the user had to explain the same issue three times, the experience still felt difficult.

That is the part you need to measure.

You should use CES when you want to improve:

Channel routing.

Self-service usability.

Handoff quality between agents.

Ticket workflow simplicity.

The overall smoothness of the support journey.

Unlike CSAT, which asks “Were you happy?”, CES asks “Was this easy?”

That is a different question with very practical value.

A high-effort experience often points to broken workflows, messy escalation paths, or poor documentation.

Those are fixable problems, which is good news.

The bad news is users are not usually shy about noticing them.

Here are five sample CES questions for your help desk satisfaction survey:

“The company made it easy for me to resolve my issue.” (Rate from strongly disagree to strongly agree.)

“How many times did you contact us for this single issue?”

“Rate the effort you personally had to invest to get your problem solved.”

“Did you have to repeat your information to multiple agents?”

“How quickly could you locate the right support channel (phone, chat, portal)?”

These questions help you spot process friction quickly.

If users consistently report high effort, you may need to simplify intake forms, improve channel guidance, or reduce handoffs between teams.
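For the agreement-style question, a simple way to summarize results is the average score plus the share of clearly high-effort answers. This sketch assumes a 7-point scale (1 = strongly disagree, 7 = strongly agree), so low scores signal high effort; the cutoff of 3 and the function name `ces_summary` are hypothetical choices you would tune to your own scale.

```python
from statistics import mean
from typing import Dict, List


def ces_summary(agreement_scores: List[int], high_effort_cutoff: int = 3) -> Dict[str, float]:
    """Summarize "The company made it easy for me to resolve my issue" answers.

    Assumes a 7-point scale where 1 = strongly disagree and 7 = strongly agree,
    so LOW scores mean the user experienced HIGH effort.
    """
    if not agreement_scores:
        raise ValueError("no responses to summarize")
    if any(not 1 <= s <= 7 for s in agreement_scores):
        raise ValueError("scores must be on a 1-7 scale")
    high_effort = [s for s in agreement_scores if s <= high_effort_cutoff]
    return {
        "average": float(mean(agreement_scores)),
        "high_effort_share": len(high_effort) / len(agreement_scores),
    }


print(ces_summary([7, 6, 2, 5, 3, 7, 1, 6, 5, 6]))
# prints {'average': 4.8, 'high_effort_share': 0.3}
```

A rising high-effort share can be a more actionable alarm than a small dip in the average, because it points you to the specific tickets worth reviewing for repeated details or extra handoffs.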

On top of that, CES can be very helpful when reviewing in-app support, chat support, and self-service experiences.

If users can find help quickly and solve issues without extra steps, that is a strong sign your support design is working.

If not, your process may be playing a little game of hide-and-seek with the answer.

Gartner research found 96% of customers with high-effort service interactions become more disloyal, underscoring why CES questions matter in help desk surveys.

Net Promoter Score (NPS) Survey for IT Support

NPS shows whether people trust your help desk enough to recommend it, which is a fancy way of asking whether your support has built real loyalty.

Unlike ticket-level surveys, NPS is not mainly about one interaction.

It is about your user’s overall relationship with the service desk over time.

That makes it useful for monthly or quarterly check-ins when you want to understand bigger patterns in perception.

A single good ticket can lift CSAT.

A strong NPS usually means your team has earned trust more consistently.

This is why NPS belongs in any mature help desk customer satisfaction survey program.

It helps you identify promoters, passives, and detractors.

Those groups matter because they signal different risks and opportunities.

Promoters are your fans.

Passives are unconvinced.

Detractors may not be writing dramatic speeches about your ticketing system, but they are often the first sign of hidden service problems.
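The standard NPS convention treats 9–10 as promoters, 7–8 as passives, and 0–6 as detractors, then subtracts the detractor percentage from the promoter percentage, giving a score between -100 and +100. A minimal sketch of that arithmetic (the function names are illustrative):

```python
from typing import List


def nps_segment(score: int) -> str:
    """Classify one 0-10 answer using the standard NPS bands."""
    if not 0 <= score <= 10:
        raise ValueError("NPS answers must be between 0 and 10")
    if score >= 9:
        return "promoter"
    if score >= 7:
        return "passive"
    return "detractor"


def nps(scores: List[int]) -> float:
    """Return % promoters minus % detractors (range -100 to +100)."""
    if not scores:
        raise ValueError("no responses to score")
    segments = [nps_segment(s) for s in scores]
    promoters = segments.count("promoter")
    detractors = segments.count("detractor")
    return 100.0 * (promoters - detractors) / len(scores)


answers = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]
print(nps(answers))  # 5 promoters, 3 detractors out of 10 -> prints 20.0
```

Passives count in the denominator but on neither side of the subtraction, so converting a detractor into a promoter moves the score twice as much as converting a passive.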

For IT support teams, NPS is especially useful when you want to know whether users believe future support will be dependable.

That forward-looking trust is powerful.

If someone believes your team will handle the next issue well, they are more likely to engage with support early instead of delaying, escalating, or trying random fixes that create bigger problems.

NPS also works well for spotting changes over time.

You can compare results quarter to quarter and connect movement to operational changes such as staffing shifts, new tools, portal redesigns, or updated SLAs.

That gives leadership something more solid than “people seem cranky lately.”

Here are five sample NPS questions for an IT support survey:

“On a 0–10 scale, how likely are you to recommend our help desk to a colleague or friend?”

“What’s the primary reason for your score?”

“How satisfied are you with the consistency of service you receive from our IT support?”

“How confident are you that future issues will be handled effectively?”

“How has your perception of our help desk changed over the last six months?”

These questions help you move from score to story.

The first question gives you the NPS number.

The rest explain what sits behind it.

That matters because a score alone cannot tell you whether users are frustrated by delays, inconsistency, poor communication, or weak follow-through.

Plus, NPS can complement your other support survey questions beautifully.

CSAT tells you how one interaction felt.

CES tells you how hard it was.

NPS tells you whether the whole support experience is earning loyalty.

Put together, that is much more useful than one lonely metric trying to do all the work.

First Contact Resolution (FCR) Feedback Survey

FCR surveys help you confirm whether “resolved on first contact” is actually true in the eyes of the person who asked for help.

Internal metrics can look impressive on dashboards.

Users, however, are wonderfully honest.

If your system says a ticket was closed on first contact but the user still had to follow up, reopen the issue, or seek help elsewhere, your FCR number is inflated.

That is exactly why this survey matters.
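One way to quantify that inflation is to compare the internally tagged FCR rate with the rate your survey answers actually support. The sketch below assumes a hypothetical `Ticket` record holding the closure-code flag and the user's yes/no answer to the "fully resolved during your first interaction" question. It also assumes survey respondents are representative of all tagged tickets, which is worth sanity-checking against your response rate.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Ticket:
    ticket_id: str
    tagged_fcr: bool  # closure code says "resolved on first contact"
    survey_fully_resolved: Optional[bool] = None  # user's answer; None = no reply


def fcr_rates(tickets: List[Ticket]) -> Tuple[float, Optional[float]]:
    """Return (internal FCR rate, survey-adjusted FCR rate).

    The adjusted rate scales the internal rate by the share of surveyed,
    FCR-tagged tickets whose users confirmed the fix.
    """
    if not tickets:
        raise ValueError("no tickets")
    tagged = [t for t in tickets if t.tagged_fcr]
    internal_rate = len(tagged) / len(tickets)
    answered = [t for t in tagged if t.survey_fully_resolved is not None]
    if not answered:
        return internal_rate, None  # no survey evidence yet
    confirmed_share = sum(t.survey_fully_resolved for t in answered) / len(answered)
    return internal_rate, internal_rate * confirmed_share


tickets = (
    [Ticket(f"T{i}", True, True) for i in range(3)]   # tagged, user confirmed
    + [Ticket("T3", True, False)]                      # tagged, user says "No"
    + [Ticket(f"T{i}", True) for i in range(4, 8)]     # tagged, no survey reply
    + [Ticket("T8", False), Ticket("T9", False)]       # not tagged as FCR
)
internal, adjusted = fcr_rates(tickets)
print(f"internal {internal:.0%}, survey-adjusted {adjusted:.0%}")
# prints internal 80%, survey-adjusted 60%
```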

You should send an FCR survey within one to two hours after closure when the ticket is tagged as resolved on first contact.

That timing keeps the experience fresh.
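If your ticketing tool lets you schedule follow-ups, that timing rule can be expressed as a small function. This is a sketch: the one-hour delay is one point inside the one-to-two-hour window, and the helper names are hypothetical rather than part of any particular help desk product.

```python
from datetime import datetime, timedelta
from typing import Optional

SEND_DELAY = timedelta(hours=1)     # earliest send: one hour after closure
SEND_DEADLINE = timedelta(hours=2)  # latest send: two hours after closure


def fcr_survey_send_time(closed_at: datetime, tagged_fcr: bool) -> Optional[datetime]:
    """Return when to send the FCR survey, or None if the ticket is not FCR-tagged."""
    if not tagged_fcr:
        return None
    return closed_at + SEND_DELAY


def still_in_window(closed_at: datetime, now: datetime) -> bool:
    """True while a delayed send (e.g. after a queue backlog) is within two hours of closure."""
    return now - closed_at <= SEND_DEADLINE


closed = datetime(2024, 5, 6, 14, 30)
print(fcr_survey_send_time(closed, tagged_fcr=True))          # prints 2024-05-06 15:30:00
print(still_in_window(closed, datetime(2024, 5, 6, 17, 0)))  # prints False
```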

It also reduces the chance that the user confuses the interaction with another recent issue, which can happen more often than support teams like to admit.

FCR is one of the most important indicators in help desk customer satisfaction because it combines efficiency with effectiveness.

Users love quick support, but only if it truly fixes the problem.

A fast non-solution is still a non-solution.

It just arrives sooner.

That is not exactly the kind of speed trophy you want.

A strong FCR feedback survey helps you verify:

Whether the issue was fully resolved the first time.

Whether the explanation was clear enough to act on.

Whether the interaction length felt reasonable.

Whether the user would have preferred a different resolution path.

You also gain a useful reality check on agent notes and closure codes.

If users repeatedly report incomplete fixes on first-contact tickets, you may need better closure rules, stronger quality reviews, or more training on issue confirmation.

Here are five sample FCR questions to include:

“Was your issue fully resolved during your first interaction with our agent?”

“If ‘No,’ what additional steps were required?”

“How long did the initial interaction take from start to finish?”

“Please rate the clarity of the solution provided on first contact.”

“Would you have preferred a follow-up instead of immediate resolution?”

These questions help you distinguish speed from success.

That distinction is vital.

A team can chase first contact resolution too aggressively and end up closing tickets before users feel confident the issue is gone.

This survey keeps your metric honest.

On top of that, FCR feedback can reveal where certain issue types should not be pushed into one-touch resolution goals.

Some problems genuinely need a callback, escalation, or deeper troubleshooting.

There is no shame in that.

The real mistake is pretending every problem should be solved in one magical burst of keyboard wizardry.

Qualtrics reports that higher first contact resolution likely correlates with better CSAT and lower customer effort, making FCR questions essential in help desk surveys.

IT Service Quality / Service Desk Assessment Questionnaire

A service desk assessment questionnaire helps you step back and judge the quality of your support operation as a whole, not just one ticket at a time.

This type of survey is broader than CSAT, CES, or FCR.

It is usually run quarterly or twice a year, often alongside ITIL or ISO-aligned review cycles.

Instead of focusing on one interaction, it evaluates the larger support ecosystem.

That includes SLA performance, escalation quality, self-service content, and communication habits.

This is where a service desk assessment questionnaire becomes especially valuable.

It helps you understand whether your help desk is simply busy or genuinely effective.

Those are not always the same thing.

A team can close lots of tickets and still frustrate users with poor updates, weak knowledge articles, or slow escalation handling.

This survey helps uncover those deeper issues.

When you build an assessment questionnaire, focus on dimensions that users can reasonably judge.

You do not need to ask them to evaluate backend architecture or incident categorization logic.

You do want to ask whether they felt informed, supported, and able to access useful resources.

A strong service quality questionnaire often explores:

SLA adherence from the user’s point of view.

Knowledge base usefulness and accuracy.

Escalation experience.

Proactive communication during known issues.

Resource gaps that affect support outcomes.

These topics matter because they shape trust.

If users feel your team communicates clearly during outages and escalates issues smoothly, they are more forgiving even when resolution takes time.

If they feel left in the dark, frustration grows quickly.

Nothing spices up a Monday quite like mysterious silence during a system outage.

Here are five sample service quality questions:

“How satisfied are you with our adherence to published SLAs?”

“Rate the accuracy of the knowledge base articles linked in your ticket.”

“How effective was the escalation path when your issue required higher-tier support?”

“Do you feel the help desk proactively communicates known outages or issues?”

“What additional resources would improve your support experience?”

These questions give you practical improvement signals.

If knowledge base ratings are low, you may need better article ownership and review cycles.

If escalation scores lag, your handoff process may be too slow or too opaque.

Plus, this kind of questionnaire works well as part of a broader helpdesk feedback survey template.

It adds strategic depth to your survey program and keeps you from relying only on post-ticket snapshots.

In-App or Chat Support Micro-Survey Questions

Micro-surveys are tiny but mighty because they capture feedback in the exact moment support happens.

If you offer support inside a SaaS product or mobile app, an in-app or chat survey is one of the simplest ways to gather immediate insight.

It appears right after the conversation ends, while the user still remembers the wait time, the clarity of the answer, and whether they had to leave the product to finish the job.

That timing reduces recall bias.

It also makes this format ideal when you are working out how to write questions for in-app support flows that feel natural instead of disruptive.

Micro-surveys need to be short.

Very short.

Users are still trying to get back to what they were doing, so the survey should feel like a quick tap, not an ambush.

That means you should ask only the highest-value questions.

The goal is not to collect everything.

The goal is to collect what is most actionable in the smallest possible space.

This format works especially well for measuring:

Response time satisfaction.

Clarity of agent communication.

Channel effectiveness.

Whether the user had to leave the app.

One clear improvement idea.

Because these surveys live inside the product experience, wording matters even more.

Keep the questions conversational.

Use plain language.

Avoid technical phrasing unless your users are very technical and expect it.

A chat support survey should feel like a helpful follow-up, not a tiny legal document.

Here are five sample in-app or chat support micro-survey questions:

“Did our live chat answer your question completely?”

“How do you rate the response time of our in-app support?”

“Was the agent’s language clear and jargon-free?”

“Did you have to leave the app to resolve your issue?”

“What could we do to improve the in-app support experience?”

These questions are compact, clear, and easy to answer on a phone or in a browser.

They also connect directly to moments your team can improve, such as staffing, script quality, onboarding, and product-support integration.

Plus, micro-surveys are excellent for spotting friction that never makes it into longer surveys.

A user might ignore an email survey later, but they are much more likely to tap a quick answer right after chat.

Sometimes the best feedback arrives when you ask before the memory has time to wander off for coffee.

Internal IT Support (Employee) Satisfaction Survey

Internal IT surveys help you understand how your own employees experience support, which matters because a slow fix inside the company can stall real work fast.

External customer support gets a lot of attention.

Internal support deserves just as much.

When employees cannot access systems, resolve device issues, or get clear answers from corporate IT, productivity drops and frustration rises.

That is why an internal IT support survey should be part of your regular feedback rhythm.

These surveys are often sent monthly or tied to closed internal tickets.

They focus on how well the IT helpdesk supports employees in doing their jobs, not just whether the ticket technically closed.

That distinction is important.

An issue may be marked resolved, but if the employee still lost half a day of work, the experience was not exactly sparkling.

Internal surveys should reflect the employee context.

Ask about speed, clarity, professionalism, and whether the fix helped them resume work quickly.

You also want to identify recurring problems, because repeated technical issues often signal process gaps, aging equipment, weak documentation, or support bottlenecks.

Good internal survey design helps you uncover all of that without making employees feel interrogated.

Areas worth measuring include:

Response speed.

Effect on productivity.

Courtesy and professionalism.

Recurring unresolved issues.

The single change that would help most.

These questions create a fuller picture of internal service quality.

They also help IT leaders prioritize improvements that affect day-to-day operations across departments.

If many employees say they cannot return to work immediately after support, that points to resolution quality, not just staffing speed.

Here are five sample internal IT survey questions:

“How satisfied are you with the speed of our internal IT helpdesk?”

“Did the solution provided allow you to resume work immediately?”

“Rate the courtesy and professionalism of IT staff.”

“Have you experienced recurring issues that remain unresolved?”

“What one change would most improve IT support for you?”

These questions are practical and direct.

They work because employees can answer them honestly based on lived experience.

On top of that, internal support data can complement your IT help desk survey questions for external users.

The environments differ, but the core themes are similar: speed, clarity, trust, and useful outcomes.

And yes, even coworkers notice when support feels like a maze with a ticket number.

Periodic Help Desk Benchmarking & Continuous-Improvement Survey

Benchmarking surveys help you see the bigger picture by combining multiple support metrics into one long-view checkup.

A periodic benchmarking survey is not meant to replace your other surveys.

It brings them together.

Usually sent annually or semi-annually, this format blends ideas from CSAT, CES, NPS, and service quality reviews so you can compare performance over time and spot trends that ticket-level surveys might miss.

This is especially useful if you want to track your help desk customer satisfaction survey program in a more strategic way.

A well-built benchmarking survey tells you whether your help desk is moving forward, standing still, or quietly sliding backward while everyone is too busy closing tickets to notice.

It also helps you compare channels.

Phone support may score well for urgency, while portal support may score better for convenience.

Chat may win on speed but lose on depth.

Without periodic review, those patterns are easy to miss.
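A simple way to make those channel patterns visible is to average ratings per channel for each period and compare only the channels present in both. The sketch assumes responses arrive as hypothetical `(channel, rating)` pairs on a 1–5 scale; the function names are illustrative.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple

Response = Tuple[str, int]  # (channel, 1-5 rating)


def channel_averages(responses: Iterable[Response]) -> Dict[str, float]:
    """Average rating per support channel."""
    by_channel: Dict[str, List[int]] = defaultdict(list)
    for channel, rating in responses:
        by_channel[channel].append(rating)
    return {channel: float(mean(r)) for channel, r in by_channel.items()}


def period_change(previous: Iterable[Response], current: Iterable[Response]) -> Dict[str, float]:
    """Change in average rating for channels measured in both periods."""
    before = channel_averages(previous)
    after = channel_averages(current)
    return {ch: after[ch] - before[ch] for ch in sorted(after.keys() & before.keys())}


last_year = [("chat", 4), ("chat", 5), ("phone", 4), ("portal", 3)]
this_year = [("chat", 4), ("chat", 4), ("phone", 5), ("portal", 4), ("email", 3)]
print(period_change(last_year, this_year))
# prints {'chat': -0.5, 'phone': 1.0, 'portal': 1.0}
```

Channels that appear in only one period, like email here, are left out of the comparison rather than being reported as a change.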

The survey should stay focused on year-over-year comparison and broad operational priorities.

That means asking about overall performance, preferred support channels, changes in satisfaction, and the most valuable improvement opportunities.

You are not trying to examine every single interaction here.

You are trying to understand the support experience at the system level.

A benchmarking survey can help you assess:

Overall performance trends.

Channel effectiveness.

Changes in user sentiment since the last period.

The improvement with the highest potential impact.

New tools or process ideas from users.

That final point matters more than some teams expect.

Users often know exactly where the friction lives.

Sometimes they will hand you the improvement idea almost gift-wrapped.

All you have to do is ask, and then resist the ancient organizational tradition of doing absolutely nothing with it.

Here are five sample benchmarking questions:

“Overall, how would you rate the performance of our help desk this year?”

“Which support channel (phone, email, chat, portal) is most effective for you?”

“How has your satisfaction with the help desk changed since last year?”

“What single improvement would have the biggest impact on your experience?”

“Would you recommend any new tools or processes to enhance support?”

These questions support meaningful comparisons over time.

They also help you prioritize roadmap decisions with real user input rather than assumptions.

Plus, by pairing this survey with your regular helpdesk feedback survey template, you create a stronger cycle of measurement, learning, and action.

Best Practices: Dos and Don’ts for Writing High-Impact Help Desk Survey Questions

The best survey questions are short, clear, useful, and respectful of your user’s time.

Writing strong support survey questions is not complicated, but it does require discipline.

You want to collect honest feedback without exhausting the person giving it.

That means every question should earn its place.

If a question will not drive a decision, improvement, or insight, it probably does not belong.

The best support survey questions follow a few simple rules.

Keep surveys short enough to finish in under a minute when possible.

Use language that sounds human.

Mix rating scales with one or two open-ended prompts so you get both trend data and real context.

Automate your trigger points so surveys arrive at the right moment, whether that is after ticket closure, after chat, or during a periodic review.

Most important of all, close the feedback loop.

If people share concerns and never see change, response rates and trust both drop.

Useful survey writing habits include:

Keep each question focused on one idea.

Use plain wording instead of technical or corporate jargon.

Match the survey length to the interaction type.

Make forms mobile-friendly and easy to tap through.

Ensure accessibility with readable text, clear contrast, and simple layouts.

On top of that, avoid common mistakes.

Do not write leading questions that push people toward a flattering answer.

Do not ask for unnecessary personal data.

Do not blast surveys after every tiny interaction, because survey fatigue is real and relentless.

And never ignore negative feedback, even if it arrives with the emotional energy of a soggy rain cloud.
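One way to enforce that restraint automatically is a per-user cooldown, so nobody receives a new survey until a set interval has passed since their last one. The sketch below keeps state in memory for illustration; the 14-day default and the `SurveyThrottle` name are hypothetical and should be tuned to your ticket volume.

```python
from datetime import datetime, timedelta
from typing import Dict


class SurveyThrottle:
    """Allow at most one survey per user within a cooldown window."""

    def __init__(self, cooldown: timedelta = timedelta(days=14)) -> None:
        self.cooldown = cooldown
        self._last_sent: Dict[str, datetime] = {}

    def try_send(self, user_id: str, now: datetime) -> bool:
        """Return True (and record the send) if this user is outside the cooldown."""
        last = self._last_sent.get(user_id)
        if last is not None and now - last < self.cooldown:
            return False
        self._last_sent[user_id] = now
        return True


throttle = SurveyThrottle()
start = datetime(2024, 5, 1)
print(throttle.try_send("alice", start))                       # prints True
print(throttle.try_send("alice", start + timedelta(days=3)))   # prints False
print(throttle.try_send("alice", start + timedelta(days=15)))  # prints True
```

In a real deployment you would likely persist the last-sent timestamps alongside the user record so the rule survives restarts.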

Bad survey design usually fails in one of two ways.

It is either too vague to be useful or too long to be completed.

Both are avoidable.

A thoughtful set of help desk customer satisfaction survey questions should feel easy for the user and valuable for the team.

That is the sweet spot.

If you are building a helpdesk feedback survey template, remember that accessibility matters too.

People may open your survey on a phone, tablet, or desktop, or through a screen reader.

If the design is awkward, your completion rate will suffer before your carefully crafted question even gets a chance.

The smartest survey is the one your users can actually finish.

Good help desk surveys do not need to be flashy to work. They need to be timely, clear, and tied to real improvements. If you use the right mix of CSAT, CES, NPS, FCR, service quality, in-app, internal, and benchmarking questions, you will get a much sharper view of what support feels like from the user side. Plus, you will know where to simplify, where to train, and where to fix the process instead of guessing. That is how better support gets built, one smart question at a time.
